Google just changed how developers do research. On April 21, 2026, it launched Deep Research Max. It runs on Gemini 3.1 Pro and is not just another chatbot upgrade. It is an autonomous AI research agent: it plans, searches, reads, reasons, and writes, all from a single API call. At the end, you get a fully cited report back.

If you build AI apps, this guide is for you. You'll learn how it works, set it up, and run your first research task today.

What is Deep Research Max?

Deep Research Max works like a research analyst that you reach through an application programming interface (API). When you give it a difficult question, it builds a research plan. It then follows that plan to search the web and analyze your documents before producing a referenced report.

Google launched Deep Research in December 2025 with basic summarization and limited capabilities. That version had no visuals, no external integrations, and no access to private data. The April 2026 version marks a major upgrade.

Deep Research Max runs on Gemini 3.1 Pro, which scores 77.1% on ARC-AGI-2, more than twice Gemini 3 Pro's score. Deep Research Max adds autonomous research on top of that reasoning ability.

What is the difference between Deep Research and Deep Research Max?

Google shipped two Deep Research agents, not one, and your workflow determines which one you should pick.

- The standard Deep Research agent (`deep-research-preview-04-2026`) is built for speed. It returns results faster by running fewer search queries and processing fewer tokens. Use it when a user is waiting on the other end of the screen: interactive dashboards, chat interfaces, and quick lookups.
- Deep Research Max (`deep-research-max-preview-04-2026`) is built for depth. It keeps iterating with extended test-time compute until it produces an exhaustive report. Use it for background jobs such as overnight research, competitive market analysis, and literature reviews.

Here is the comparison that matters:

| Feature | Deep Research | Deep Research Max |
|---|---|---|
| Optimized for | Speed and low latency | Maximum depth and comprehensiveness |
| Best use case | Interactive UIs, dashboards | Overnight batch jobs, due diligence |
| Search queries per task | ~80 | ~160 |
| Input tokens per task | ~250K | ~900K |
| Cost per task | $1–$3 | $3–$5 |
| Typical completion time | 5–10 min | 10–20 min |
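To turn the cost row into a rough budget, the sketch below multiplies the per-task ranges from the table by a daily task count. The helper name `estimate_monthly_cost` and the 30-day default month are assumptions for illustration. Real billing depends on the tokens and queries each task actually consumes, so treat the result as a ballpark range, not a quote.

```python
# Per-task cost ranges (USD) copied from the comparison table.
COST_PER_TASK = {
    "deep-research-preview-04-2026": (1.0, 3.0),
    "deep-research-max-preview-04-2026": (3.0, 5.0),
}


def estimate_monthly_cost(agent: str, tasks_per_day: int, days: int = 30) -> tuple:
    """Return a (low, high) cost range for running `tasks_per_day` tasks for `days` days.

    Hypothetical helper: it only scales the table's per-task range and ignores
    real billing details such as token counts, query counts, or discounts.
    """
    if agent not in COST_PER_TASK:
        raise ValueError(f"Unknown agent: {agent}")
    if tasks_per_day < 0 or days < 0:
        raise ValueError("tasks_per_day and days must be non-negative")
    low, high = COST_PER_TASK[agent]
    total_tasks = tasks_per_day * days
    return (low * total_tasks, high * total_tasks)


if __name__ == "__main__":
    low, high = estimate_monthly_cost("deep-research-max-preview-04-2026", tasks_per_day=10)
    print(f"Estimated monthly cost: ${low:,.0f} to ${high:,.0f}")
```

The same arithmetic makes the trade-off concrete: at a given volume, Max costs roughly one to five times as much as the standard agent, based on the table's ranges.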

Key Features of Deep Research Max

- MCP support and native visualizations: Connect proprietary data from FactSet, S&P, PitchBook, or your own company's sources. This data can augment or replace information gathered from the open web, and the agent can produce native charts and infographics.

- Collaborative planning: You can review and approve the research plan before execution starts, which gives you more control over how the research is carried out.

- Extended tooling: The agent can combine several tools, such as search, MCP, file storage, code execution, and URL context. Combining them improves research quality and supports compliance.

- Multimodal grounding: Analyze PDFs, CSVs, images, audio, and video side by side with web information.

- Real-time streaming: Display progress, intermediate outputs, and final results as they happen.

How does Deep Research Max work?

Deep Research Max does not run through the standard generate_content endpoint. Instead, it runs only through the Interactions API, a relatively new, stateful API designed for long-running background work.

When you submit a prompt, the following happens:

- You submit your research question to the API with the `background=True` option and immediately receive an interaction ID. Your application can then carry on with whatever it was doing.
- The agent breaks your question into sub-questions, decides which tools to use, and builds a complete research plan before consulting any sources.
- The agent runs its search queries (typically around 80 for a standard task, up to 160 for Max) and analyzes the results thoroughly to identify knowledge gaps.
- This is where Max shines: the agent iterates through the research several times. It does not search once and stop. Instead, it keeps researching, drawing on different sources in each phase to confirm or contradict earlier findings.
- Finally, the agent consolidates everything into a structured, cited report. If the data warrants it, the report also includes inline charts and graphics.
- Throughout, you poll the status of the interaction. Once it reports that it has completed, you retrieve the results. The whole process is asynchronous, so your application never blocks.

Getting Started with Deep Research Max

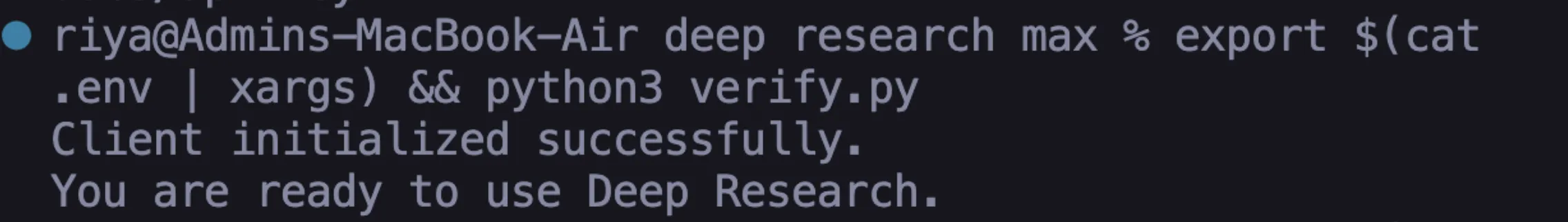

You need three things before you start writing research code: a Gemini API key, the Python SDK, and an environment variable. Setup takes about five minutes.

1. Get your Gemini API key from Google AI Studio.

2. Install the Python SDK. Once Python is installed, install the official Google GenAI client library with this command:

```bash
pip install google-genai
```

Wait for the installation to finish.

3. Set your environment variable. The SDK automatically reads your API key from an environment variable. Set it in your terminal session like this:

```bash
export GEMINI_API_KEY="your-api-key-here"
```

Replace your-api-key-here with the actual key you copied from Google AI Studio. On Windows, use the set command instead of export.

4. Verify that everything works. Create a new file called verify.py in your project folder and add this code:

```python
from google import genai

# genai.Client() picks up the API key from the GEMINI_API_KEY environment variable.
client = genai.Client()

print("Client initialized successfully.")
print("You are ready to use Deep Research.")
```

5. Run the script from your terminal:

```bash
python verify.py
```

If you see both success messages, your environment is ready.

6. If you get an API key authentication error, your key setup is wrong. Confirm that the key is correct and that GEMINI_API_KEY is set in the same terminal session you run your scripts from. Note that Deep Research is not available on the free tier.
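A missing or placeholder environment variable is an easy mistake to make, so it can help to check for one before creating a client. The sketch below inspects `GEMINI_API_KEY` locally and nothing else. It does not contact the API, so it cannot tell you whether the key is valid or whether your tier includes Deep Research. The function name `check_api_key` is hypothetical.

```python
import os
from typing import Mapping, Optional


def check_api_key(env: Optional[Mapping[str, str]] = None) -> str:
    """Return GEMINI_API_KEY from the environment, or raise a descriptive error.

    Hypothetical helper: it only checks that the variable is present and is not
    the placeholder from the setup instructions; it never calls the API.
    """
    env = os.environ if env is None else env
    key = env.get("GEMINI_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "GEMINI_API_KEY is not set. Export it in the same terminal session "
            "you use to run your scripts."
        )
    if key == "your-api-key-here":
        raise RuntimeError("GEMINI_API_KEY still holds the placeholder value.")
    return key


if __name__ == "__main__":
    try:
        check_api_key()
        print("GEMINI_API_KEY is set.")
    except RuntimeError as err:
        print(f"Setup problem: {err}")
```

Passing a mapping explicitly (for example, `check_api_key({"GEMINI_API_KEY": "abc"})`) makes the check easy to test without touching your real environment.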

Task 1: Your First Research Task

Here, you'll create your first autonomous research task. It teaches you the three core patterns: submitting a prompt, polling for status, and retrieving the final result.

Step 1: Create the script

Create a new file named first_research.py that asks the agent to research AI regulation in Europe. Feel free to change the topic.

```python
import time

from google import genai

client = genai.Client()

# Start the research job in the background; the call returns immediately.
interaction = client.interactions.create(
    input="Research the current state of AI regulation in the European Union.",
    agent="deep-research-preview-04-2026",
    background=True,
)

print(f"Research started. Interaction ID: {interaction.id}")
```

Note the two important values. agent selects the AI agent that the API uses for the research; this example uses the standard Deep Research agent because it returns results faster. background=True is required: without it, the request fails, because Deep Research always runs asynchronously.

Step 2: Build the polling loop

The API returns your interaction ID right away. Your job is to check back periodically until the research is complete. Add this polling code below the creation code:

```python
while True:
    interaction = client.interactions.get(interaction.id)

    if interaction.status == "completed":
        print("\n--- Research Complete ---\n")
        print(interaction.outputs[-1].text)  # the last output holds the final report
        break
    elif interaction.status == "failed":
        print(f"Research failed: {interaction.error}")
        break

    print("Still researching...", flush=True)
    time.sleep(10)
```

The loop checks the status every 10 seconds and prints the full report once the research completes. If something goes wrong, it prints "Research failed" followed by the error.
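The loop above waits forever if a task stalls. The sketch below is a bounded variant. It takes any `fetch` callable that returns the current interaction, lengthens the wait between checks up to a cap, and gives up after a deadline. The function name `wait_for_interaction` and the backoff numbers are assumptions, as is the rule that any status other than "completed" or "failed" means the task is still running. None of this is documented API behavior. The `sleep` and `clock` parameters exist so the function can be tested offline.

```python
import time
from typing import Any, Callable


def wait_for_interaction(
    fetch: Callable[[], Any],
    initial_delay: float = 10.0,
    max_delay: float = 60.0,
    timeout: float = 30 * 60,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Any:
    """Poll `fetch()` until the returned object's status is "completed".

    Hypothetical helper. Raises RuntimeError if the status becomes "failed"
    and TimeoutError if waiting again would pass the `timeout` deadline.
    """
    deadline = clock() + timeout
    delay = initial_delay
    while True:
        interaction = fetch()
        if interaction.status == "completed":
            return interaction
        if interaction.status == "failed":
            raise RuntimeError(f"Research failed: {getattr(interaction, 'error', None)}")
        if clock() + delay > deadline:
            raise TimeoutError("Research did not finish before the timeout.")
        sleep(delay)
        # Back off gradually so long tasks generate fewer status requests.
        delay = min(delay * 1.5, max_delay)


if __name__ == "__main__":
    from types import SimpleNamespace

    # Offline stand-in for the API: two "in_progress" responses (a made-up
    # non-terminal status), then "completed".
    statuses = iter(["in_progress", "in_progress", "completed"])

    def fake_fetch():
        return SimpleNamespace(status=next(statuses), outputs=[SimpleNamespace(text="Report")])

    result = wait_for_interaction(fake_fetch, sleep=lambda seconds: None)
    print(result.outputs[-1].text)
```

With the real client, you would capture the ID first and then pass a closure. For example, set `interaction_id = interaction.id`, then call `wait_for_interaction(lambda: client.interactions.get(interaction_id))`.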

Step 3: Run the script

```bash
python first_research.py
```

You should see "Still researching..." several times in your terminal. Most tasks finish in 5 to 15 minutes. When the task is done, you receive a fully assembled research report with complete citations.

Step 4: Review the output

Take some time to look through the report and notice how the agent organized the research into logical sections. After each claim, you'll see a citation to the source the agent used for that part of the report. You just finished work that would have taken a human tens of hours to read and write.

Task 2: Generating Native Visualizations

With Deep Research Max, the agent can produce charts directly from your data, without any third-party charting libraries, and deliver the visual report automatically.

Step 1: Request charts in the report

```python
import time

from google import genai

client = genai.Client()

prompt = """
Research the top 10 programming languages by job demand in 2026.

Include in your report:
- A bar chart comparing job postings across languages
- A trend line showing growth over the past 3 years
- A comparison table with salary ranges

Generate all charts natively inline.
"""

# Use the Max agent for this deeper, visual report.
interaction = client.interactions.create(
    input=prompt,
    agent="deep-research-max-preview-04-2026",
    background=True,
)

print(f"Visual research started. ID: {interaction.id}")
```

Save this as visual_research.py. The prompt should include the phrase "Generate all charts natively inline". That phrase asks the agent to create HTML or Nano Banana visualizations embedded directly in the report.
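If you generate many visual reports, building the prompt programmatically ensures the inline-chart instruction is never left out. The helper below is a hypothetical convenience function. It only assembles text in the same shape as the prompt above and does not change how the API behaves.

```python
def build_visual_prompt(topic: str, visuals: list) -> str:
    """Assemble a research prompt that asks for specific inline visuals.

    Hypothetical helper: it mirrors the structure of the example prompt and
    always ends with the instruction to generate charts natively inline.
    """
    if not topic.strip():
        raise ValueError("topic must not be empty")
    lines = [f"Research {topic.strip()}.", "", "Include in your report:"]
    lines += [f"- {item}" for item in visuals]
    lines += ["", "Generate all charts natively inline."]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_visual_prompt(
        "the top 10 programming languages by job demand in 2026",
        [
            "A bar chart comparing job postings across languages",
            "A comparison table with salary ranges",
        ],
    ))
```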

Step 2: Poll for results and save them as an HTML file

```python
while True:
    interaction = client.interactions.get(interaction.id)

    if interaction.status == "completed":
        # Write the report with an explicit encoding so it saves correctly on every OS.
        with open("visual_report.html", "w", encoding="utf-8") as f:
            f.write(interaction.outputs[-1].text)
        print("Saved to visual_report.html")
        break
    elif interaction.status == "failed":
        print(f"Failed: {interaction.error}")
        break

    time.sleep(10)
```

Step 3: Open visual_report.html in a web browser

The agent created the charts directly on the page: no Matplotlib, no Plotly, no JavaScript charting libraries. It generated all of them as part of the report output.

This is extremely useful for automated reporting pipelines, because you can share the report as is, with no extra post-processing.

Production Best Practices

When moving from lab scripts to production code, a few changes are necessary:

- Use a job-queue architecture instead of a while loop on your main server. Accept requests on Cloud Run, store each interaction ID in a database, and check for results with Cloud Scheduler or a cron job. This keeps your server responsive under heavy traffic.

- Persist interaction IDs and event IDs. Dropped connections are common during 20-minute research tasks, so always persist the interaction_id and the last_event_id from streaming. Reconnecting with client.interactions.get() using the persisted IDs lets you resume where you left off.
- Write specific prompts to control costs. Vague prompts trigger broad searches, which consume more tokens and time than a specific, well-defined prompt.

- Cache whenever possible. A Deep Research Max report can cost $3 to $5, so if many users ask similar questions, cache the results. Serving answers from the cache costs very little.

- Always verify citations. The agent cites its sources, but those sources come from the open web. For critical business decisions, spot-check the most important claims against the original sources: trust, but verify.
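The queue, persistence, and caching advice above can be combined in a small job store. The sketch below uses Python's built-in sqlite3 module as a stand-in for a production database. Where real code would call `client.interactions.create(...)` and `client.interactions.get(...)`, it accepts `create` and `fetch` callables instead. Several details are assumptions for illustration: the class name `ResearchJobStore`, the table layout, and the cache key (an exact match on the lowercased, whitespace-collapsed prompt). A scheduled job, such as Cloud Scheduler or cron, would call `poll_pending()` periodically instead of looping on your main server.

```python
import hashlib
import sqlite3
from typing import Any, Callable, Optional


def prompt_key(prompt: str) -> str:
    """Cache key: SHA-256 of the prompt, lowercased with whitespace collapsed."""
    normalized = " ".join(prompt.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


class ResearchJobStore:
    """Hypothetical store that persists interaction IDs and caches finished reports."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            """CREATE TABLE IF NOT EXISTS jobs (
                   prompt_key TEXT PRIMARY KEY,
                   interaction_id TEXT NOT NULL,
                   status TEXT NOT NULL,
                   last_event_id TEXT,
                   report TEXT
               )"""
        )
        self.db.commit()

    def cached_report(self, prompt: str) -> Optional[str]:
        """Return a finished report for an equivalent prompt, if one exists."""
        row = self.db.execute(
            "SELECT report FROM jobs WHERE prompt_key = ? AND status = 'completed'",
            (prompt_key(prompt),),
        ).fetchone()
        return row[0] if row else None

    def submit(self, prompt: str, create: Callable[[str], str]) -> str:
        """Start a job unless a live or finished one exists; return its interaction ID."""
        key = prompt_key(prompt)
        row = self.db.execute(
            "SELECT interaction_id, status FROM jobs WHERE prompt_key = ?", (key,)
        ).fetchone()
        if row and row[1] != "failed":
            return row[0]  # Reuse the cached or in-flight job instead of paying again.
        interaction_id = create(prompt)
        self.db.execute(
            "INSERT OR REPLACE INTO jobs (prompt_key, interaction_id, status) "
            "VALUES (?, ?, 'pending')",
            (key, interaction_id),
        )
        self.db.commit()
        return interaction_id

    def save_event_id(self, interaction_id: str, last_event_id: str) -> None:
        """Record the last streamed event ID so a dropped stream can be resumed later."""
        self.db.execute(
            "UPDATE jobs SET last_event_id = ? WHERE interaction_id = ?",
            (last_event_id, interaction_id),
        )
        self.db.commit()

    def poll_pending(self, fetch: Callable[[str], Any]) -> int:
        """Check every pending job once (e.g. from a cron job); return how many completed."""
        pending = self.db.execute(
            "SELECT interaction_id FROM jobs WHERE status = 'pending'"
        ).fetchall()
        finished = 0
        for (interaction_id,) in pending:
            interaction = fetch(interaction_id)
            if interaction.status == "completed":
                self.db.execute(
                    "UPDATE jobs SET status = 'completed', report = ? WHERE interaction_id = ?",
                    (interaction.outputs[-1].text, interaction_id),
                )
                finished += 1
            elif interaction.status == "failed":
                self.db.execute(
                    "UPDATE jobs SET status = 'failed' WHERE interaction_id = ?",
                    (interaction_id,),
                )
        self.db.commit()
        return finished
```

In a real deployment, `create` might be a function that calls `client.interactions.create(input=prompt, agent=..., background=True)` and returns `.id`. Likewise, `fetch` might be `lambda iid: client.interactions.get(iid)`. Exact-match caching is deliberately conservative; matching "similar" questions would need a different, riskier technique.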

Is Deep Research Max worth using?

Deep Research Max is not like the AI chatbots we're used to. You hand it a task, leave it alone, and check back later for a complete report, just as you would with a human analyst. You no longer have to send multiple prompts to get the right answer.

Having everything in one place also helps. Deep Research Max can look things up for you, use your own data (if available), and create charts with no extra effort on your part. I'd encourage you to start by giving Deep Research Max a small task, so you can see how well it works before handing it more.

Frequently Asked Questions

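Q. What is Deep Research Max?
A. It's an autonomous AI agent that plans, searches, analyzes, and generates fully cited reports.

Q. When should I use Deep Research Max instead of the standard Deep Research agent?
A. Use it for deeper, longer, and more comprehensive research tasks.

Q. Can Deep Research Max create charts?
A. Yes, it creates native inline charts and visual reports without external libraries.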
