Constructing a RAG system simply obtained a lot simpler. Google’s File Search software for the Gemini API now handles the heavy lifting of connecting LLMs to your information. Chunking, embedding, indexing are all managed for you. And with the most recent replace, it’s gone multimodal. Now you can search by means of each textual content and pictures in a single pipeline, with customized metadata filtering and page-level citations in-built. On this information, we’ll stroll by means of how File Search works and implement it with sensible examples.

What File Search Does?

File Search helps Gemini entry and use data out of your information sources like studies, paperwork, analysis papers, code, and personal information bases.

Whenever you add a file, Gemini breaks it into smaller items known as “chunks” and creates embeddings for them. These embeddings are numerical representations that seize the which means of the content material, serving to Gemini perceive the context. They’re then saved in a File Search Retailer for simple retrieval.

Whenever you ask a query, Gemini searches the saved embeddings for essentially the most related chunks and makes use of them as context to generate solutions. That is the essence of Retrieval Augmented Era (RAG).

Gemini File Search goes past simply textual content. It additionally helps multimodal RAG, permitting textual content and pictures to be listed and searched collectively. This implies you may retrieve data from PDFs, photos, charts, screenshots, and extra utilizing pure language queries.

For multimodal duties, Gemini makes use of gemini-embedding-2 for picture and multimodal embeddings, whereas gemini-embedding-001 handles textual content embeddings. Be aware that audio and video codecs are usually not supported but.

Additionally Learn: Constructing an LLM Mannequin utilizing Google Gemini API

How File Search Works?

File Search is powered by semantic vector search. As an alternative of matching on phrases immediately, it’ll discover data primarily based on which means and context. Which means File Search can discover you related data even when the wording of the question is completely different.

Time wanted: 4 minutes

Right here’s the way it works step-by-step:

- Add a file

The file will probably be damaged up into smaller sections known as “chunks.”

- Embedding era

Every chunk could be remodeled right into a numerical vector that represents the which means of that chunk.

- Storage

The embeddings will probably be saved in a File Search Retailer, an embedded retailer designed particularly for retrieval.

- Question

When a consumer poses a query, File Search will remodel that query into an embedding.

- Retrieval

The retrieval step will examine the query embedding with the saved embeddings and discover which chunks are most related (if any).

- Grounding

Related chunks are added to the immediate to the Gemini mannequin in order that the reply is grounded within the factual information from the paperwork.

This complete course of is dealt with beneath the Gemini API. The developer doesn’t need to handle any extra infrastructure or databases.

Setup Necessities

To make the most of the File Search Software, builders will want a number of basic elements. They might want to have Python 3.9 or newer, the google-genai consumer library, and a legitimate Gemini API key that has entry to both gemini-2.5-pro or gemini-2.5-flash.

Set up the consumer library by working:

pip set up google-genai -U Then, set your atmosphere variable for the API key:

export GOOGLE_API_KEY="your_api_key_here"Making a File Search Retailer

A File Search Retailer is the place Gemini shops and indexes embeddings created out of your uploaded recordsdata. As soon as a file is uploaded and listed, the listed information stays out there for retrieval till you manually delete it.

For text-only RAG, you may create a standard File Search Retailer. For multimodal RAG, the place you need to add and search each paperwork and pictures, create the shop with fashions/gemini-embedding-2.

from google import genai

from google.genai import sorts

import time

import os

from pathlib import Path

# Don't hardcode your API key within the pocket book.

# Set it as an atmosphere variable as an alternative.

os.environ["GOOGLE_API_KEY"] = "enter_your_api_key"

consumer = genai.Consumer(api_key=os.environ["GOOGLE_API_KEY"])

file_search_store = consumer.file_search_stores.create(

config={

"display_name": "my_multimodal_rag_store",

"embedding_model": "fashions/gemini-embedding-2"

}

)

print("File Search Retailer created:", file_search_store.identify)Output:

This replace is essential as a result of the official docs present embedding_model: fashions/gemini-embedding-2 whereas making a File Search Retailer for multimodal utilization.

Add a File

After the File Search Retailer is created, you may add recordsdata to it. When a file is uploaded, Gemini File Search mechanically chunks the content material, generates embeddings, and indexes it for quick retrieval.

For text-based RAG, File Search helps paperwork similar to PDF, DOCX, TXT, JSON, and programming recordsdata like .py and .js.

For multimodal RAG, File Search additionally helps picture recordsdata. This implies you may add paperwork and pictures into the identical File Search Retailer and ask questions that require each textual and visible context. For instance, you may add a analysis paper, a product picture, and a chart, then ask Gemini to summarize the paper and clarify the associated visible data.

For picture uploads, be certain that the File Search Retailer is created with fashions/gemini-embedding-2. In response to the official documentation, supported picture codecs are PNG and JPEG. Picture recordsdata should be at most 4K x 4K pixels, and a request can embody a most of 6 photos.

Add a Doc File

# Add and import a doc into the File Search Retailer.

# The show identify will probably be seen in citations.

operation = consumer.file_search_stores.upload_to_file_search_store(

file="/content material/Paper2Agent.pdf",

file_search_store_name=file_search_store.identify,

config={

"display_name": "Paper2Agent.pdf",

}

)

# Wait till import is full

whereas not operation.achieved:

time.sleep(5)

operation = consumer.operations.get(operation)

print("Doc efficiently uploaded and listed.")Output:

After this step, the doc is chunked, embedded, listed, and prepared for retrieval.

Add an Picture File for Multimodal Retrieval

You may also add a picture file to the identical File Search Retailer. That is helpful when your software must retrieve data from product photos, screenshots, charts, diagrams, or different visible content material.

# Add a picture file for multimodal retrieval.

operation = consumer.file_search_stores.upload_to_file_search_store(

file="/content material/product_image.jpg",

file_search_store_name=file_search_store.identify,

config={

"display_name": "product_image.jpg",

}

)

# Wait till import is full

whereas not operation.achieved:

time.sleep(5)

operation = consumer.operations.get(operation)

print("Picture efficiently uploaded and listed."Output:

As soon as the picture is listed, Gemini can retrieve it throughout File Search when the consumer’s question is related to the picture.

Add A number of Paperwork and Photos

In real-world purposes, you could need to add a number of recordsdata directly. These recordsdata can embody each textual content paperwork and pictures.

from pathlib import Path

import time

files_to_upload = [

"/content/Paper2Agent.pdf",

"/content/product_image.jpg",

"/content/sales_chart.png"

]

for file_path in files_to_upload:

operation = consumer.file_search_stores.upload_to_file_search_store(

file=file_path,

file_search_store_name=file_search_store.identify,

config={

"display_name": Path(file_path).identify,

}

)

whereas not operation.achieved:

time.sleep(5)

operation = consumer.operations.get(operation)

print(f"Uploaded and listed: {file_path}")Output:

After the add step, all recordsdata are chunked, embedded, listed, and prepared for retrieval. If the File Search Retailer incorporates each paperwork and pictures, Gemini can retrieve related context from each sources whereas answering consumer questions.

Ask Questions Concerning the File

As soon as your recordsdata are listed, Gemini can reply questions utilizing the uploaded paperwork and pictures as context. It searches the File Search Retailer, retrieves essentially the most related chunks, and makes use of them to generate a grounded response.

For a text-only use case, you may ask a query in regards to the uploaded PDF:

response = consumer.fashions.generate_content(

mannequin="gemini-3-flash-preview",

contents="Summarize what's there within the analysis paper.",

config=sorts.GenerateContentConfig(

instruments=[

types.Tool(

file_search=types.FileSearch(

file_search_store_names=[file_search_store.name]

)

)

]

)

)

print("Mannequin Response:n")

print(response.textual content)Output:

Right here, File Search is being utilized as a software inside generate_content(). The mannequin first searches your saved embeddings, pulls essentially the most related sections, after which generates a solution primarily based on that context.

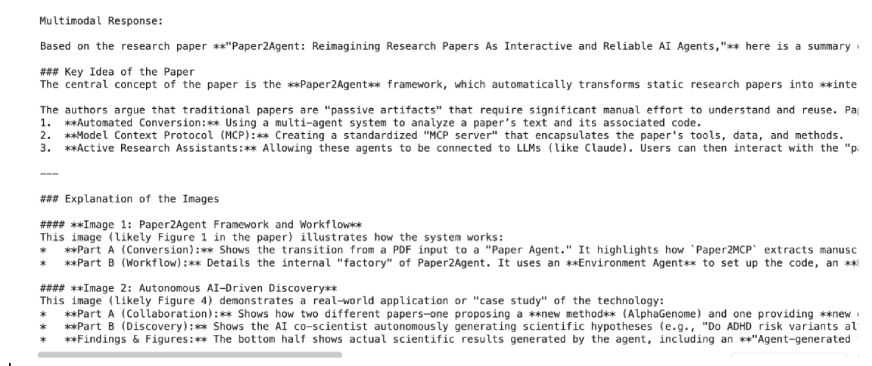

For a multimodal use case, you may ask a query that makes use of each the doc and the picture:

response = consumer.fashions.generate_content(

mannequin="gemini-3-flash-preview",

contents="""

Based mostly on the uploaded analysis paper, and the pictures,

summarize the important thing concept from the paper and clarify what the pictures exhibits.

""",

config=sorts.GenerateContentConfig(

instruments=[

types.Tool(

file_search=types.FileSearch(

file_search_store_names=[file_search_store.name]

)

)

]

)

)

print("Multimodal Response:n")

print(response.textual content)Output:

Right here, File Search is used as a software inside generate_content(). The mannequin searches the saved embeddings, retrieves essentially the most related textual content or picture context, after which generates a solution primarily based on that retrieved data.

Customise Chunking

By default, File Search decides the way to break up recordsdata into chunks, however you may management this habits for higher search precision.

operation = consumer.file_search_stores.upload_to_file_search_store(

file_search_store_name=file_search_store.identify,

file="path/to/your/file.txt",

config={

'chunking_config': {

'white_space_config': {

'max_tokens_per_chunk': 200,

'max_overlap_tokens': 20

}

}

}

) This configuration units every chunk to 200 tokens with 20 overlapping tokens for smoother context continuity. Shorter chunks give finer search outcomes, whereas bigger ones retain extra general which means helpful for analysis papers and code recordsdata.

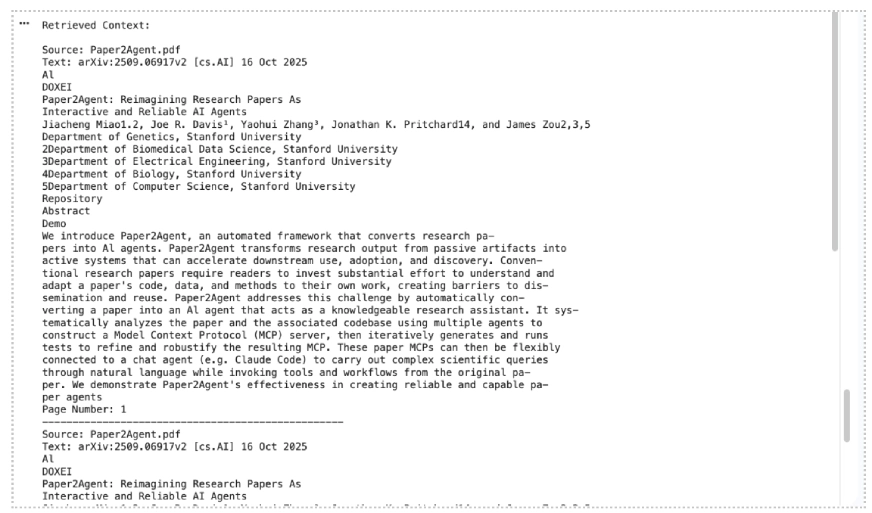

Present Citations for Retrieved Context

You may also print quotation data to examine which recordsdata or chunks Gemini used whereas producing the response. The official docs say quotation data is out there by means of grounding_metadata, and picture references could embody media quotation particulars.

grounding_metadata = response.candidates[0].grounding_metadata

print("nRetrieved Context:n")

if grounding_metadata and grounding_metadata.grounding_chunks:

for chunk in grounding_metadata.grounding_chunks:

context = chunk.retrieved_context

if context:

print("Supply:", getattr(context, "title", "Unknown"))

print("Textual content:", getattr(context, "textual content", "No textual content out there"))

if getattr(context, "page_number", None):

print("Web page Quantity:", context.page_number)

if getattr(context, "media_id", None):

print("Media ID:", context.media_id)

print("-" * 50)

else:

print("No grounding metadata discovered.")Output:

This makes the hands-on part stronger as a result of readers can see not solely the reply, but in addition the supply context utilized by Gemini.

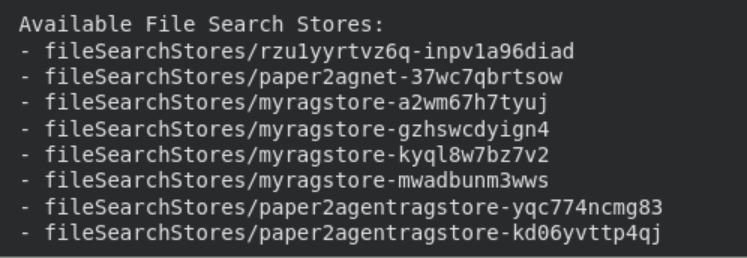

Handle Your File Search Shops

You’ll be able to simply record, view, and delete file search shops utilizing the API.

print("n Obtainable File Search Shops:")

for s in consumer.file_search_stores.record():

print(" -", s.identify)

# Get detailed information

particulars = consumer.file_search_stores.get(identify=file_search_store.identify)

print("n Retailer Particulars:n", particulars

# Delete the shop (non-compulsory cleanup)

consumer.file_search_stores.delete(identify=file_search_store.identify, config={'power': True})

print("File Search Retailer deleted.")

These administration choices assist hold your atmosphere organized. Listed information stays saved till manually deleted, whereas recordsdata uploaded by means of the non permanent Information API are mechanically eliminated after 48 hours.

Additionally Learn: 12 Issues You Can Do with the Free Gemini API

File Search Help and Limits

File Search is out there with the next Gemini fashions: Gemini 3.1 Professional Preview, Gemini 3.1 Flash-Lite Preview, Gemini 3 Flash Preview, Gemini 2.5 Professional, and Gemini 2.5 Flash-Lite.

Gemini 3 fashions permit you to mix File Search with customized instruments through perform calling. Nonetheless, File Search isn’t but supported within the Stay API and can’t be used with sure built-in instruments like Grounding with Google Search or URL Context.

File Search helps a variety of file codecs, together with PDFs, Phrase paperwork, spreadsheets, displays, JSON, CSV, HTML, XML, Markdown, YAML, code recordsdata, ZIP recordsdata, and Jupyter notebooks. For multimodal RAG, it additionally helps PNG and JPEG photos when the shop is created with fashions/gemini-embedding-2.

File Dimension and Storage Limits

| Person Tier | File Dimension Restrict | Retailer Capability Restrict |

|---|---|---|

| Free | 100 MB per file | 1 GB |

| Tier 1 | 100 MB per file | 10 GB |

| Tier 2 | 100 MB per file | 100 GB |

| Tier 3 | 100 MB per file | 1 TB |

Really helpful: Maintain every retailer beneath 20 GB for higher retrieval efficiency and decrease latency.

Relating to pricing, embeddings are charged at indexing time. Storage and query-time embeddings are free, and retrieved doc tokens are billed as regular context tokens.

Additionally Learn: The way to Entry and Use the Gemini API?

Conclusion

File Search takes the infrastructure work out of constructing RAG techniques. No exterior vector databases, no customized embedding pipelines. Simply add your recordsdata and begin querying. With the brand new multimodal help, now you can search throughout paperwork and pictures collectively. Metadata filtering helps you scope outcomes to precisely what’s related, and page-level citations make each reply traceable again to its supply. Whether or not you’re prototyping or constructing for manufacturing, File Search provides you a stable, managed basis to construct on. Get began at Google AI Studio or by means of the Gemini API docs linked within the article.

Login to proceed studying and revel in expert-curated content material.