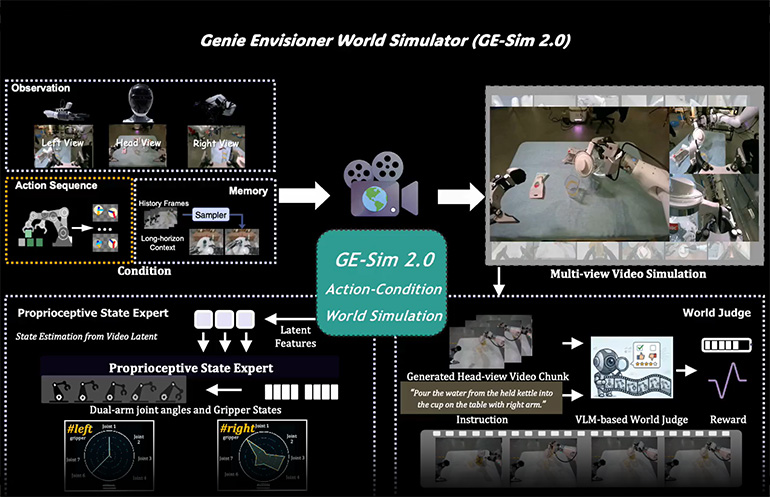

The Genie Envisioner 2.0 World Simulator uses video data to help control robots. Source: AGIBOT

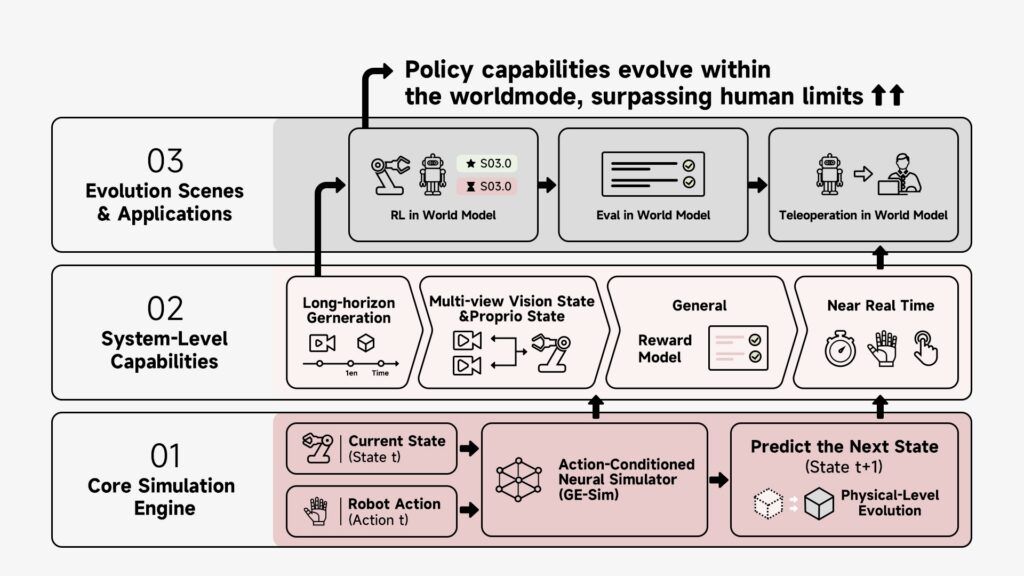

AGIBOT today announced the release of Genie Envisioner 2.0, or GE 2-Sim, which it said marks a major step forward in the evolution of world models, from world action models to fully interactive “world simulators.”

The new system introduces what the company described as a “physical evolution engine” for embodied AI. It is a model-based environment where robots can be trained, evaluated, and optimized at scale, without relying solely on costly real-world trial and error.

From understanding the world to learning inside it

In 2025, AGIBOT announced what it claimed was the industry’s first action-driven world model, Genie Envisioner. The open-source platform enabled robots to understand the world through integrated modeling of vision, language, and action, said the Shanghai-based company.

With Genie Envisioner 2.0, AGIBOT said it has shifted the paradigm further, from enabling robots to understand the world to enabling them to learn inside a world generated by models.

The company asserted that this transition reflects a broader shift in embodied AI, from representing the world to simulating the world itself. As world models evolve into stable, high-fidelity environments that respond to actions in physically consistent ways, they unlock the ability to train robots at scale in synthetic environments.

AGIBOT said it believes GE 2-Sim marks a critical inflection point toward achieving a true scaling law in embodied intelligence.

World action models can show state evolution. Source: AGIBOT

From world action models to world simulators

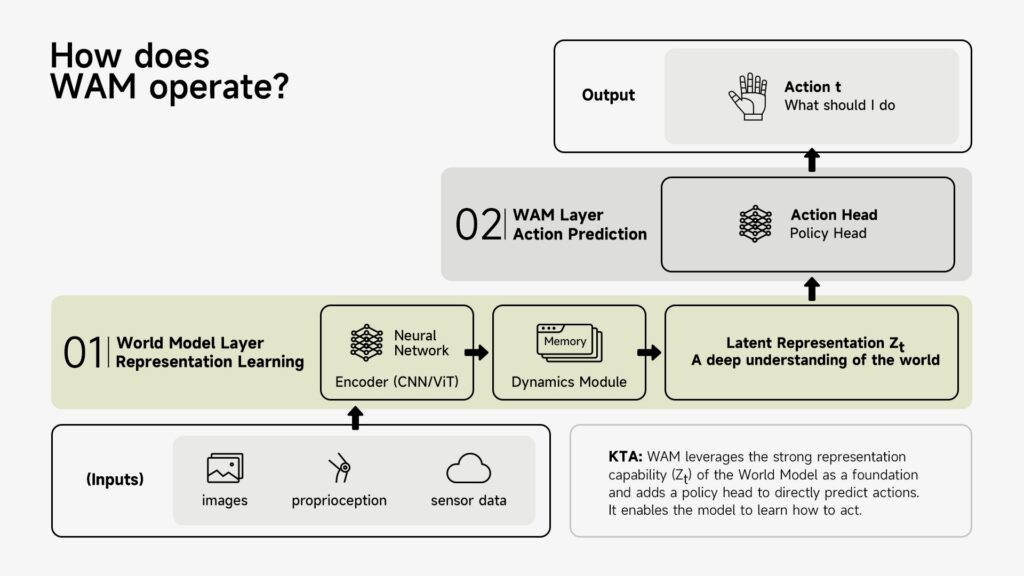

At the core of this evolution is AGIBOT’s continued development of the world action model (WAM) framework, which extends traditional world models by explicitly incorporating actions as a first-class variable.

Rather than modeling only state, WAM captures the full loop of:

- State → Action → State Evolution
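As a rough illustration of that loop, and not AGIBOT's implementation, the Python sketch below treats a world action model as a function from a state and an action to the next state, then rolls it forward under a policy. The `State` fields, `toy_world_action_model`, `rollout`, and `step_right` are all hypothetical names, and the hand-written one-dimensional pushing rule stands in for what would be a learned model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class State:
    gripper_x: float  # hypothetical 1-D gripper position
    object_x: float   # hypothetical 1-D object position


Action = float  # hypothetical action: commanded gripper displacement


def toy_world_action_model(state: State, action: Action) -> State:
    """Stand-in for a learned model: predict the next state from state and action."""
    new_gripper = state.gripper_x + action
    # The gripper pushes the object once it reaches it; it never pulls it back.
    new_object = max(state.object_x, new_gripper)
    return State(new_gripper, new_object)


def rollout(
    model: Callable[[State, Action], State],
    policy: Callable[[State], Action],
    state: State,
    steps: int,
) -> List[State]:
    """Run the state -> action -> next-state loop and record every state."""
    trajectory = [state]
    for _ in range(steps):
        action = policy(state)        # the policy picks an action from the state
        state = model(state, action)  # the model predicts how the state evolves
        trajectory.append(state)
    return trajectory


def step_right(state: State) -> Action:
    """Hypothetical policy: always move the gripper 0.5 units to the right."""
    return 0.5


trajectory = rollout(toy_world_action_model, step_right, State(0.0, 1.0), steps=4)
```

Because the action is an explicit input, the same model can be queried with different policies to compare how the predicted state evolves under each.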

This enables world models to serve as a foundational layer for both policy learning and action generation. Building on this foundation, AGIBOT has progressively developed a series of systems:

- EnerVerse: Extends embodied environments into a computable 4D world model

- Genie Envisioner Act (GE-Act): Bridges world representation and action trajectory generation

- Act2Goal: Enables long-horizon, goal-driven control

While these advances allowed world models to support policy learning, real-world deployment exposed key limitations: heavy reliance on physical environments, costly evaluation, and data scalability constraints.

This led to a fundamental realization. The next breakthrough lies not in stronger representation, but in transforming world models into fully functional simulators.

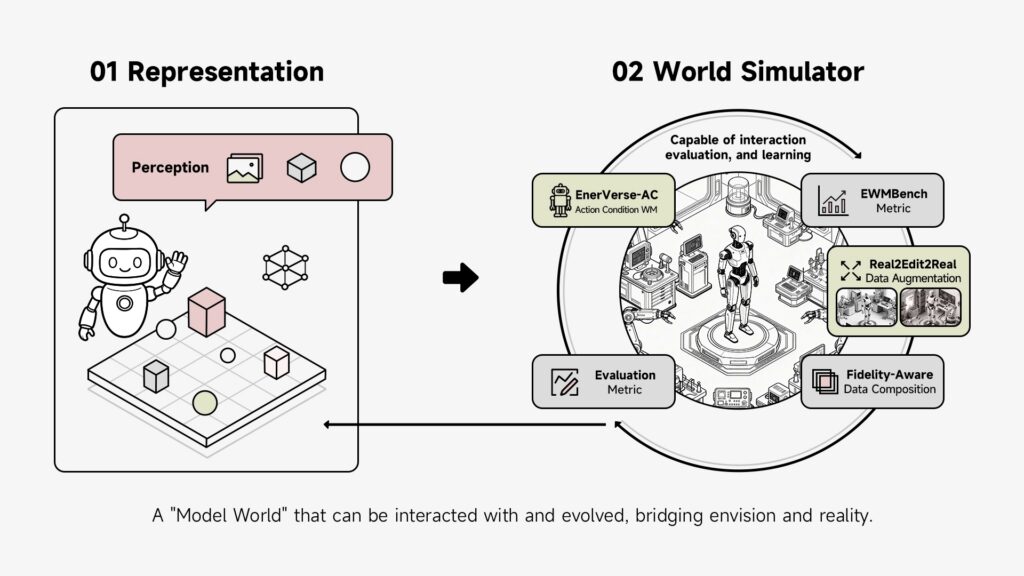

Making the world runnable: Toward interactive simulation

To enable this transition, AGIBOT introduces a set of new capabilities that push world models toward interactive simulation:

- EnerVerse-AC: Introduces action-conditioned world modeling for future prediction

- Genie Envisioner Sim (GE-Sim): A neural simulator for closed-loop policy evaluation

- EWMBench: A comprehensive benchmark evaluating simulation fidelity, action correctness, and semantic alignment
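To make "closed-loop" concrete, here is a minimal Python sketch of policy evaluation inside a simulator: at every step the policy sees the simulator's latest output and the simulator responds to the policy's action. It is an assumption-laden toy, not GE-Sim's interface. `simulate_step` is a hypothetical hand-written stand-in for a neural simulator, and the policies, tolerance, and horizon are illustrative choices.

```python
from typing import Callable, Sequence


def simulate_step(position: float, action: float) -> float:
    """Hypothetical stand-in for a neural simulator: next observation after an action."""
    clipped = max(-0.2, min(0.2, action))  # assumed actuator limit per step
    return position + clipped


def evaluate_closed_loop(
    policy: Callable[[float, float], float],
    starts: Sequence[float],
    goal: float,
    horizon: int = 20,
    tolerance: float = 0.05,
) -> float:
    """Return the fraction of episodes that end within `tolerance` of the goal."""
    successes = 0
    for position in starts:
        for _ in range(horizon):
            action = policy(position, goal)             # policy reacts to the simulated observation
            position = simulate_step(position, action)  # simulator reacts to the action
        if abs(position - goal) <= tolerance:
            successes += 1
    return successes / len(starts)


def proportional_policy(observation: float, goal: float) -> float:
    """Hypothetical policy: move half of the remaining distance each step."""
    return 0.5 * (goal - observation)


def timid_policy(observation: float, goal: float) -> float:
    """Hypothetical weak policy: creep toward the goal 0.01 units per step."""
    return 0.01 if goal > observation else -0.01


starts = [0.0, 0.5, 2.0, -1.0]
good_rate = evaluate_closed_loop(proportional_policy, starts, goal=1.0)
weak_rate = evaluate_closed_loop(timid_policy, starts, goal=1.0)
```

In this toy setup the proportional policy reaches the goal from every start while the timid one never does, which is the kind of comparison a simulator makes possible without running either policy on hardware.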

At the same time, AGIBOT establishes a new data and training paradigm:

- Real2Edit2Real: Real-world data becomes editable and extensible, significantly increasing scale and diversity

- Fidelity-Aware Data Composition: Combines real and generated data to balance realism and generalization
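The announcement does not say how real and generated data are combined. One plausible reading, sketched below in Python purely as an assumption, is to keep all real episodes, admit only generated episodes above a fidelity threshold, and cap the generated share of the final set. The `Episode` type, the `fidelity` score, and both thresholds are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Episode:
    name: str
    is_real: bool
    fidelity: float  # hypothetical score in [0, 1]; real data is treated as 1.0


def compose_dataset(
    real: List[Episode],
    generated: List[Episode],
    min_fidelity: float = 0.7,
    max_generated_fraction: float = 0.5,
) -> List[Episode]:
    """Keep all real episodes, then add the highest-fidelity generated ones up to a cap."""
    if not 0.0 <= max_generated_fraction < 1.0:
        raise ValueError("max_generated_fraction must be in [0, 1)")
    # Drop generated episodes below the fidelity threshold, best first.
    candidates = sorted(
        (e for e in generated if e.fidelity >= min_fidelity),
        key=lambda e: e.fidelity,
        reverse=True,
    )
    # Largest generated count g such that g / (len(real) + g) <= max_generated_fraction.
    cap = int(len(real) * max_generated_fraction / (1.0 - max_generated_fraction))
    return list(real) + candidates[:cap]


real_episodes = [Episode("r1", True, 1.0), Episode("r2", True, 1.0)]
generated_episodes = [
    Episode("g1", False, 0.9),
    Episode("g2", False, 0.6),
    Episode("g3", False, 0.8),
    Episode("g4", False, 0.75),
]
dataset = compose_dataset(real_episodes, generated_episodes)
names = [e.name for e in dataset]
```

Raising `max_generated_fraction` trades realism for diversity, while raising `min_fidelity` does the opposite, which is one way to picture the balance the company describes.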

Together, these developments transform world models from representation systems into environment-level infrastructure.

A world simulator can make simulation more interactive and productive. Source: AGIBOT

Genie Envisioner 2.0: A ‘physical evolution engine’

Genie Envisioner 2.0 represents the culmination of this evolution: a system that is not just generative, but operational. Key capabilities include:

Action-driven world dynamics

The system responds directly to robot actions, generating high-fidelity environmental changes that follow physical and semantic constraints. The world becomes a process shaped by interaction, rather than a static representation.

Long-horizon temporal modeling

Supports minute-level continuous simulation, enabling stable generation of full task sequences rather than fragmented clips.

Embodied spatial consistency

Unifies multi-view perception, cross-view 3D consistency, and robot proprioception into a single representation, transforming perception from images into a fully interactive embodied world.

Built-in evaluation and reward modeling

A native general reward model enables self-evaluation and optimization based on textual feedback, supporting reinforcement learning in the world model without human-designed rewards.

Toward real-time interaction

With improved inference efficiency, GE 2-Sim approaches real-time operation, enabling:

- Eval in World Model

- RL in World Model

- Teleoperation in World Model

This marks the transition of world models from offline tools to interactive system environments.

The core simulation engine can provide data to feed AI. Source: AGIBOT

A paradigm shift: When models become worlds

As these capabilities converge, embodied AI is undergoing a fundamental transformation, from “using models to understand the world” to “learning and making decisions inside model-generated worlds.”

On one side, the integration of WAM and vision-language-action (VLA) models enables a shift from reactive control to generative, predictive decision-making.

On the other, world simulators allow robots to explore, iterate, and optimize at scale, limited not by real-world data availability, but by the fidelity of the simulation itself.

When these two trajectories converge, robots move beyond replicating human demonstrations to continuously exploring, adapting, and evolving inside model-generated environments.

Toward a new foundation for embodied intelligence

AGIBOT envisions world models evolving from tools for understanding, to platforms for learning, and ultimately to infrastructure that drives continuous evolution.

When models become worlds, reality is no longer the only training ground. When worlds can be built, learning can be scaled. And when evolution happens inside models, the boundaries of embodied AI can be fundamentally redefined.

Editor’s note: At the 2026 Robotics Summit & Expo on May 27 and 28 in Boston, there will be sessions on embodied and physical AI. Registration is now open.

The post AGIBOT unveils Genie Envisioner 2.0 to advance world models into scalable simulators for embodied AI appeared first on The Robot Report.