Artificial intelligence is evolving quickly. New models emerge almost daily, each one trying to be the best. In this sea of similar models, something new shows up every once in a while. One such model is the new Mistral Small 4. It is an innovative AI model that aims to be more than just another option in the plethora of AI models: it aims to be the model of your choice. No more juggling multiple models for chatting, coding, and multifaceted reasoning. Mistral Small 4 packages them into a single powerful and efficient model.

It isn’t just about convenience. Mistral Small 4 uses a Mixture-of-Experts (MoE) design to deliver the performance of a 119-billion-parameter model while using only a fraction of that compute for any one task. This means it is fast, inexpensive, and can even understand images. In this guide, we’ll dissect what makes Mistral Small 4 tick, see how it compares to the competition, and walk through some real-life scenarios in which you can use it.

What’s New With Mistral Small 4?

Mistral Small 4 is unique because it combines three different capabilities in one single, simple model. Previously, you might have had one AI to hold a conversation, another for analytical work, and a third to write code. Mistral Small 4 is built to handle all of that. It can serve as a general chat assistant, a coding expert, and a reasoning engine, all from the same endpoint.

Its Mixture-of-Experts (MoE) architecture, built around a pool of 128 experts, is the key to its efficiency. The model does not need all of them working on every problem; instead, it picks the top 4 experts for the task at hand. This means that although the total number of parameters is huge (119 billion), only a small share, between 6 and 6.5 billion, is activated at a time. This makes it fast and lowers the cost of operation.
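To make the "top 4 of 128" idea concrete, here is a minimal sketch of top-k expert routing for a single token. It is not Mistral's implementation: the random router scores and the choice to apply a softmax over only the selected experts are assumptions made for illustration, and `route_token` is a hypothetical helper name.

```python
import math
import random

NUM_EXPERTS = 128  # expert count quoted in the article
TOP_K = 4          # experts activated at a time, per the article


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token.

    Returns a list of (expert_index, weight) pairs. The weights are a softmax
    over the selected logits only, which is one common convention (assumed here).
    """
    # Indices of the top_k largest router scores.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Softmax over the selected scores (subtract the max for numerical stability).
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Stand-in router scores; a real router computes these from the token's hidden state.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(logits)
print(selected)
print(f"experts used: {len(selected)} of {NUM_EXPERTS}")
```

Only the selected experts run for that token, which is why roughly 6 to 6.5 billion of the 119 billion parameters (about 5 percent) do the work at any one time.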

The main features are as follows:

- Multimodal Input: It understands both images and text, thanks to its Pixtral vision component.

- Long Context Window: It can handle up to 256,000 tokens at a time, which makes it suitable for analysing long documents.

- Open and Accessible: The model weights are released under the Apache 2.0 license, which permits commercial use. The model is open-weight and available via APIs and partner platforms.

- Performance Optimised: Mistral claims a 40 percent reduction in end-to-end completion time and three times more requests per second than its predecessor.

Under the Hood: Architecture and Specs

Mistral Small 4 combines a text decoder with a vision encoder. When given an image, the Pixtral vision encoder processes it and passes the information to the text model, which then generates a response. This design lets the model combine visual information with textual prompts.

The following are some of the architectural details:

- Decoder Stack: 36 transformer layers, a hidden size of 4096, and 32 attention heads.

- MoE Details: 128 experts, 4 of which are activated per token, plus a shared expert component for consistency.

- Vision Component: The Pixtral vision model has 24 layers and processes images with a patch size of 14.

- Vocabulary: The model uses the Tekken tokenizer with a large vocabulary of 131,072 tokens to support multiple languages and complex instructions.

Although the number of active parameters is low, the total size of the model determines the memory requirements. The 119B-parameter model has a large VRAM requirement: the 4-bit quantised version alone takes around 60 GB, and a 16-bit version takes almost 240 GB. This does not include the memory required for the KV cache in long-context tasks.
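The memory figures above follow from simple arithmetic: total parameters multiplied by bytes per parameter. The sketch below reproduces them under the assumption that 1 GB means 10^9 bytes and that only the weights are counted (no KV cache, activations, or framework overhead). `weight_memory_gb` is a hypothetical helper name.

```python
TOTAL_PARAMS = 119e9  # total parameter count quoted in the article


def weight_memory_gb(num_params, bits_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for bits in (4, 16):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
```

This gives 59.5 GB at 4 bits and 238 GB at 16 bits, matching the "around 60 GB" and "almost 240 GB" figures. All experts have to be resident in memory even though only a few run per token, which is why the total parameter count, not the active one, drives VRAM needs.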

Benchmarks and Evaluation

Mistral Small 4 is not merely a smart design; it has numbers to back its performance claims. Mistral focuses on both quality and efficiency: the model delivers high-quality answers in very few words. The low latency and low cost seen in practice follow directly from this emphasis on shorter outputs.

Efficiency: High Scores with Less Talk

Across various benchmarks, a consistent trend emerges: Mistral Small 4 matches or even beats state-of-the-art models while using far fewer words.

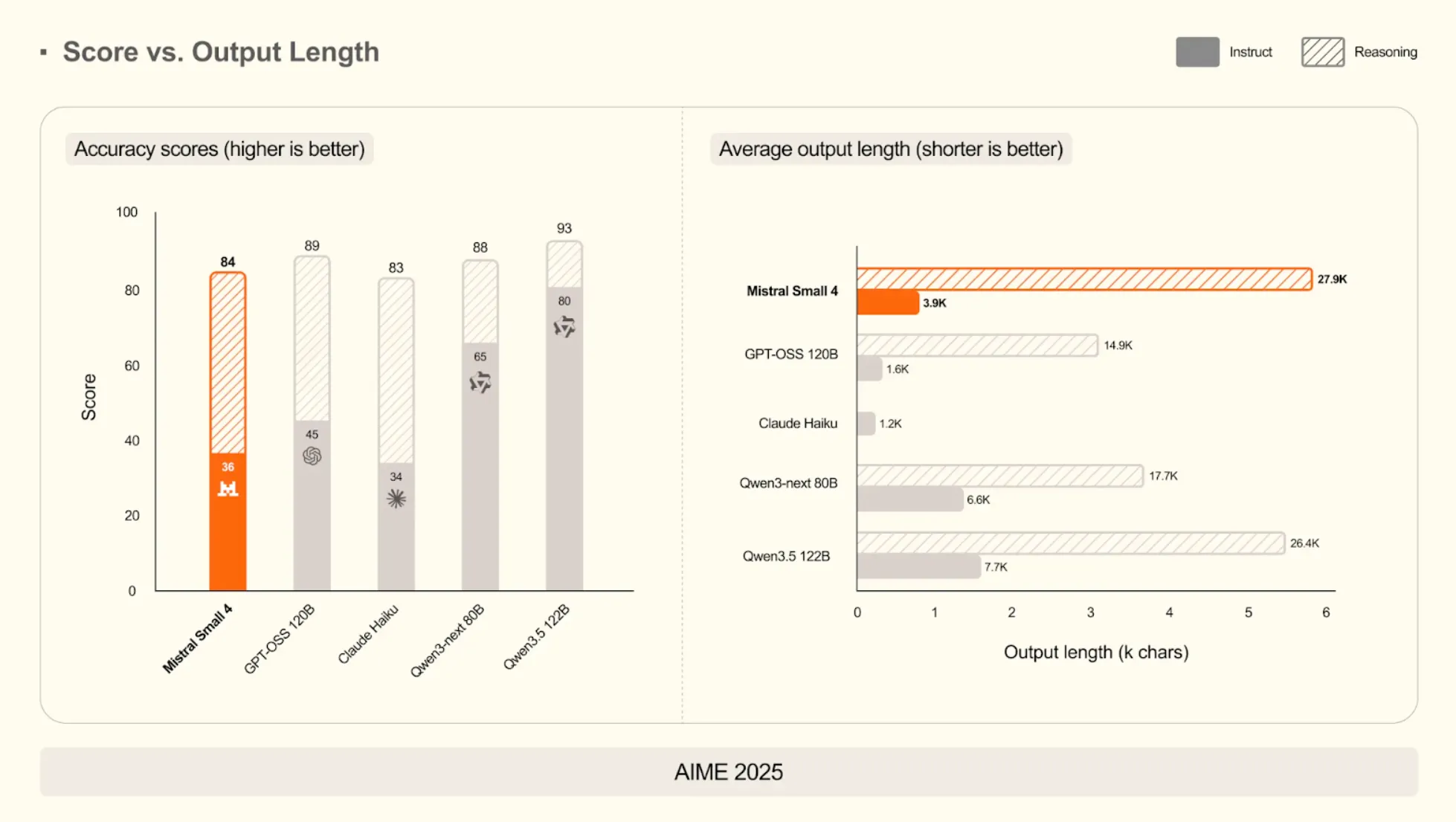

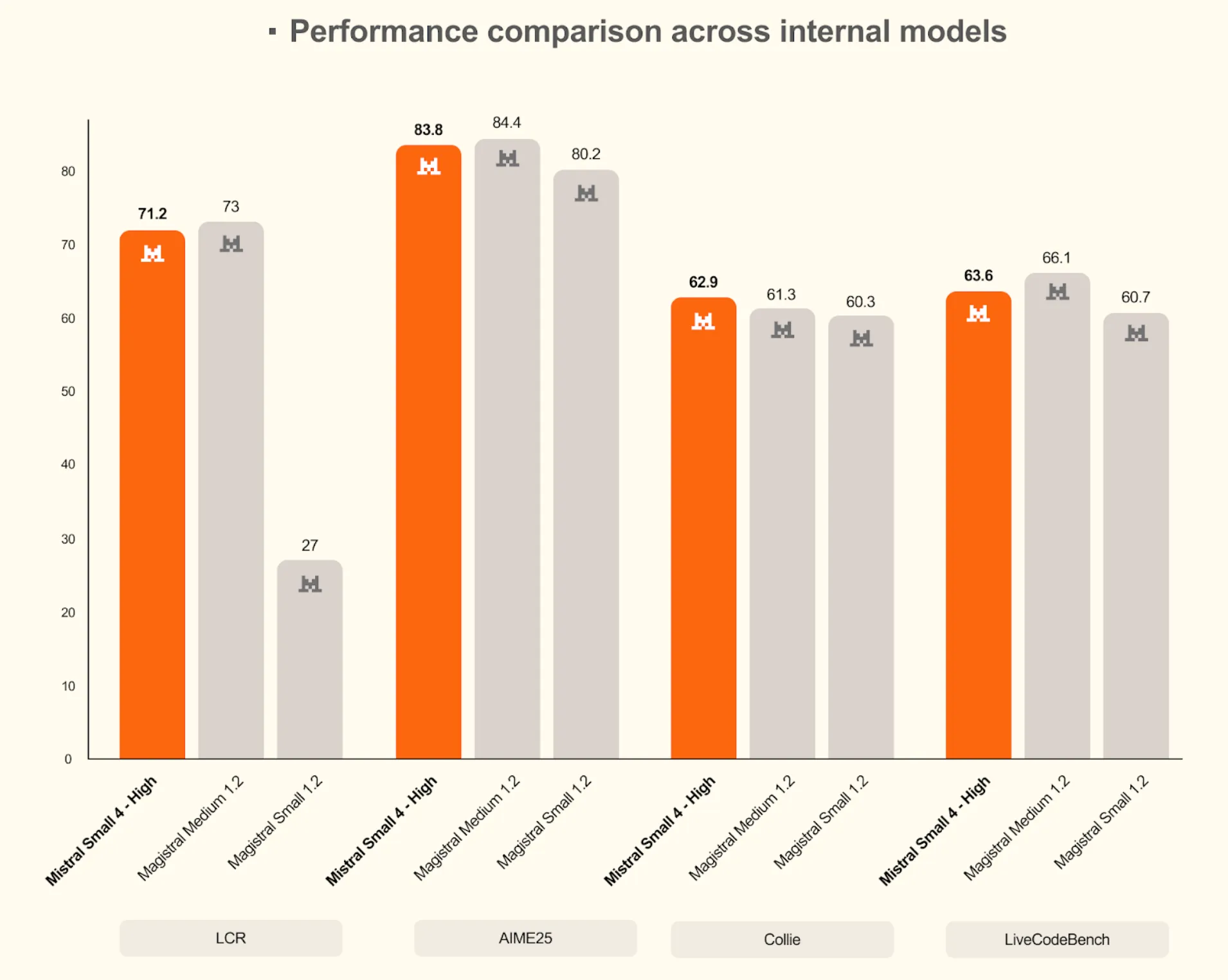

On Mathematical Reasoning (AIME 2025)

In reasoning mode, the model scores 93, equal to the larger Qwen3.5 122B. Yet its average output length in instruct mode is just 3,900 characters, a fraction of the nearly 15,000 characters of GPT-OSS 120B.

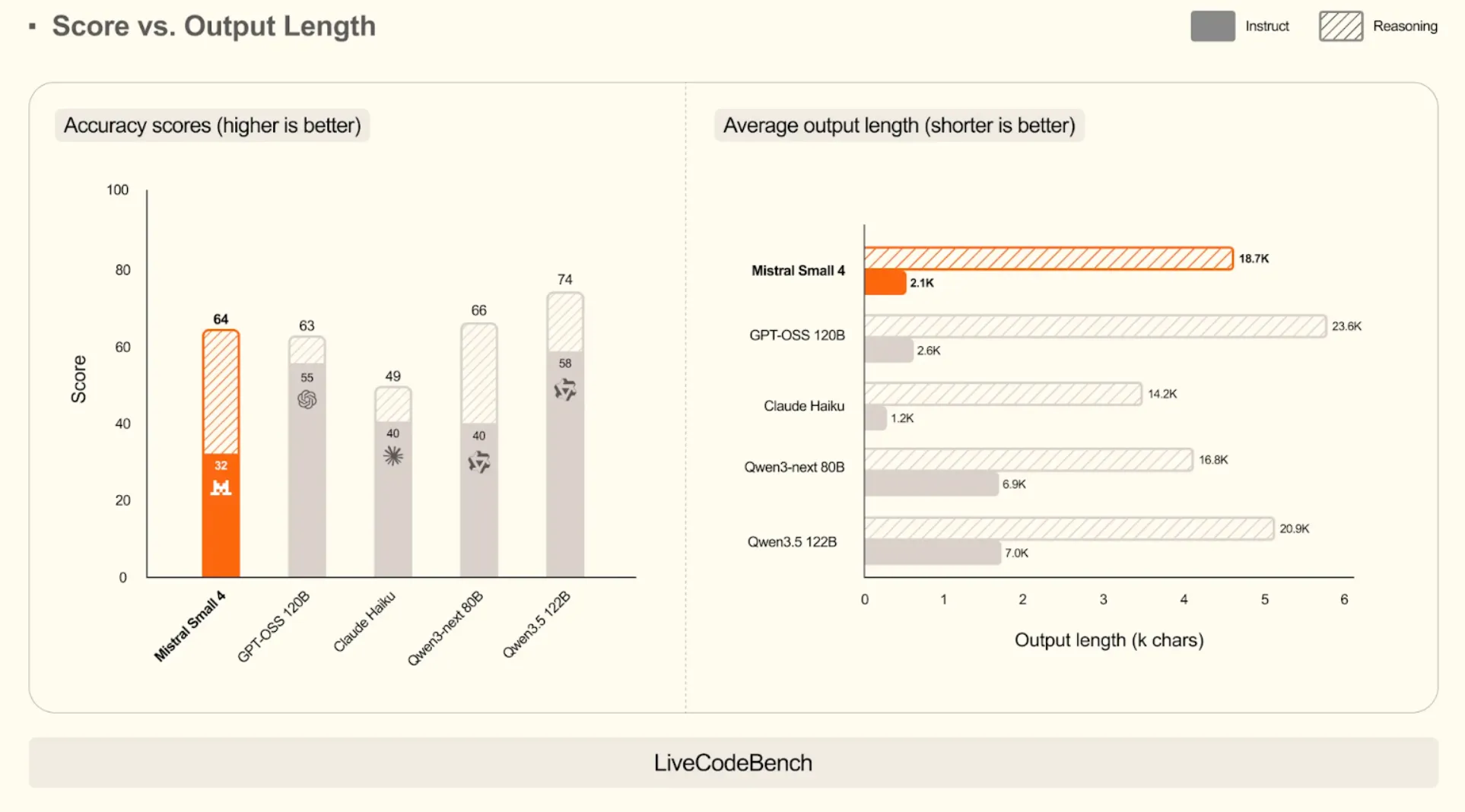

On Coding Tasks (LiveCodeBench)

The model posts a competitive score of 64, marginally beating GPT-OSS 120B (63). It is also efficient: its output is more than 10 times shorter (2.1k characters vs. 23.6k characters), because it produces correct code without unnecessary verbosity.
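As a quick sanity check on the output-length claims, the ratios can be computed from the character counts quoted in this article. The 15,000 figure is the article's rounded "nearly 15,000", so the AIME ratio is approximate. `length_ratio` is a hypothetical helper name.

```python
# Average output lengths in characters, as quoted in the article.
output_chars = {
    "AIME 2025": {"Mistral Small 4": 3_900, "GPT-OSS 120B": 15_000},
    "LiveCodeBench": {"Mistral Small 4": 2_100, "GPT-OSS 120B": 23_600},
}


def length_ratio(lengths):
    """How many times longer the GPT-OSS 120B output is than Mistral Small 4's."""
    return lengths["GPT-OSS 120B"] / lengths["Mistral Small 4"]


for bench, lengths in output_chars.items():
    print(f"{bench}: GPT-OSS 120B output is ~{length_ratio(lengths):.1f}x longer")
```

Since latency and cost both scale with the amount of generated text, a ratio above 10 on coding tasks is where the practical savings come from.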

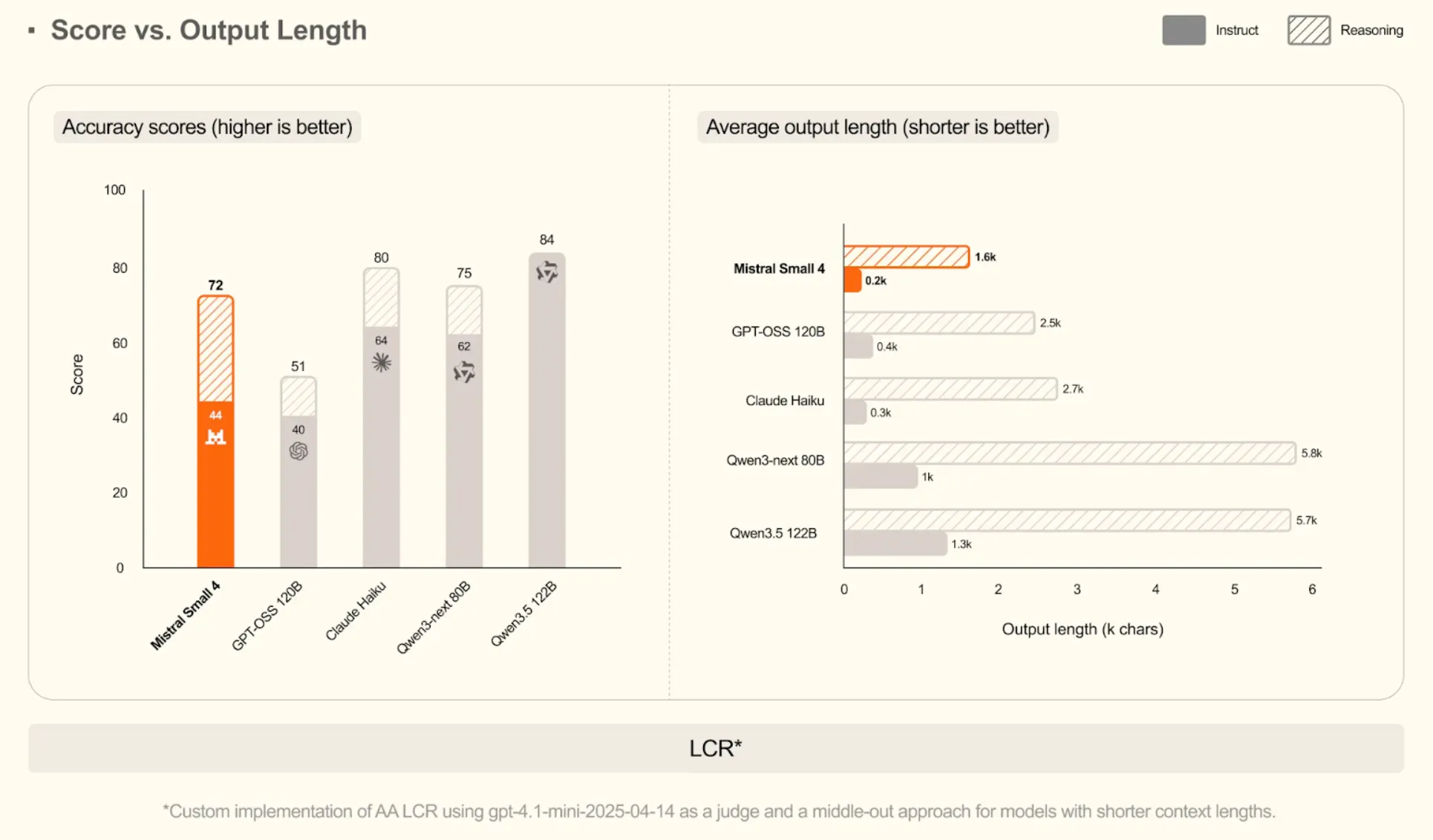

On Long-Context Reasoning (LCR)

Mistral Small 4 earns a high score of 72. It does so with an extremely short output of only 200 characters in instruct mode. This shows a remarkable ability to extract answers even from huge volumes of text.

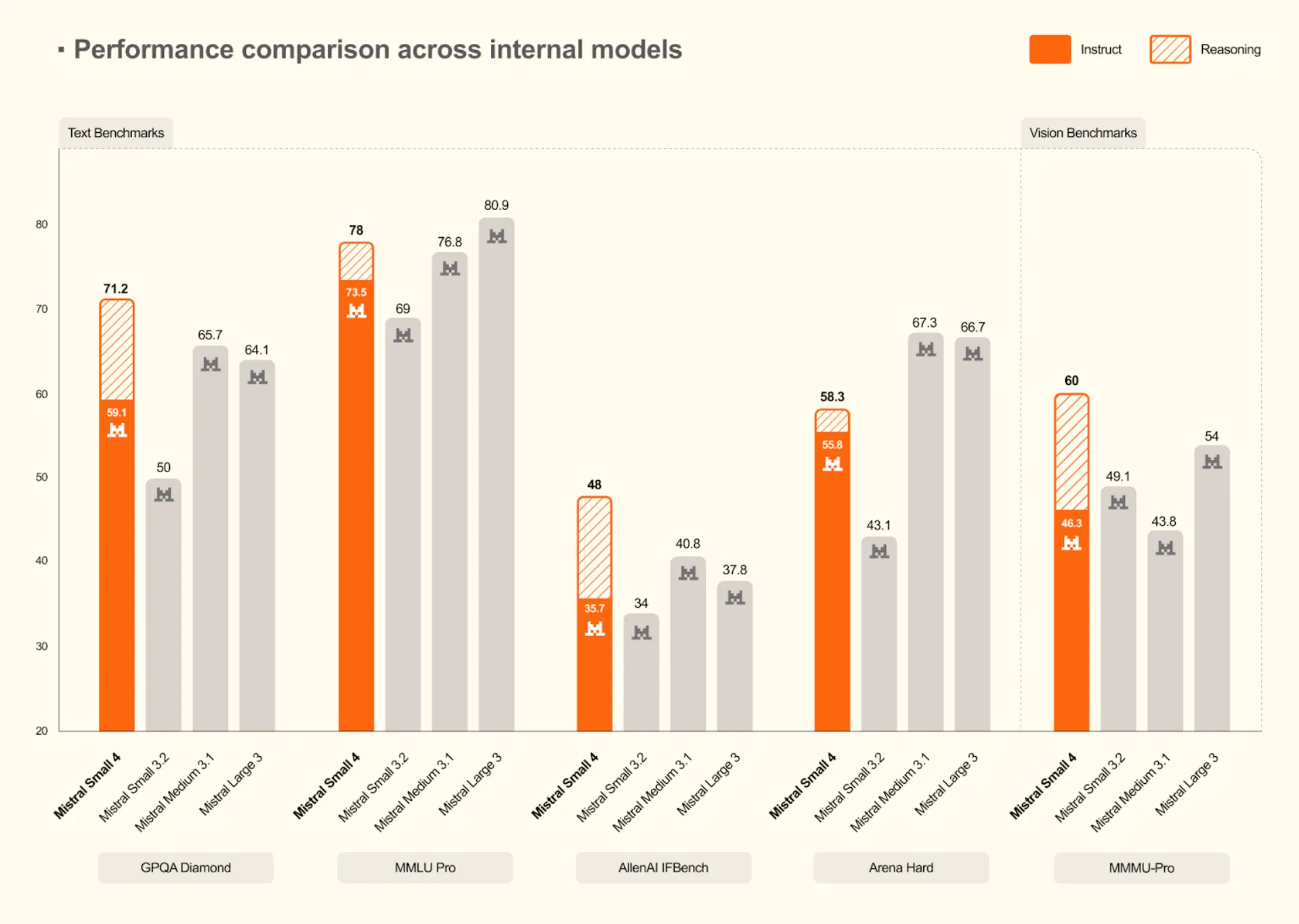

A Generational Leap for Mistral

Comparisons with the other models show that Mistral Small 4 is a big improvement over earlier Mistral models. It consistently sets new internal bests on text and vision benchmarks.

- Better Reasoning: It tops the Mistral models on difficult text tests, with a score of 71.2 on GPQA Diamond and 78 on MMLU Pro.

- Vision Capabilities: The model also performs better on vision tasks, with a score of 60 on MMMU-Pro, higher than earlier models such as Mistral Small 3.2 and Medium 3.1.

Mistral Small 4 is very competitive with, and at times even outperforms, larger internal models such as Magistral Medium 1.2 on challenging benchmarks when its high-reasoning mode is used. This supports Mistral’s claim that Small 4 offers top-tier reasoning and coding skills in a convenient package.

Hands-on with Mistral Small 4: Practical Tasks

Before going hands-on, let’s see how to access Mistral Small 4.

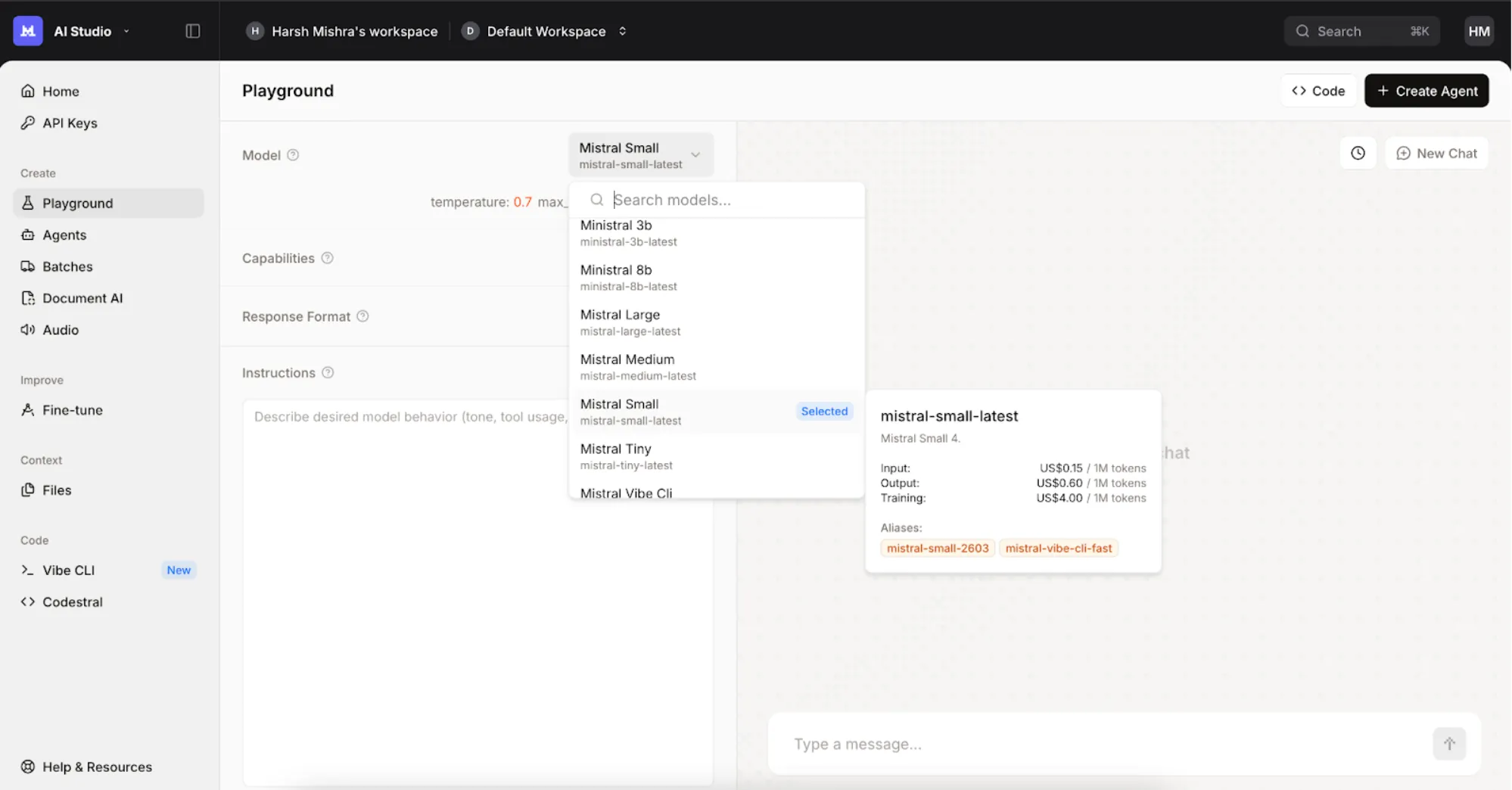

- First, go to https://console.mistral.ai/ and sign up using your mobile number.

- Now head over to the “Playground”.

- Then select ‘Mistral Small Latest’ from the models list.

- You are ready to use the model.
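The same model can also be reached programmatically. The sketch below builds a request for Mistral's chat completions endpoint using only the Python standard library. It assumes that the API alias `mistral-small-latest` corresponds to the 'Mistral Small Latest' entry in the Playground and that an API key is stored in a `MISTRAL_API_KEY` environment variable; check both against your own console. `build_request` and `ask` are hypothetical helper names.

```python
import json
import os
import urllib.request

API_URL = "https://api.mistral.ai/v1/chat/completions"
MODEL = "mistral-small-latest"  # assumption: alias for 'Mistral Small Latest'


def build_request(prompt, api_key, model=MODEL):
    """Build (but do not send) a chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt, api_key):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("MISTRAL_API_KEY")
    if key:
        print(ask("Explain Mixture-of-Experts in two sentences.", key))
    else:
        print("Set MISTRAL_API_KEY to send a real request.")
```

Keeping request construction separate from sending makes it easy to inspect the payload before spending any tokens.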

Benchmarks and numbers tell part of the story, but the best way to understand a model is to see it perform. We tested Mistral Small 4 on three common, real-world tasks to evaluate its reasoning, coding, and writing skills.

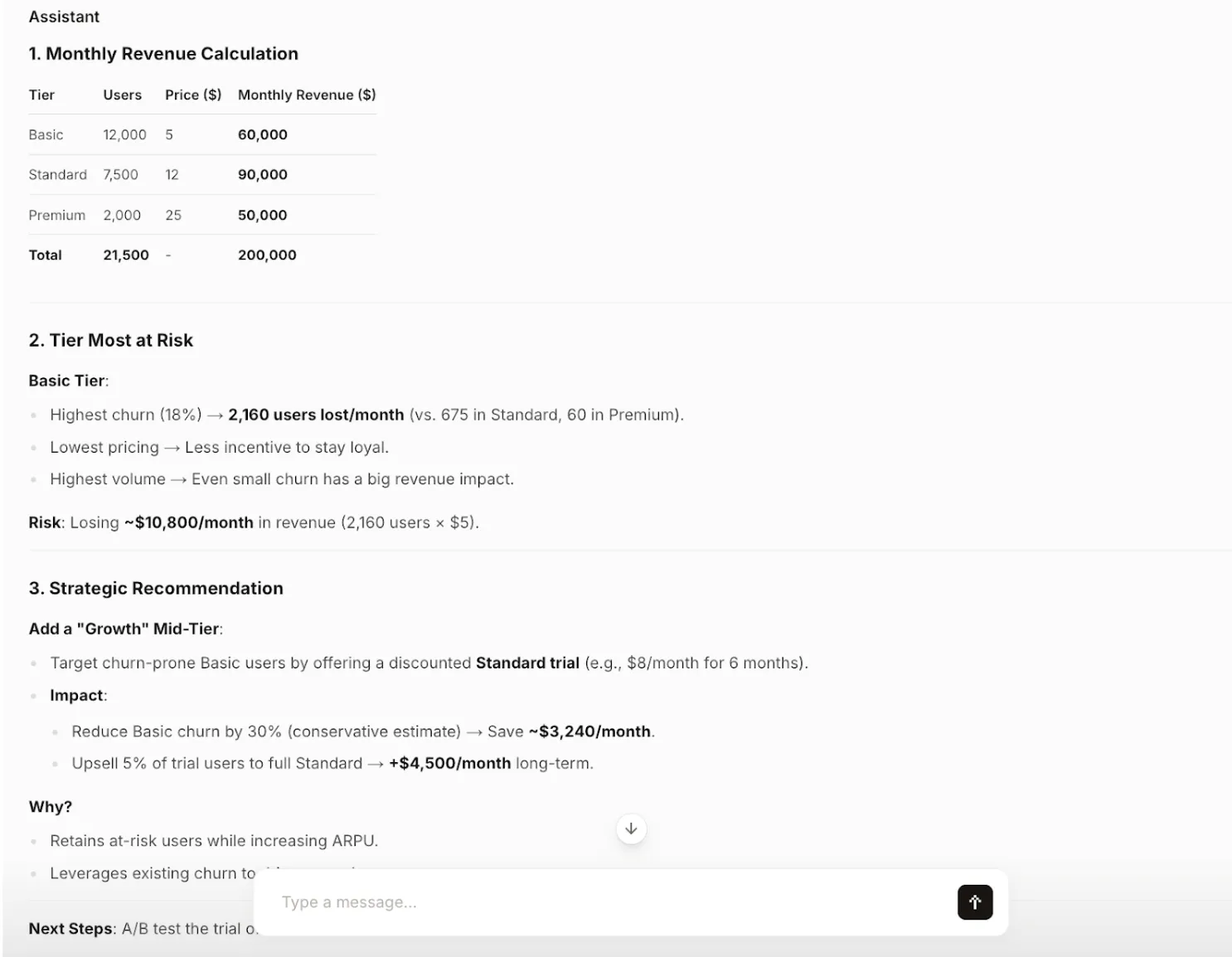

Task 1: Structured Business Reasoning

Objective: Test the model’s ability to perform calculations, identify risks, and provide a strategic recommendation based on business data, all while maintaining a structured and concise format.

Prompt:

You are a product strategist.

A SaaS company has three subscription tiers:

Basic: 12,000 users at $5/month with 18% churn

Standard: 7,500 users at $12/month with 9% churn

Premium: 2,000 users at $25/month with 3% churn

Tasks:

- Calculate monthly revenue for each tier

- Identify which tier is most at risk

- Recommend one strategic change

- Keep the answer structured and concise

Output:

Analysis: The model correctly performs the calculations and identifies the Basic tier as the primary risk due to its high churn rate. The strategic recommendation is not only creative but also backed by clear, data-driven reasoning, and it includes actionable next steps. The output is well structured and concise, following all instructions.
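The arithmetic behind this task is easy to verify independently. The sketch below computes monthly revenue per tier from the figures in the prompt. "Revenue at risk" (revenue multiplied by churn) is a simple metric introduced here for illustration, and it assumes the churn rates are monthly, which the prompt does not state. `summarise` is a hypothetical helper name.

```python
# Tier data copied from the prompt.
tiers = {
    "Basic": {"users": 12_000, "price": 5, "churn": 0.18},
    "Standard": {"users": 7_500, "price": 12, "churn": 0.09},
    "Premium": {"users": 2_000, "price": 25, "churn": 0.03},
}


def summarise(tiers):
    """Return monthly revenue and revenue at risk (revenue * churn) per tier."""
    rows = {}
    for name, t in tiers.items():
        revenue = t["users"] * t["price"]
        rows[name] = {"revenue": revenue, "revenue_at_risk": revenue * t["churn"]}
    return rows


summary = summarise(tiers)
for name, row in summary.items():
    print(f"{name:<9} revenue ${row['revenue']:>7,}  at risk ${row['revenue_at_risk']:>7,.0f}")

most_at_risk = max(summary, key=lambda n: summary[n]["revenue_at_risk"])
print("Most at risk:", most_at_risk)
```

Monthly revenue comes to $60,000 for Basic, $90,000 for Standard, and $50,000 for Premium. Under this metric Basic has the most revenue exposed to churn, which is consistent with the model's assessment.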

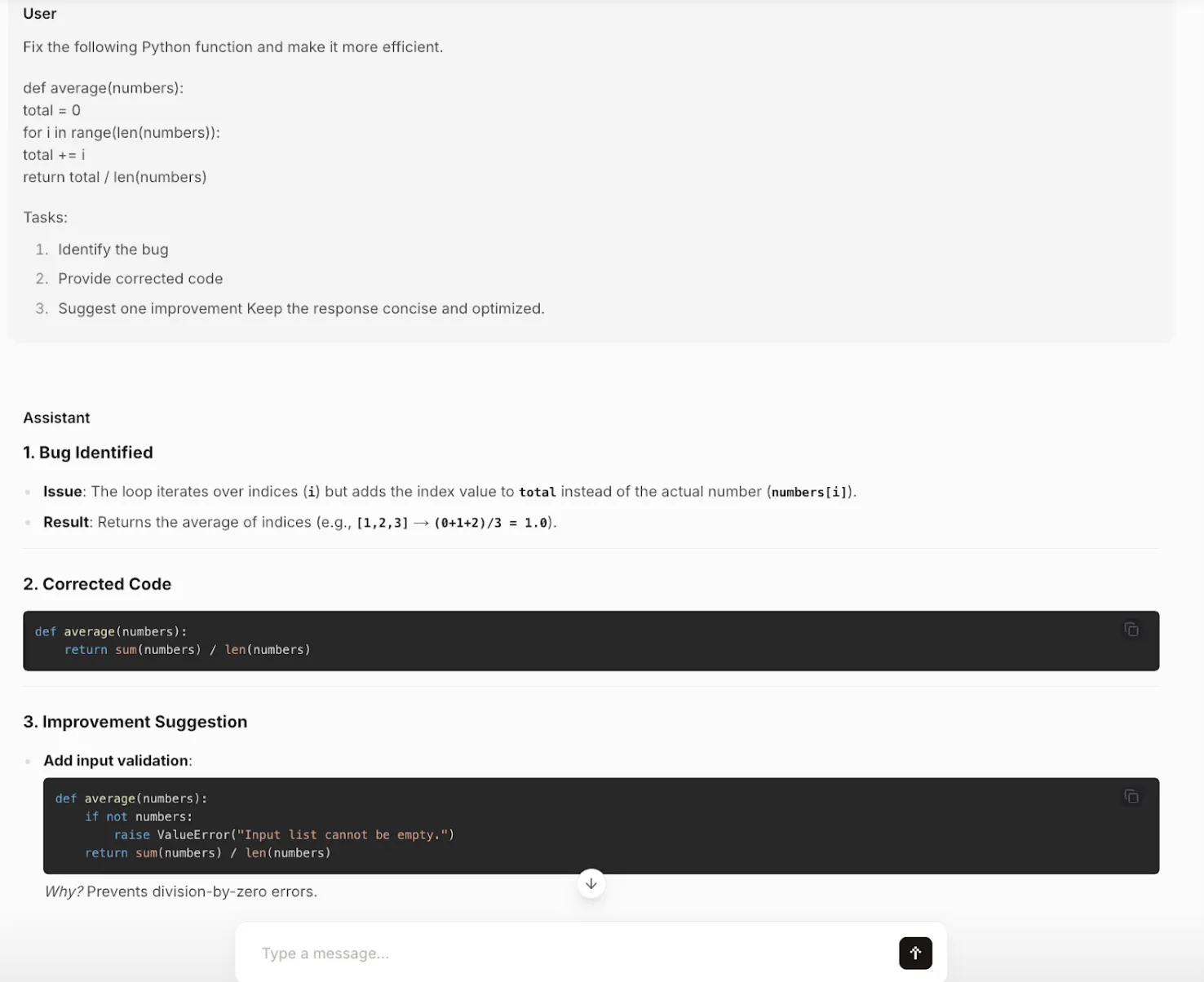

Task 2: Efficient and Clean Coding

Objective: Test the model’s coding abilities, specifically its capacity to identify a logical bug, provide a corrected and more efficient solution, and suggest further improvements.

Prompt:

Fix the following Python function and make it more efficient.

    def average(numbers):
        total = 0
        for i in range(len(numbers)):
            total += i
        return total / len(numbers)

Tasks:

- Identify the bug

- Provide corrected code

- Suggest one improvement

Keep the response concise and optimized.

Output:

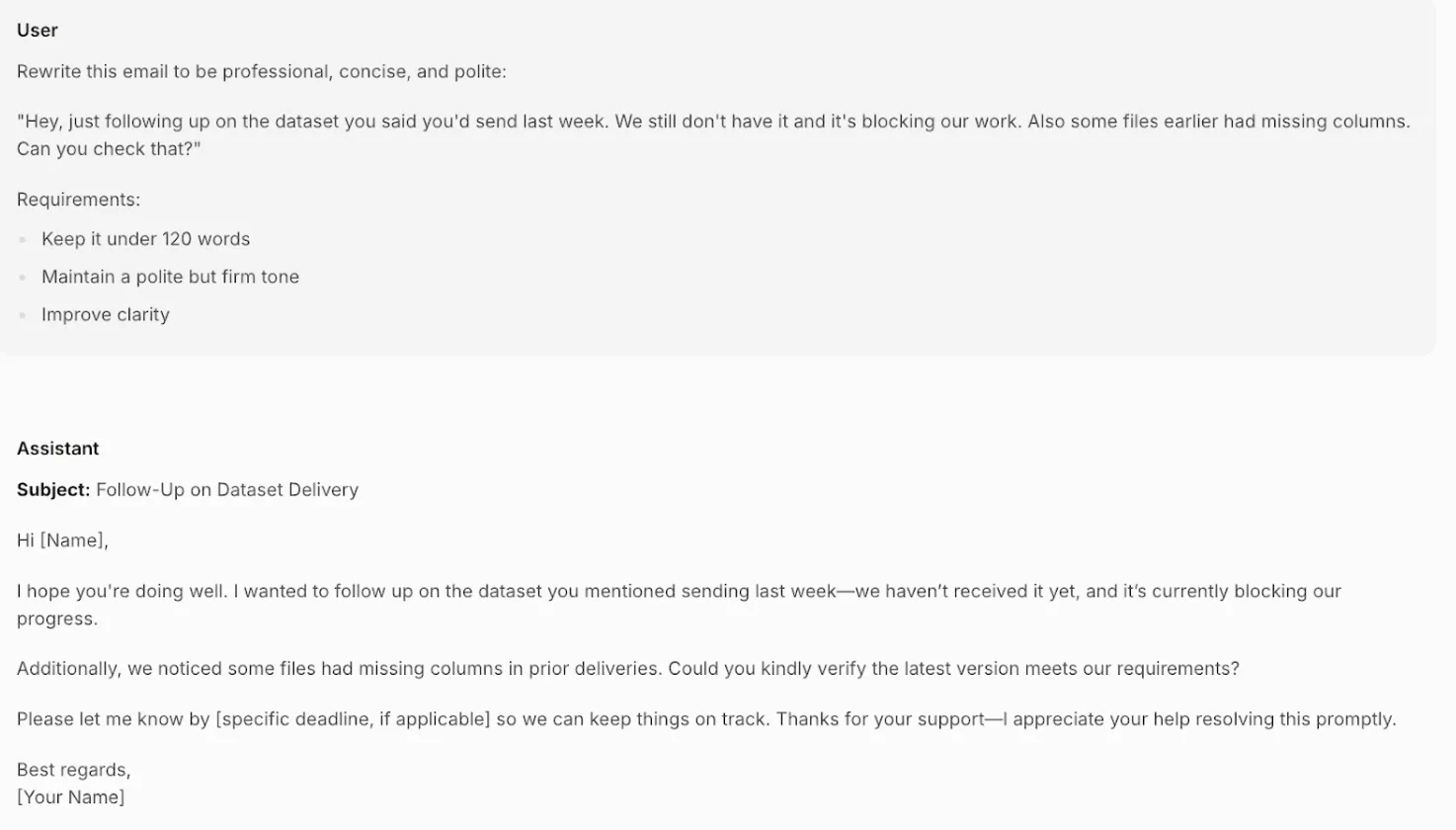
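The model's answer appears only as a screenshot, so the code below is not its output. It is a minimal sketch of the kind of fix the prompt asks for: the bug is that the loop adds the index `i` rather than the list element, and the empty-list guard is one possible suggested improvement.

```python
def average(numbers):
    """Return the arithmetic mean of a non-empty sequence of numbers."""
    if not numbers:
        # Guard against ZeroDivisionError on empty input.
        raise ValueError("average() requires at least one number")
    # The original loop summed the indices; sum() adds the values themselves.
    return sum(numbers) / len(numbers)


print(average([10, 20, 30]))  # prints 20.0
```

For comparison, the buggy version returns 1.0 for the same input, because it computes (0 + 1 + 2) / 3. In production code, `statistics.fmean` from the standard library is another option.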

Task 3: Professional Email Writing

Objective: Test the model’s real-world writing skills by rewriting a casual, slightly aggressive email into a professional, polite, and clear message while adhering to a word count.

Prompt:

Rewrite this email to be professional, concise, and polite:

“Hey, just following up on the dataset you said you’d send last week. We still don’t have it and it’s blocking our work. Also some files earlier had missing columns. Can you check that?”

Requirements:

- Keep it under 120 words

- Maintain a polite but firm tone

- Improve clarity

Output:

Analysis: The model transforms the original message well. It replaces the blunt, accusatory tone with a professional and polite one (“I hope you’re doing well,” “Could you kindly verify”). It clearly states the problem (blocked progress) and adds a placeholder for a deadline, making the request firm but respectful. The email is well under the word limit and demonstrates a nuanced understanding of professional communication.

How Does It Compare to Its Peers?

Mistral Small 4 enters a competitive market. Here is a brief overview of how it compares with other models in the roughly 120B parameter range.

- vs. GPT-OSS 120B: Mistral positions Small 4 as a direct rival, claiming that it matches GPT-OSS on key metrics while generating shorter, more efficient outputs. This translates to reduced latency and cost in production.

- vs. Qwen3.5-122B-A10B: Both models offer large context windows and target high performance. Mistral’s Apache 2.0 license, which grants the right of commercial use, may be one reason enterprises consider it.

- vs. NVIDIA Nemotron 3 Super 120B: NVIDIA has tended to provide detailed documentation of the training data for its base models. A user who values openness of the training corpus may side with Nemotron, but Mistral offers more specific guidance on deployment hardware.

The takeaway is that although the small active parameter count reduces the compute cost per token, these are still large models. According to Mistral’s own hardware recommendations, running Small 4 in production on long-context tasks is a multi-GPU affair.

Conclusion

Mistral Small 4 is not just another big model. It is a well-considered design aimed at a real-world problem: managing multiple specialised AI models. It brings chat, reasoning, and coding together into one efficient endpoint, which is a very attractive offer for developers and businesses. With strong performance, multimodal capabilities, and an open-weights approach, it is a strong competitor in the AI world.

Although it does not wave a magic wand that makes powerful hardware unnecessary, its architectural choices and its emphasis on output efficiency are a substantial step forward. For those interested in building advanced yet affordable AI applications, Mistral Small 4 is certainly a model to adopt and grow with.

Frequently Asked Questions

The key strength of Mistral Small 4 is that it combines the capabilities of a chat model, a reasoning model, and a coding model in a single, efficient endpoint. This simplifies development and reduces operational overhead.

The model can be used commercially: its weights are released under the Apache 2.0 license, which permits commercial use. This makes it a strong option for businesses looking to build on an open-weight foundation.

The minimum hardware recommended by Mistral is 4 H100 GPUs or equivalent. For high-throughput, long-context workloads, Mistral recommends a more elaborate, disaggregated setup.

The model is multimodal and accepts both text and image input, with its Pixtral vision stack analysing the image.

Mistral claims that Small 4 matches or even exceeds comparable models such as GPT-OSS 120B on a number of benchmarks, with the added advantage of producing shorter, more efficient outputs, potentially resulting in reduced latency and cost.
