Introduction to Nvidia’s Latest AI Chip Launch

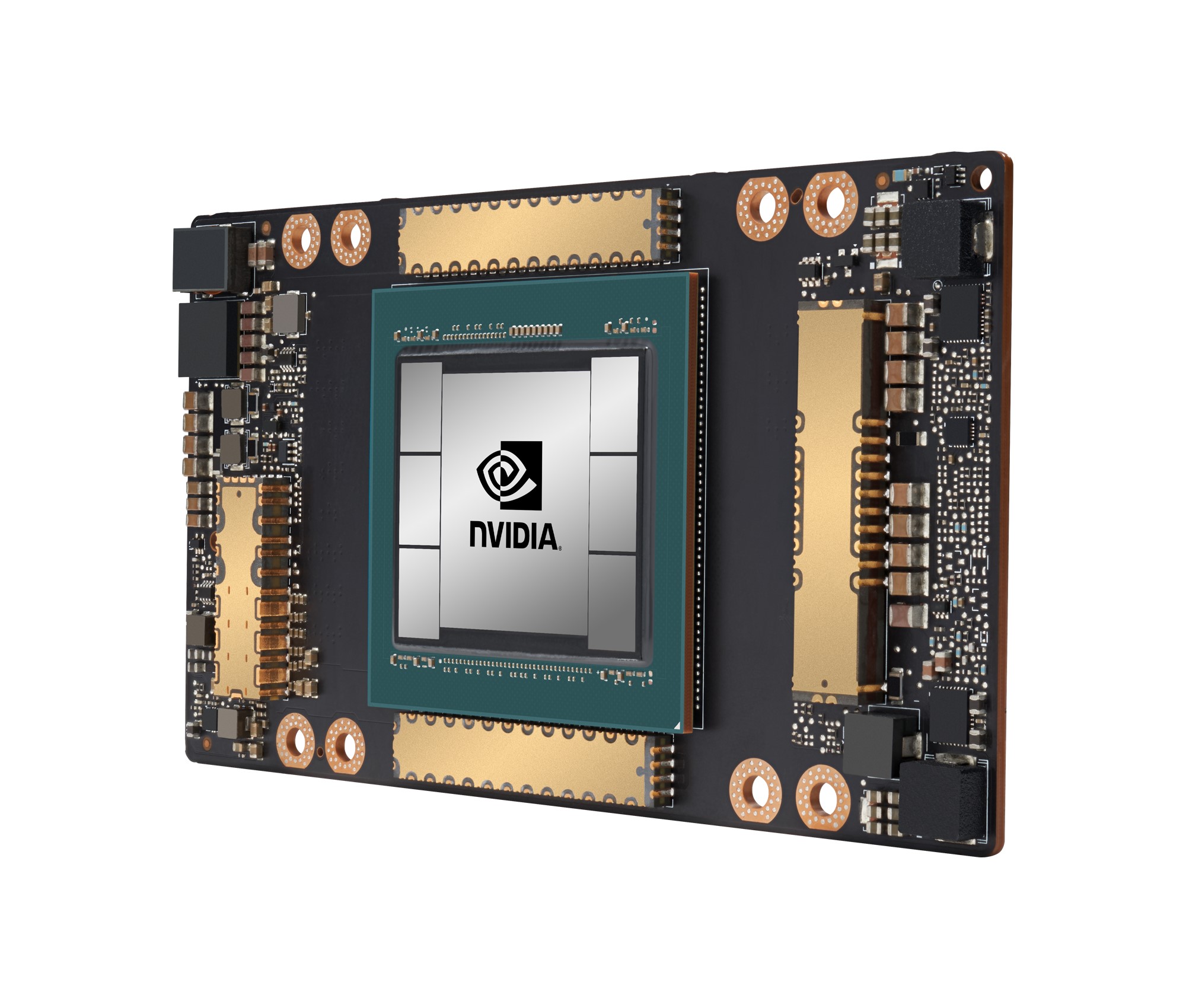

Nvidia has launched new AI chips designed for next-gen data centers, reinforcing its position at the heart of the global artificial intelligence boom. With AI workloads growing rapidly, the company has engineered these chips specifically to meet the demands of modern, AI-first data centers.

These chips are built to handle massive-scale training and inference for generative AI, machine learning, and advanced analytics—workloads that traditional CPUs and older GPUs struggle to manage efficiently.

Why Next-Gen Data Centers Need New AI Chips

Data centers are no longer just storage and networking hubs. They have become AI factories—processing enormous datasets, training large language models, and delivering real-time AI services worldwide.

Key challenges driving demand for new AI chips include:

- Explosive growth in generative AI workloads

- Rising energy and cooling costs

- Need for lower latency and higher throughput

- Demand for scalable, modular AI infrastructure

Nvidia’s new chips directly target these pressure points.

Overview of Nvidia’s New AI Chip Architecture

Massive Performance and Compute Gains

The new AI chips deliver dramatic improvements in compute density, memory bandwidth, and interconnect speed. This enables faster model training and more efficient parallel processing—critical for large-scale AI systems.

By packing more AI power into fewer servers, data centers can achieve higher performance while reducing physical footprint.
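The consolidation argument is simple arithmetic, and a minimal sketch makes it concrete. The function name and every number below are hypothetical placeholders chosen for illustration; they are not published Nvidia figures.

```python
import math


def servers_needed(target_throughput: float, per_server_throughput: float) -> int:
    """Return the smallest whole number of servers that meets the target."""
    if target_throughput < 0 or per_server_throughput <= 0:
        raise ValueError("target must be >= 0 and per-server throughput > 0")
    return math.ceil(target_throughput / per_server_throughput)


# Hypothetical workload and per-server throughput, in arbitrary work units/s.
target = 1000.0
older_generation = servers_needed(target, 10.0)
newer_generation = servers_needed(target, 25.0)

print(f"older: {older_generation} servers, newer: {newer_generation} servers")
```

Under these assumed numbers, a 2.5x gain in per-server throughput cuts the server count by the same factor, which is where the smaller physical footprint comes from.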

Energy Efficiency and Sustainability Focus

Energy efficiency is a core design priority. Nvidia’s chips are optimized to deliver more performance per watt, helping data centers control power consumption and reduce carbon emissions—an increasingly important factor for global operators.
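Performance per watt is easiest to reason about as energy spent on a fixed job. The sketch below assumes two hypothetical chips with made-up throughput and power figures (not real specifications) and compares how much energy each needs to finish the same amount of work.

```python
JOULES_PER_KWH = 3.6e6


def perf_per_watt(throughput: float, power_watts: float) -> float:
    """Work units per second delivered for each watt drawn."""
    return throughput / power_watts


def energy_for_job_kwh(work_units: float, throughput: float, power_watts: float) -> float:
    """Energy in kWh to finish a fixed job at constant throughput and power."""
    seconds = work_units / throughput
    return seconds * power_watts / JOULES_PER_KWH


# Hypothetical chips: B draws twice the power but does three times the work.
job = 3.6e6  # arbitrary work units
chip_a = {"throughput": 100.0, "power_watts": 500.0}
chip_b = {"throughput": 300.0, "power_watts": 1000.0}

for name, chip in (("A", chip_a), ("B", chip_b)):
    ppw = perf_per_watt(**chip)
    kwh = energy_for_job_kwh(job, **chip)
    print(f"chip {name}: {ppw:.2f} units/s per watt, {kwh:.2f} kWh for the job")
```

The point of the comparison is that a chip with a higher power draw can still consume less total energy per job, as long as its performance per watt is higher.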

AI Chips Optimized for Generative AI and LLMs

Training Large Language Models at Scale

Training large language models requires enormous computational resources and fast communication between GPUs. Nvidia’s latest chips feature advanced interconnects that allow thousands of GPUs to work together seamlessly.

This dramatically shortens training times for complex models used in chatbots, search, healthcare, and enterprise automation.
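Why interconnect speed matters can be shown with a rough scaling model. The sketch below assumes a deliberately simplified relationship: wall-clock time equals total single-GPU work divided by the GPU count and by a scaling efficiency between 0 and 1, where the efficiency stands in for time lost to communication. The function name, the efficiency values, and the workload size are all hypothetical.

```python
def training_hours(single_gpu_hours: float, n_gpus: int, scaling_efficiency: float) -> float:
    """Estimated wall-clock hours under a simple efficiency-scaled model."""
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    if not 0.0 < scaling_efficiency <= 1.0:
        raise ValueError("scaling_efficiency must be in (0, 1]")
    return single_gpu_hours / (n_gpus * scaling_efficiency)


# Hypothetical job: 1,000,000 GPU-hours of work spread over 1,000 GPUs.
work = 1_000_000.0
gpus = 1000

slow_interconnect = training_hours(work, gpus, 0.5)  # assumed 50% efficiency
fast_interconnect = training_hours(work, gpus, 0.9)  # assumed 90% efficiency

print(f"slow interconnect: {slow_interconnect:.0f} h")
print(f"fast interconnect: {fast_interconnect:.0f} h")
```

Real training runs do not scale this cleanly, but the model captures the basic effect: with the same number of GPUs, less time lost to communication translates directly into shorter training.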

Faster and Cheaper AI Inference

Inference—running trained models in production—is where costs can skyrocket. The new chips are optimized for high-throughput inference, enabling faster responses at lower operational cost for AI-powered applications.
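The link between throughput and operating cost can be sketched as a cost-per-request calculation. The hourly price and request rates below are hypothetical, and the model assumes the accelerator is kept fully busy at a constant rate.

```python
def cost_per_million_requests(hourly_cost: float, requests_per_second: float) -> float:
    """Cost of serving one million requests at full, constant utilization."""
    if requests_per_second <= 0:
        raise ValueError("requests_per_second must be positive")
    requests_per_hour = requests_per_second * 3600
    return hourly_cost / requests_per_hour * 1_000_000


# Hypothetical: same hourly price, different sustained throughput.
hourly_price = 3.6  # assumed currency units per accelerator-hour
baseline = cost_per_million_requests(hourly_price, 100.0)
optimized = cost_per_million_requests(hourly_price, 400.0)

print(f"baseline:  {baseline:.2f} per million requests")
print(f"optimized: {optimized:.2f} per million requests")
```

At a fixed hourly price, cost per request falls in direct proportion to throughput, which is why inference-optimized hardware matters most for high-volume services.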

Impact on Cloud Service Providers

Hyperscalers and cloud platforms rely heavily on Nvidia hardware to deliver AI services. These new chips allow providers to:

- Offer more powerful AI instances

- Reduce infrastructure costs per workload

- Support larger and more complex customer models

As a result, cloud customers gain access to enterprise-grade AI capabilities without building their own infrastructure.

Benefits for Enterprises and Data Center Operators

Enterprises deploying private or hybrid data centers benefit from:

- Higher AI performance with fewer servers

- Lower energy and cooling requirements

- Faster deployment of AI-driven applications

- Improved ROI on AI investments

This makes advanced AI accessible beyond tech giants.

How Nvidia Stays Ahead of Competitors

Nvidia’s advantage goes beyond hardware. The company tightly integrates chips with networking, system design, and software—creating a full-stack AI platform that competitors struggle to match.

Its rapid innovation cycle and close partnerships with cloud providers further reinforce its leadership position.

Role of Software and AI Ecosystem

Nvidia’s AI chips are deeply integrated with its software ecosystem, including optimized AI frameworks, developer tools, and libraries. This reduces friction for developers and accelerates adoption across industries.

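The combination of hardware and software creates a flywheel effect: a larger developer base produces more optimized software, which in turn makes the hardware more attractive to adopt.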

Implications for the Global AI Infrastructure Race

As nations and enterprises race to build AI capacity, advanced chips become strategic assets. Nvidia’s launch strengthens its role as a critical supplier in the global AI infrastructure race—powering everything from startups to national AI initiatives.

Challenges and Considerations

Despite the excitement, challenges remain:

- High capital costs for cutting-edge AI hardware

- Supply chain constraints and demand pressure

- Need for skilled engineers to operate AI data centers

Organizations must plan carefully to maximize value from these technologies.

FAQs

Q1: Are these AI chips only for large cloud providers?

No, enterprises and research institutions can also deploy them in private data centers.

Q2: Do the new chips reduce AI operating costs?

Yes, improved efficiency lowers power and infrastructure costs over time.

Q3: Are these chips designed for generative AI?

Absolutely. Generative AI and large language models are key use cases.

Q4: Will these chips replace older Nvidia GPUs?

They will complement and gradually replace older generations for high-end workloads.

Q5: How do they impact AI inference performance?

They significantly improve speed and reduce latency at scale.

Q6: Will Nvidia release more AI chips soon?

Nvidia follows a rapid innovation cycle, so continued updates are expected.

Conclusion

Nvidia’s launch of new AI chips designed for next-gen data centers marks a significant step in the evolution of AI infrastructure. By improving performance, efficiency, and scalability, Nvidia is enabling the next wave of AI innovation: smarter applications, faster breakthroughs, and a more sustainable data center future.