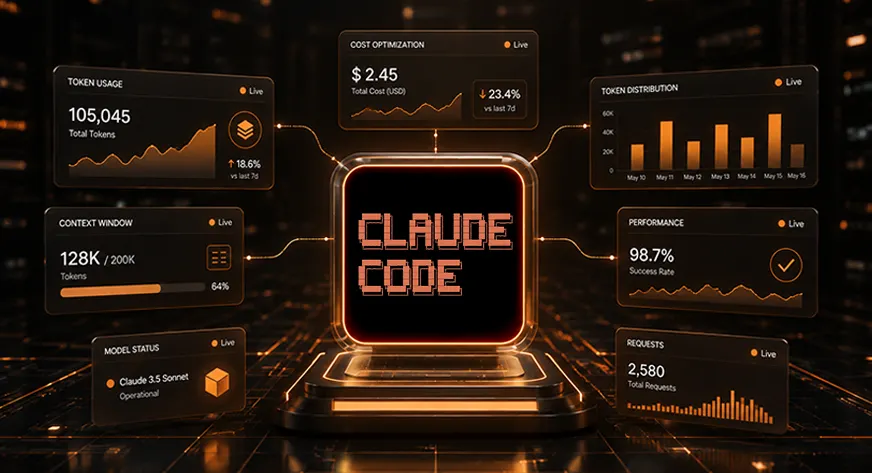

Using Claude Code on large projects can lead to skyrocketing token costs. A 2025 Stanford study reveals developers waste thousands of tokens every day, draining budgets as unchecked context piles up. By setting strict limits from the outset, teams can reduce costs without compromising code quality. Optimizing token usage and context window size early on ensures efficiency and keeps projects on track. In this article, we’ll break down the key steps to save Claude Code tokens and manage your API costs.

The Core Idea

As your chat context expands, so do token costs. The context includes not only file reads and command outputs but also system instructions and chat history. According to Anthropic, token costs increase as the context size grows. To avoid unnecessary expenses, it’s essential to keep your working context compact. By optimizing your context window size from the start, you can better manage token usage and keep costs in check across projects.
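To see why a growing context costs more, here is a rough back-of-the-envelope sketch. It assumes a simplified model in which every turn re-sends the whole history as input and adds a fixed number of tokens. The function name and the 2,000-token figure are illustrative, and real billing (for example with prompt caching) will differ.

```shell
# cumulative_input_tokens TURNS TOKENS_PER_TURN
# Simplified model (an assumption): turn k re-sends k * TOKENS_PER_TURN tokens,
# so the total is TOKENS_PER_TURN * (1 + 2 + ... + TURNS).
cumulative_input_tokens() {
  turns=$1
  per_turn=$2
  echo $(( per_turn * turns * (turns + 1) / 2 ))
}

# Compare one 10-turn session with two 5-turn sessions split by /clear.
one_long=$(cumulative_input_tokens 10 2000)
one_short=$(cumulative_input_tokens 5 2000)
echo "one long session:   $one_long input tokens"
echo "two short sessions: $(( 2 * one_short )) input tokens"
```

Under these assumptions the single long session sends 110000 input tokens, while the two shorter sessions send 60000 in total, which is the intuition behind keeping the working context compact.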

High-Impact Tactics for Context Management

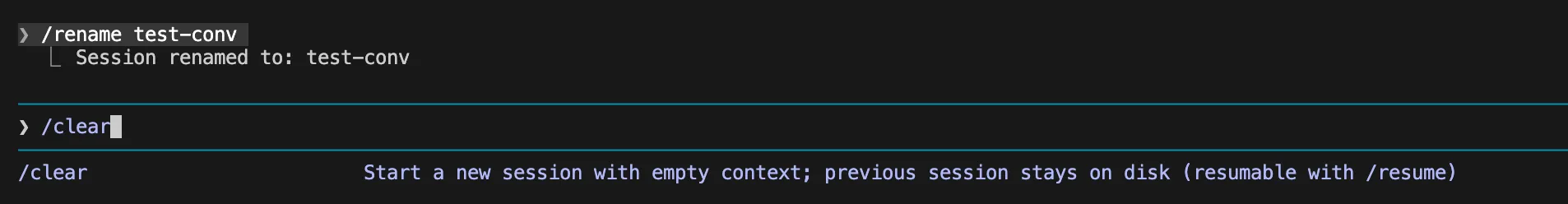

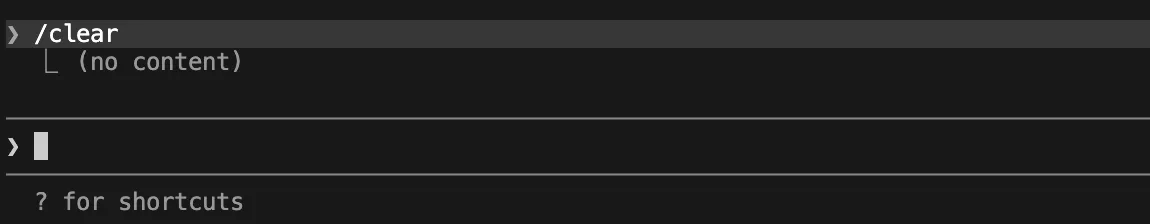

1. Clear the Chat Between Tasks

Clear your chat when switching tasks. Type /clear to start a fresh session. This prevents stale debugging logs from wasting tokens. You reduce Claude Code cost by starting fresh.

Use:

/rename auth-debug-apr30

/clear

Resume later:

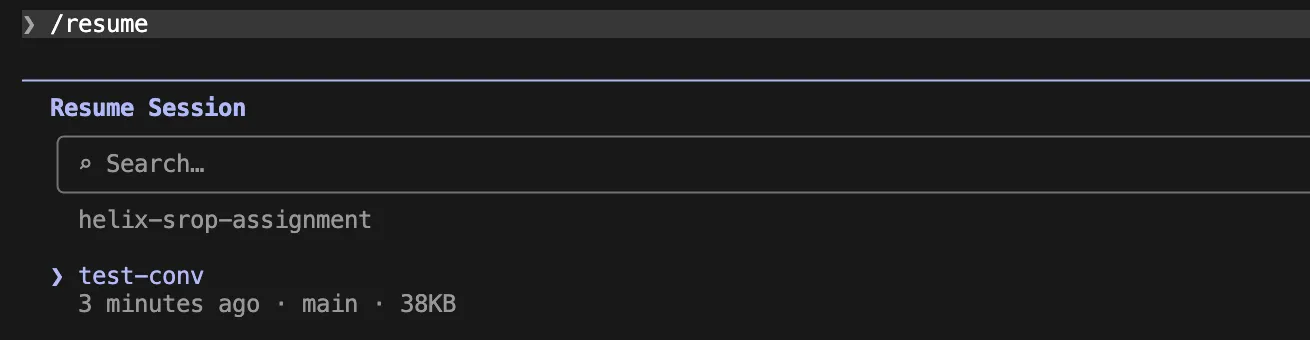

/resume

2. Compact the Context for Continuity

Use the /compact command for long tasks. It summarizes the chat, keeping the thread but dropping stale details. This boosts your Claude Code token savings.

Add custom instructions to CLAUDE.md:

# Compact instructions

When compacting, preserve:

- current task goal

- files changed

- commands already run

- failing tests and exact errors

- decisions made

- next action checklist

Drop:

- old exploration paths

- repeated logs

- irrelevant discussion

Then, in Claude Code, run:

/compact

3. Lower the Auto-Compact Threshold

Compact the chat sooner than the default limit. Claude compacts near 95 percent capacity. Set an override of 70 for normal work.

export CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=70

Use 50 for noisy workflows.

export CLAUDE_AUTOCOMPACT_PCT_OVERRIDE=50

This tactic helps you manage token usage.

4. Monitor Usage Metrics

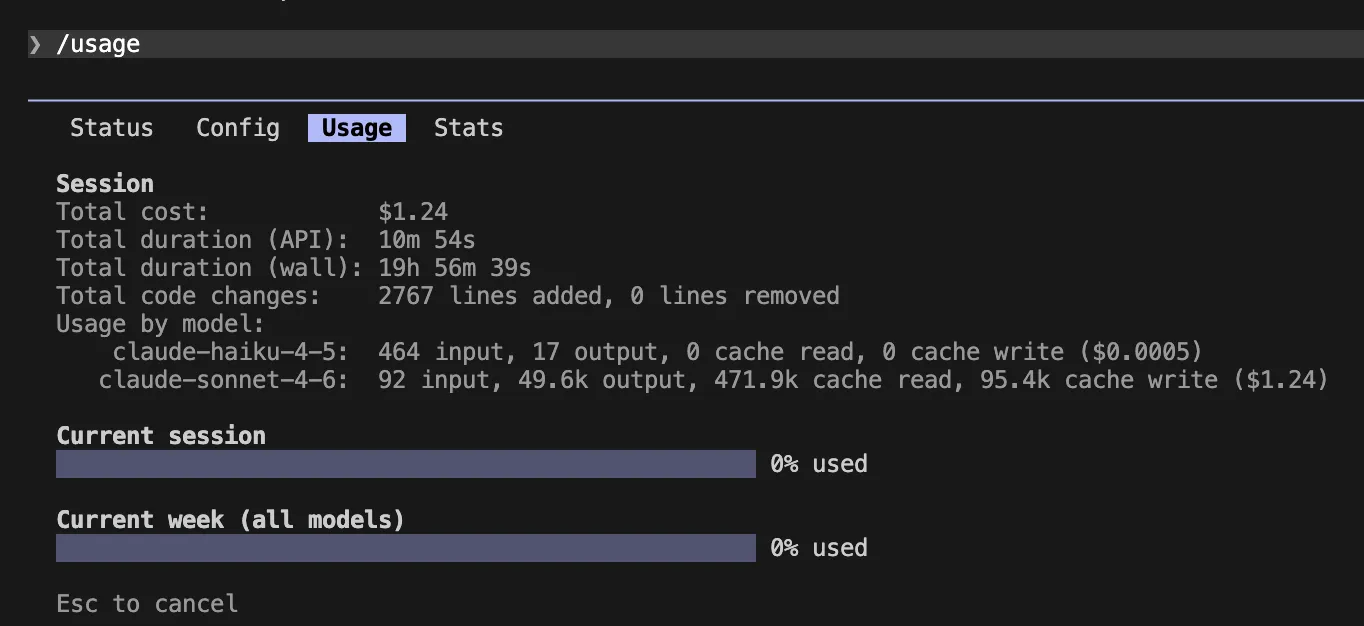

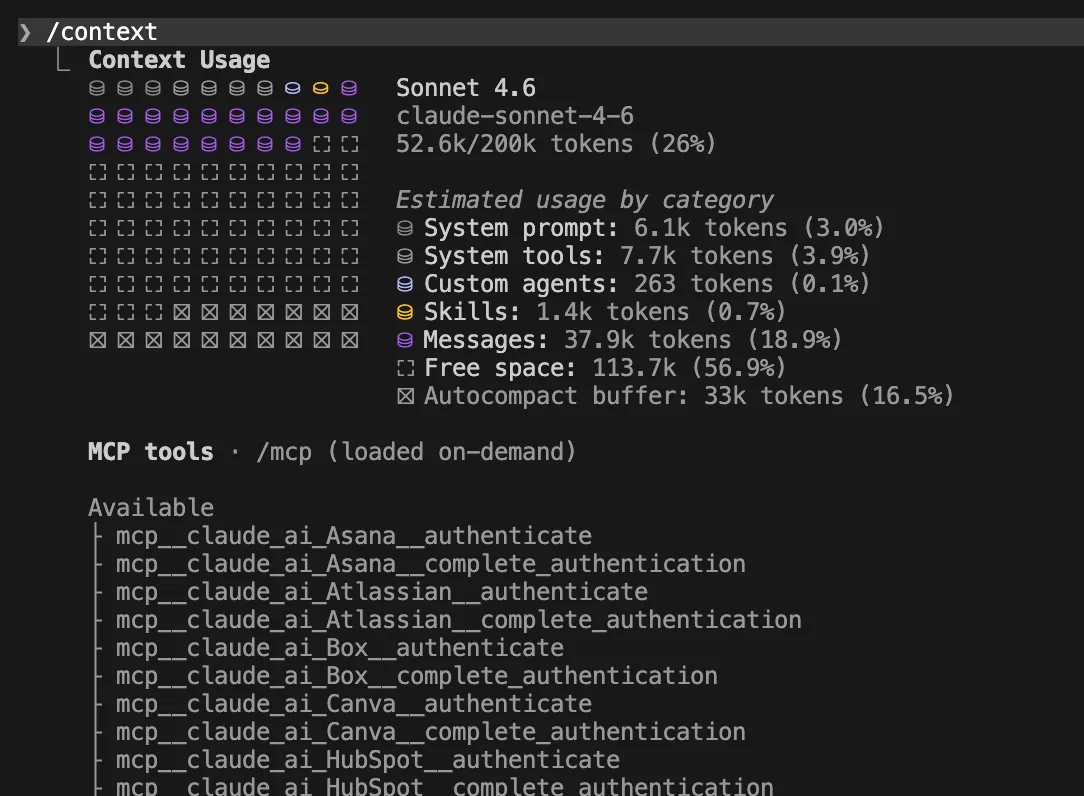

Watch your limits with specific commands. Type /context to see what consumes space. Type /usage to track your session spend. Run these before large tasks to optimize context window space.

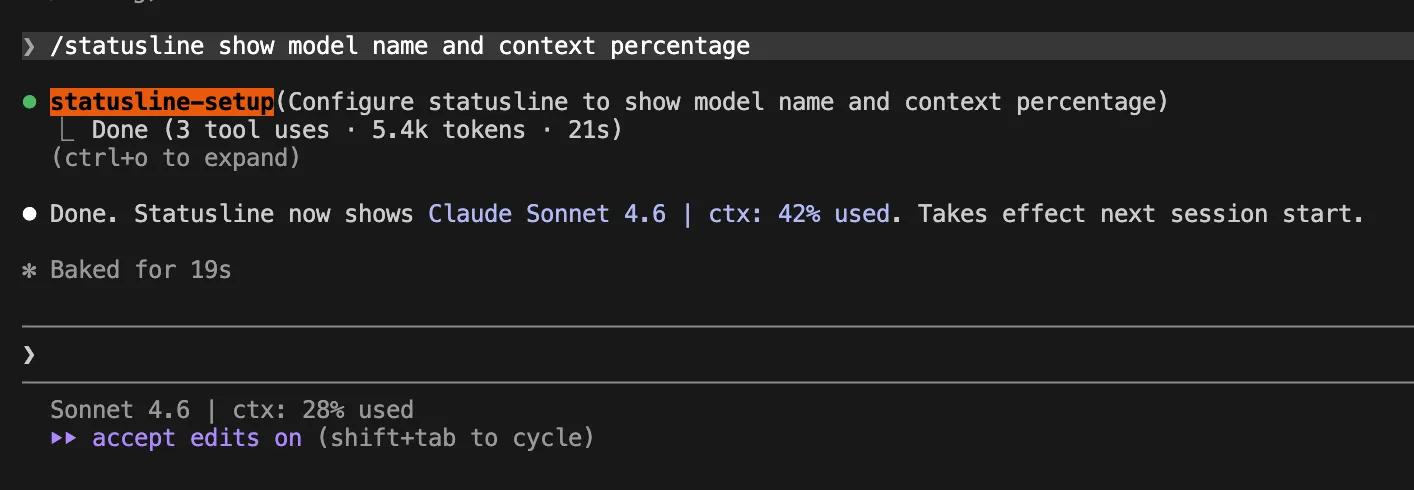

5. Add a Live Status Line

Add a status line to your terminal. It shows the live context percentage and the active model, which helps you catch sudden token spikes before they get expensive. This improves your AI coding assistant experience.

Use this JSON configuration in your ~/.claude/settings.json file:

{

"statusLine": {

"type": "command",

"command": "jq -r '\"[\\(.model.display_name)] \\(.context_window.used_percentage // 0)% context\"'"

}

}

Or you can have Claude Code create this for you automatically by running this command inside the Claude Code chat:

/statusline show model name and context percentage
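If the inline one-liner becomes hard to maintain, the same logic could live in a small script. The sketch below is only an illustration: it assumes jq is installed and that the status JSON arrives on stdin with the same fields the one-liner reads. The function name, the 70 percent hint threshold, and the hint text are hypothetical choices, not Claude Code defaults.

```shell
# render_status: reads the status JSON on stdin and prints one line.
# Field names are taken from the jq one-liner in this section; the 70% hint
# threshold is an illustrative choice, not a Claude Code default.
render_status() {
  jq -r '
    (.context_window.used_percentage // 0) as $pct
    | "[\(.model.display_name // "unknown")] \($pct)% context"
      + (if $pct >= 70 then " (consider /compact)" else "" end)
  '
}
```

Saved in a script that ends by calling render_status, this could be referenced from the "command" field in place of the inline jq expression.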


Instruction and File Optimization

6. Shrink Your Global Instructions

Keep your main instruction file short. Anthropic suggests keeping CLAUDE.md under 200 lines. Large files cost tokens every session. Store only essential facts there. This strategy improves Claude Code token savings.

# Project essentials

- Package manager: pnpm

- Test command: pnpm test

- Typecheck: pnpm typecheck

- Main app code: src/

- API handlers: src/api/

- Do not edit generated files in src/generated/
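One way to keep the instruction file from creeping past the suggested size is a small check that can run before a session or in CI. This is a minimal sketch: the function name is hypothetical, and the default limit of 200 simply mirrors the guideline quoted above.

```shell
# check_instruction_size FILE [MAX_LINES]
# Prints the line count and returns non-zero when FILE is over the limit.
check_instruction_size() {
  file=$1
  max=${2:-200}
  [ -f "$file" ] || { echo "missing: $file"; return 2; }
  # tr strips the padding some wc implementations add around the number.
  lines=$(wc -l < "$file" | tr -d ' ')
  if [ "$lines" -gt "$max" ]; then
    echo "$file has $lines lines (limit $max): consider moving detail elsewhere"
    return 1
  fi
  echo "$file has $lines lines (within $max)"
}
```

For example, `check_instruction_size CLAUDE.md` reports the current size, and a non-zero return can fail a pre-commit hook.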

7. Use Path-Scoped Rules

Use path-scoped rules instead of global ones. Place specific rules in folders. These load only when Claude edits matching files. You reduce Claude Code cost by hiding irrelevant instructions.

---

paths:

- "src/api/**/*.ts"

---

# API rules

- Validate all request inputs.

- Use the standard error response shape.

- Add tests for authorization failures.

To use path-scoped rules in Claude Code, add them to a Markdown file inside the .claude/rules/ directory of your project.

Create a new .md file inside the rules folder. A common naming convention is to name it after the subsystem it governs:

.claude/rules/api-validation.md (or any name ending in .md).
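The steps above can be sketched as a small helper that writes the rule file into a project. The function name is hypothetical; the path and the rule text are the ones shown in this section.

```shell
# write_api_rule PROJECT_DIR
# Creates .claude/rules/api-validation.md holding the rule from this section
# and prints the path of the file it wrote.
write_api_rule() {
  rules_dir="$1/.claude/rules"
  mkdir -p "$rules_dir"
  cat > "$rules_dir/api-validation.md" <<'EOF'
---
paths:
  - "src/api/**/*.ts"
---
# API rules

- Validate all request inputs.
- Use the standard error response shape.
- Add tests for authorization failures.
EOF
  echo "$rules_dir/api-validation.md"
}
```

Running `write_api_rule .` from the project root creates the file in place. The quoted heredoc delimiter keeps the glob pattern from being expanded by the shell.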

8. Isolate Specialised Workflows

Move specialized workflows into distinct skills. Skills load on demand. Add a disable flag to hide them until needed. This keeps the prompt clean. It helps you manage token usage.

You can add a Claude skill in .claude/skills/

---

name: fix-issue

description: Fix a GitHub issue by number

disable-model-invocation: true

allowed-tools: Bash(gh *) Bash(pnpm test *) Read Grep Edit

---

Fix GitHub issue $ARGUMENTS.

Steps:

1. Use gh issue view to read the issue.

2. Identify the smallest relevant files.

3. Write or update tests first.

4. Implement the fix.

5. Run the targeted test.

6. Summarize files changed.

Invoke it using:

/fix-issue 123

9. Prefer CLI Tools

Prefer CLI tools over server tools. Anthropic favors standard tools over MCP servers. CLI tools cause less overhead. Disable unused MCP servers right away. This streamlines your AI coding assistant.

Good prompt:

Use gh to inspect PR 42 and return only the failing check names.

10. Cap Server Output

Cap your tool output sizes. Tool outputs flood your chat context. Set the maximum limit to 8000. You optimize context window space this way.

export MAX_MCP_OUTPUT_TOKENS=8000

11. Cap Terminal Output

Cap your terminal command output. Long test logs drain tokens fast. Set the bash output length to 20000. This protects your Claude Code token savings.

export BASH_MAX_OUTPUT_LENGTH=20000

12. Filter Logs

Filter log output before Claude sees it. Don’t feed raw logs into the chat. Use basic commands to extract error lines. This step helps reduce Claude Code cost.

pnpm test 2>&1 | grep -A 5 -E "FAIL|ERROR|Error|failed" | head -120

If you want to start a full session with the filtered logs pre-loaded into the context, pipe the output into the standard claude command.

Start Claude Code with the following command:

pnpm test 2>&1 | grep -A 5 -E "FAIL|ERROR|Error|failed" | head -120 | claude
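The filter above can also be wrapped in reusable shell functions. The sketch below is one possible shape, with hypothetical function names: it keeps the same grep pattern and 120-line cap, and additionally preserves the exit status of the test command, which a plain pipeline would otherwise replace with the status of head.

```shell
# filter_failures [MAX_LINES]
# Reads test output on stdin; keeps failure lines plus 5 lines of context.
filter_failures() {
  grep -A 5 -E "FAIL|ERROR|Error|failed" | head -n "${1:-120}"
}

# run_filtered COMMAND [ARGS...]
# Runs the command, prints only the filtered view plus a one-line summary,
# and returns the command's own exit status.
run_filtered() {
  raw=$(mktemp)
  "$@" > "$raw" 2>&1 && status=0 || status=$?
  filter_failures < "$raw" || true
  echo "[filtered from $(wc -l < "$raw" | tr -d ' ') raw lines, exit status $status]"
  rm -f "$raw"
  return "$status"
}
```

For example, `run_filtered pnpm test` prints only the failing sections, and the summary line shows how much raw output was held back from the context.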

Model and Agent Strategies

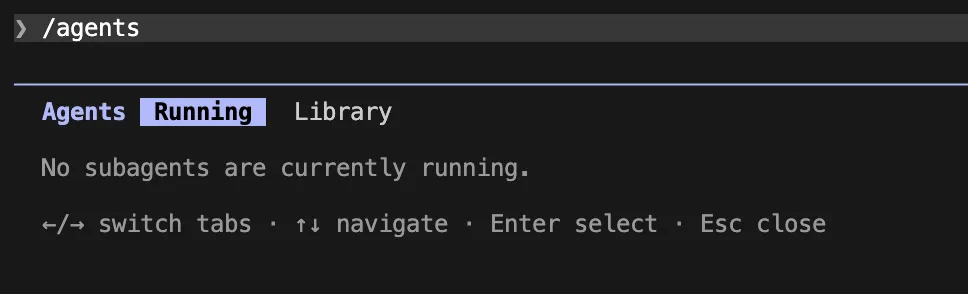

13. Deploy Subagents

Deploy subagents for verbose research tasks. Subagents handle heavy reading in an isolated space. They return clean summaries to the main chat. This helps you manage token usage.

Use a subagent to inspect the failing auth tests and logs. Return only:

1. failing test names

2. likely root cause

3. files that need edits

4. shortest fix plan

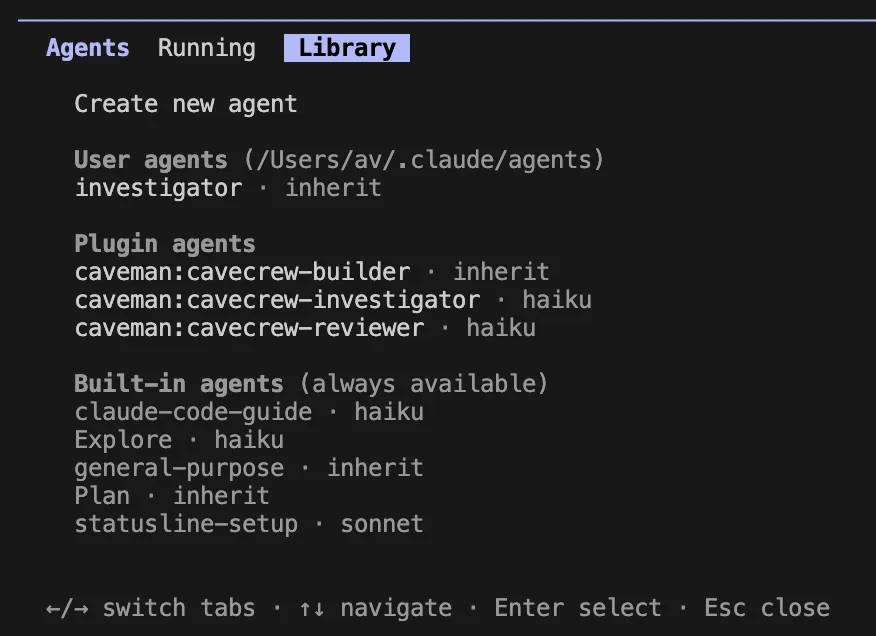

If you perform, say, an investigator task frequently, you can define a permanent subagent by creating a Markdown file at .claude/agents/investigator.md

After saving, you can simply type /investigator "auth tests are failing" to trigger the workflow.

Or you can simply have Claude generate this:

Use /agents in Claude Code.

Press the left arrow key to go to Library and select Create new agent.

Then select Personal or Project scope, and then Generate with Claude.
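The article does not show what goes inside investigator.md, so the following is only a sketch. It assumes subagent files consist of YAML frontmatter (name, description, tools) followed by the subagent's instructions; the description wording, the tool list, and the helper function name are illustrative and should be checked against the Claude Code documentation.

```shell
# write_investigator_agent PROJECT_DIR
# Writes an example .claude/agents/investigator.md (assumed format) and
# prints the path of the file it wrote.
write_investigator_agent() {
  agents_dir="$1/.claude/agents"
  mkdir -p "$agents_dir"
  cat > "$agents_dir/investigator.md" <<'EOF'
---
name: investigator
description: Inspects failing tests and logs and reports a short summary.
tools: Read, Grep, Glob, Bash
---
Inspect the failing tests and logs you are pointed at. Return only:

1. failing test names
2. likely root cause
3. files that need edits
4. shortest fix plan
EOF
  echo "$agents_dir/investigator.md"
}
```

The body reuses the four-item report format from the prompt above, so the main chat receives the same compact summary each time.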

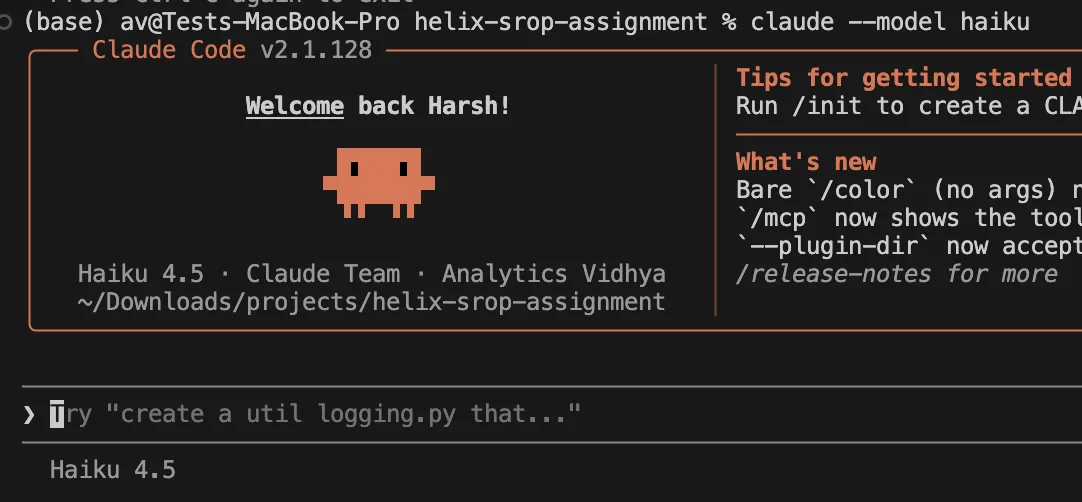

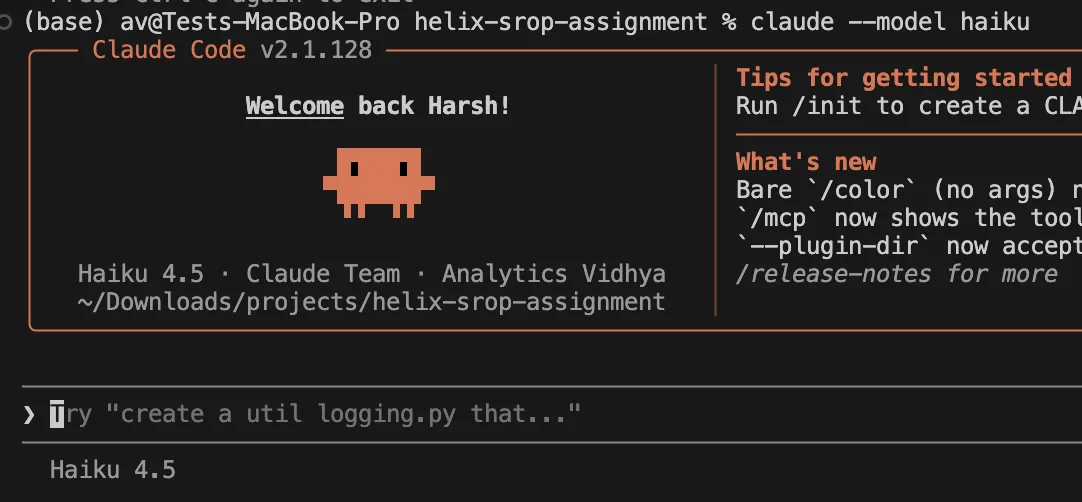

14. Select Cheaper Models

Select cheaper models for standard work. Sonnet handles most daily coding tasks. It costs less than Opus. Reserve Opus for deep architectural reasoning. This fits a smart AI coding assistant workflow.

claude --model haiku

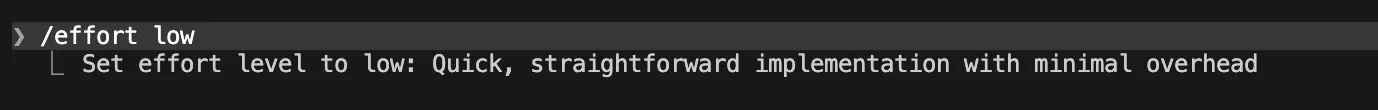

15. Lower the Effort Level

Lower the effort level for simple tasks. Low effort runs fast and costs less. Use medium effort for standard coding. Avoid the max setting. This helps your Claude Code token savings.

/effort low

16. Disable Extended Thinking

Disable extended thinking for simple edits. Thinking tokens count as output tokens. Set a strict token cap for basic tasks. You reduce Claude Code cost a lot this way.

export CLAUDE_CODE_DISABLE_THINKING=1

17. Use Code Plugins

Install code intelligence plugins for typed languages. These plugins provide accurate symbol navigation, so Claude can skip reading irrelevant files. You optimize context window limits with this tactic.

File Access and Workflow Control

18. Deny Noisy Files

Deny access to noisy project files. Edit your local settings file. Block access to logs and build folders. Claude can’t discover these ignored files. This protects your AI coding assistant workflow.

Open ~/.claude/settings.json and merge the JSON into your existing file:

{

"permissions": {

"deny": [

"Read(./.env)",

"Read(./.env.*)",

"Read(./secrets/**)",

"Read(./node_modules/**)",

"Read(./dist/**)",

"Read(./build/**)",

"Read(./coverage/**)",

"Read(./.next/**)",

"Read(./tmp/**)",

"Read(./logs/**)",

"Read(./*.log)"

]

}

}
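Before copying the whole deny list, it can help to see which of these folders actually exist in a project and how large they are. The helper below is a hypothetical sketch that checks the directory names from the list above and prints each one found with its size in kilobytes.

```shell
# list_noisy_dirs [PROJECT_DIR]
# Prints "<dir> <size>KB" for each noisy directory from the deny list
# that exists under PROJECT_DIR (defaults to the current directory).
list_noisy_dirs() {
  root=${1:-.}
  for d in node_modules dist build coverage .next tmp logs; do
    if [ -d "$root/$d" ]; then
      # du -sk prints the size in kilobytes, a tab, then the path.
      size_kb=$(du -sk "$root/$d" | cut -f1)
      echo "$d ${size_kb}KB"
    fi
  done
}
```

The largest entries are the ones where a deny rule is most likely to save context.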

19. Avoid Broad Scans

Don’t ask Claude to read the whole repository. Vague prompts trigger large file scans. Give exact file names instead. This simple rule helps manage token usage.

Good prompt:

The login redirect fails. Start with src/auth/session.ts. Read only related files.

20. Provide Verification Targets

Provide verification targets up front. Tell Claude how to check its work. Provide expected outputs and exact test names. This prevents correction loops and aids Claude Code token savings.

21. Course-Correct the Model

Course-correct the model early in the process. Interrupt Claude if it reads irrelevant files. Rewind the session to a safe point. You reduce Claude Code cost by stopping bad paths.

22. Use a Shorter System Prompt

Use a shorter system prompt for Opus 4.7. Enable this hidden setting with care. It drops long tool descriptions. This trick helps optimize context window space.

export CLAUDE_CODE_SIMPLE_SYSTEM_PROMPT=1

23. Remove Git Instructions

Remove built-in git rules if needed. Disable default git flows. Do this only if you use custom workflows. It shrinks the baseline prompt for your AI coding assistant.

export CLAUDE_CODE_DISABLE_GIT_INSTRUCTIONS=1

Recommended Configurations

Use this local setup for standard coding tasks:

{

"permissions": {

"deny": [

"Read(./.env)",

"Read(./.env.*)",

"Read(./secrets/**)",

"Read(./node_modules/**)",

"Read(./dist/**)",

"Read(./build/**)",

"Read(./coverage/**)",

"Read(./.next/**)",

"Read(./tmp/**)",

"Read(./logs/**)",

"Read(./*.log)"

]

},

"env": {

"CLAUDE_AUTOCOMPACT_PCT_OVERRIDE": "70",

"BASH_MAX_OUTPUT_LENGTH": "20000",

"MAX_MCP_OUTPUT_TOKENS": "8000",

"CLAUDE_CODE_EFFORT_LEVEL": "medium"

}

}

Use this setup for aggressive savings:

{

"env": {

"CLAUDE_AUTOCOMPACT_PCT_OVERRIDE": "50",

"BASH_MAX_OUTPUT_LENGTH": "12000",

"MAX_MCP_OUTPUT_TOKENS": "5000",

"CLAUDE_CODE_EFFORT_LEVEL": "low"

}

}

Optimal Prompt Template

Follow this template format to save tokens:

Task: Fix [specific bug] in [specific files].

Scope:

- Start with: [file1], [file2]

- Do not scan the whole repo.

- Only read additional files if they are imported.

Token discipline:

- Keep command output short.

- Filter test output to failures only.

- Summarize findings before editing.

- If context exceeds 70%, compact the chat.

Verification:

- Add or update targeted tests.

- Run only the relevant test file first.

- Run broader tests after the targeted test passes.
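If you reuse this template often, a small shell function can fill in the placeholders so the prompt stays consistent. This is only a convenience sketch: the function name is hypothetical, and it takes the bug description and two starting files as arguments.

```shell
# task_prompt BUG FILE1 FILE2
# Prints the prompt template with the bug and starting files filled in.
task_prompt() {
  cat <<EOF
Task: Fix $1 in $2, $3.
Scope:
- Start with: $2, $3
- Do not scan the whole repo.
- Only read additional files if they are imported.
Token discipline:
- Keep command output short.
- Filter test output to failures only.
- Summarize findings before editing.
- If context exceeds 70%, compact the chat.
Verification:
- Add or update targeted tests.
- Run only the relevant test file first.
- Run broader tests after the targeted test passes.
EOF
}
```

For instance, `task_prompt "the login redirect" src/auth/session.ts src/auth/redirect.ts` prints a ready-to-paste prompt; the second file name here is a made-up example.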

Things to Avoid

- Don’t rely on old ignore files. The system deprecates these old settings. Use the deny permissions setting instead.

- Don’t install every available plugin. Extra plugins add constant overhead. Disable unused tools to maintain speed.

- Don’t always default to the most expensive model. Use Opus for complex tasks. Rely on Sonnet for your daily workflow.


Conclusion

Taking control of your tools builds confidence in your project and helps protect your budget. Managing token usage carefully sharpens your AI assistant and makes development more efficient and cost-effective. Teams that optimize context window space can reduce API costs significantly. Setting clear boundaries, like clearing chats, limiting file access, and writing concise prompts, leads to real savings. By applying these strategies to your next project, you’ll improve both your budget and code quality.

Frequently Asked Questions

A. Type the /clear command in your terminal. This drops all previous context and starts fresh.

A. Vague prompts trigger large codebase scans. Provide precise file names to restrict the search scope.

A. Set the BASH_MAX_OUTPUT_LENGTH limit in your environment. Filter test outputs with standard bash tools.
