AGIBOT compares GO-2’s performance against other leading models. | Source: AGIBOT

AGIBOT today released GO-2, its next-generation foundation model for embodied AI. The company said GO-2 bridges the “last mile” from logical reasoning to precise execution.

Building on its predecessor, GO-1, GO-2 introduces a unified architecture that integrates logical reasoning and action execution within a single system. AGIBOT said this enables AI-powered robots not only to plan correctly but also to execute reliably in real-world environments.

The company claimed that GO-2 brings together tens of thousands of hours of interaction data, marking a transition from “black-box exploration” to a “true unity of reasoning and action.”

GO series evolves from perception to actuation

A year ago, AGIBOT launched the Genie Operator-1 (GO-1) foundation model. Built on the ViLLA architecture, it unified the modeling of vision, language, and action. AGIBOT has since integrated the model into Genie Studio, its one-stop embodied development platform, enabling users to deploy models and validate them in large-scale real-world applications.

GO-1 taught robots to “understand.” It could interpret instructions, recognize scenes, and plan tasks, said the company. However, as systems entered more complex real-world environments, a critical issue emerged: even with a reasonable plan, the robot’s actions did not always strictly adhere to it.

This is not a failure of planning; it is a fracture between reasoning and execution, asserted AGIBOT. The company said the root cause is a long-standing challenge in robotics: the “semantic-actuation gap.”

In traditional vision-language-action (VLA) models, high-level reasoning signals and real-world motor commands remain disconnected. During execution, control modules often bypass the reasoning signals, leading to accumulated errors in long-horizon tasks and reduced system stability, noted the Shanghai-based company.

GO-2 achieves ‘unity of reasoning and action’

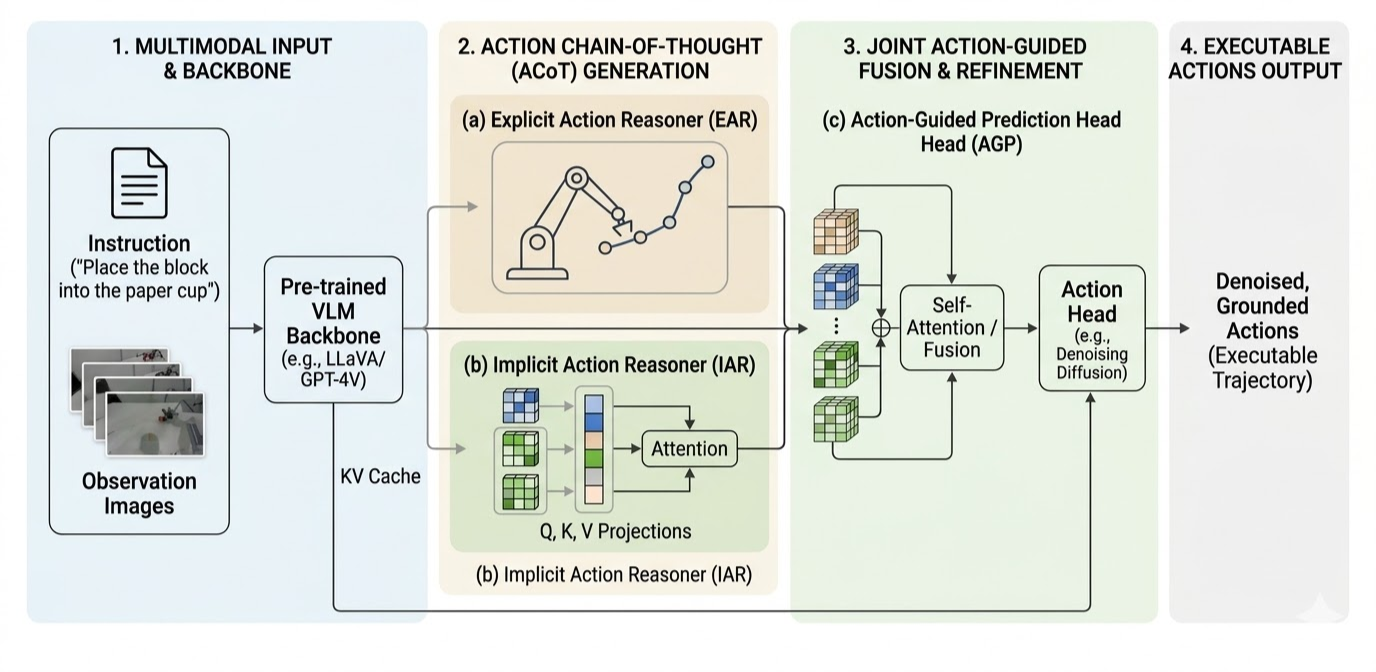

GO-2 performs reasoning using an action chain of thought. | Source: AGIBOT

To achieve the unity of reasoning and action, said AGIBOT, a system must solve two key problems simultaneously:

- How to generate “executable” action plans through deep spatial reasoning

- How to ensure stable execution of those plans in real environments

AGIBOT said it addresses both through an architecture built on two innovations. The first is the action chain of thought. Unlike traditional models that map instructions directly to raw motor commands, GO-2 generates a high-level sequence of action intents as a macro plan.

Just as a human mentally simulates the arc of a basketball shot before releasing the ball, GO-2 makes this process explicit. Through action-level reasoning, the robot plans a complete behavioral path and then executes it step by step. Complex tasks are naturally decomposed into ordered phases, ensuring that execution rests on clear, logical reasoning, explained AGIBOT.
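To make the macro-plan idea concrete, here is a minimal Python sketch of plan-then-execute decomposition. It is not AGIBOT’s implementation: GO-2 learns its action intents, whereas `ActionIntent`, `plan_pick_and_place`, and `execute_plan` below are hypothetical names with a hand-written plan, used only to show how one instruction becomes an ordered list of phases that execution must follow in sequence.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ActionIntent:
    """One phase of a macro plan (hypothetical structure, not GO-2's format)."""
    verb: str
    target: str


def plan_pick_and_place(obj: str, destination: str) -> list[ActionIntent]:
    """Hypothetical hand-written planner: decompose one instruction into
    ordered action intents before any motor command is issued."""
    return [
        ActionIntent("reach", obj),
        ActionIntent("grasp", obj),
        ActionIntent("move_to", destination),
        ActionIntent("release", obj),
    ]


def execute_plan(plan: list[ActionIntent],
                 run_skill: Callable[[ActionIntent], bool]) -> list[ActionIntent]:
    """Execute intents strictly in plan order and stop at the first failure,
    so no phase runs before the phases it depends on have succeeded."""
    completed: list[ActionIntent] = []
    for intent in plan:
        if not run_skill(intent):
            break
        completed.append(intent)
    return completed


plan = plan_pick_and_place("cup", "tray")
# Stand-in skill executor that fails on the move_to phase.
done = execute_plan(plan, run_skill=lambda intent: intent.verb != "move_to")
print([f"{i.verb}({i.target})" for i in done])
```

Here the failing `move_to` phase halts execution after `reach(cup)` and `grasp(cup)`, rather than releasing the cup in the wrong place. Stopping at the first failed phase is a choice made for this sketch; a real system might replan instead.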

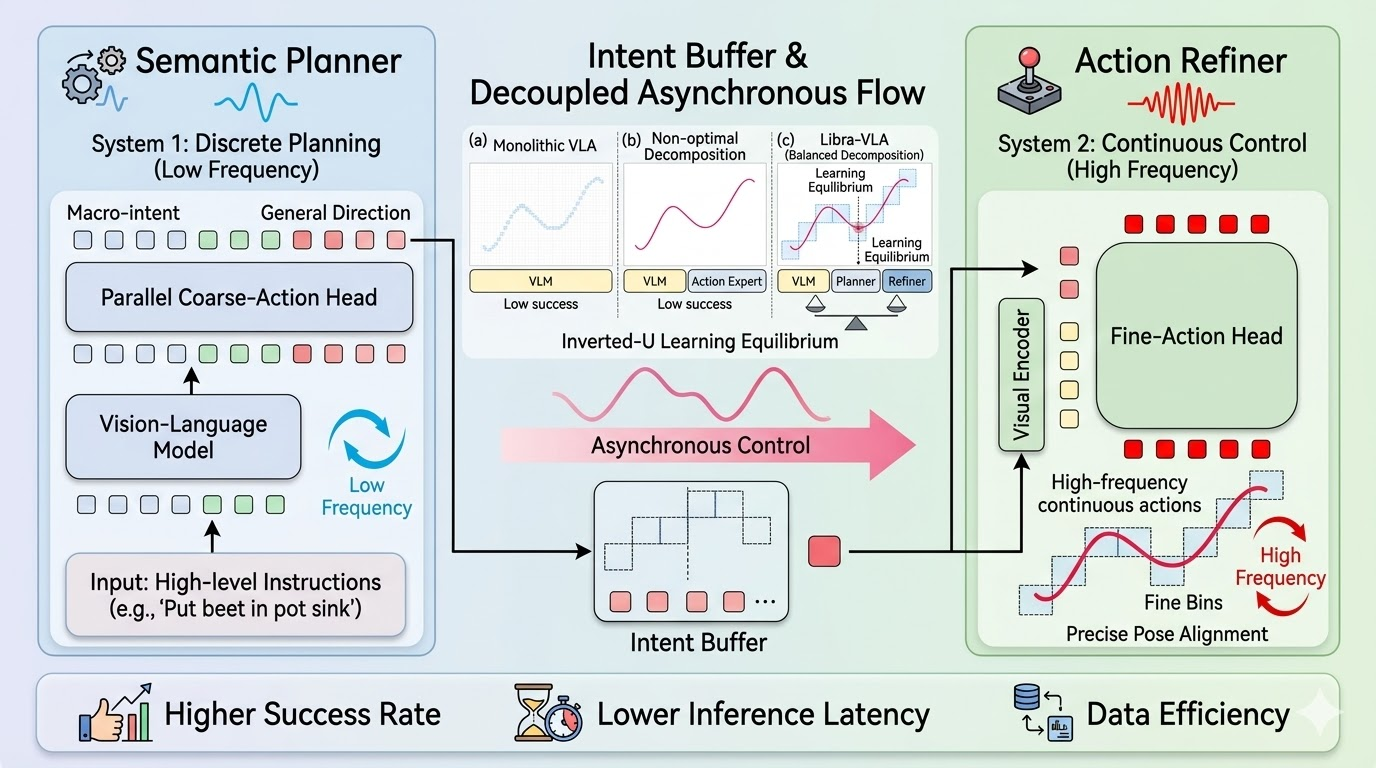

The second innovation is an asynchronous dual system: low-frequency planning paired with high-frequency following. The company said high-level reasoning alone cannot guarantee stable execution in real-world environments full of noise and disturbances.

To address this, GO-2 introduces an Asynchronous Dual-System architecture that translates high-level reasoning into precise robot actions. A semantic planning module runs at a lower frequency, acting as a “general commander.” It generates structured high-level action sequences through progressive refinement, ensuring that the reasoning itself is inherently “executable” and provides stable geometric anchors for control.

An action-following module, on the other hand, runs at a higher frequency. It acts as an “agile executor” that continuously receives high-level intents and combines them with real-time observations to generate specific control signals, performing residual refinement to compensate for environmental noise.
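The division of labor between the two modules can be illustrated with a toy one-dimensional control loop. This is a sketch under stated assumptions, not GO-2’s design: both modules are hand-written stand-ins for what are learned networks in GO-2, `plan_subgoal`, `follow`, and `run` are hypothetical names, and true asynchrony is approximated by a single-threaded multi-rate loop. The planner refreshes a sub-goal (the “geometric anchor”) every `plan_period` ticks, while the follower acts on every tick using the latest sub-goal and a fresh, noisy observation.

```python
import random


def plan_subgoal(goal: float, position: float, max_step: float = 0.5) -> float:
    """Low-frequency 'commander' (hypothetical stand-in for the semantic planner).

    Emits an anchor: a sub-goal at most `max_step` away from the current
    position, in the direction of the final goal.
    """
    delta = max(-max_step, min(max_step, goal - position))
    return position + delta


def follow(anchor: float, observed: float, gain: float = 0.5) -> float:
    """High-frequency 'executor' (hypothetical stand-in for the action follower).

    Re-reads the observation every tick and closes a fraction of the gap to
    the current anchor, which also corrects drift caused by disturbances.
    """
    return gain * (anchor - observed)


def run(goal: float = 2.0, ticks: int = 60, plan_period: int = 10,
        noise: float = 0.02, seed: int = 0) -> float:
    """Multi-rate loop: the planner fires every `plan_period` ticks and the
    follower fires every tick using the most recent anchor."""
    rng = random.Random(seed)
    position = 0.0
    anchor = position
    for t in range(ticks):
        if t % plan_period == 0:                # slow loop: refresh the plan
            anchor = plan_subgoal(goal, position)
        command = follow(anchor, position)      # fast loop: track the anchor
        position += command + rng.uniform(-noise, noise)  # noisy actuation
    return position


print(f"final position: {run():.3f}")
```

Because the follower re-reads the position on every tick, the random actuation noise stays bounded instead of accumulating into drift, and the final position ends within a few hundredths of the goal of 2.0. On a real robot the two loops would typically run in separate threads or processes, with the follower reading whichever plan is most recent.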

AGIBOT said the two systems are deeply aligned. To ensure that execution strictly adheres to reasoning, GO-2 uses a teacher-forcing mechanism during training, which teaches the model to perform robustly even when its reasoning is “approximately correct but imperfect.”
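The article does not detail how GO-2 applies teacher forcing. One common reading, sketched below purely as an assumption, is to train the follower on the ground-truth plan rather than on the planner’s own predictions, and to jitter that plan so the follower also learns from “approximately correct but imperfect” inputs while the supervision target stays the expert’s action. `Example` and `make_training_examples` are hypothetical names, and the Gaussian jitter is a stand-in for whatever perturbation AGIBOT actually uses.

```python
import random
from dataclasses import dataclass


@dataclass
class Example:
    plan: list[float]     # conditioning input: ground-truth plan, possibly jittered
    observation: float    # what the robot sensed at this step
    target_action: float  # supervision target: the expert action, never perturbed


def make_training_examples(
    demos: list[tuple[list[float], float, float]],
    noise_std: float = 0.0,
    seed: int = 0,
) -> list[Example]:
    """Build follower training examples with (noisy) teacher forcing.

    Each demo is (ground_truth_plan, observation, expert_action). The follower
    is conditioned on the ground-truth plan instead of the planner's own output
    (teacher forcing). With noise_std > 0, every plan value is jittered so the
    follower also sees imperfect plans; the target action is left unchanged.
    """
    rng = random.Random(seed)
    examples = []
    for plan, observation, action in demos:
        if noise_std > 0:
            conditioned = [p + rng.gauss(0.0, noise_std) for p in plan]
        else:
            conditioned = list(plan)  # pure teacher forcing: exact ground truth
        examples.append(Example(conditioned, observation, action))
    return examples


demos = [([0.5, 1.0], 0.0, 0.5), ([1.5, 2.0], 1.0, 0.5)]
clean = make_training_examples(demos)
noisy = make_training_examples(demos, noise_std=0.05, seed=1)
print(clean[0].plan, [round(p, 3) for p in noisy[0].plan])
```

Training on a mix of clean and jittered copies of the same demonstrations is one way to trade faithful plan-following against robustness; the source does not say how GO-2 strikes that balance.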

AGIBOT’s GO-2 uses a decoupled asynchronous flow. | Source: AGIBOT

How GO-2 performs across benchmarks

AGIBOT said that by bridging reasoning and action, GO-2 achieves “a paradigm shift” in behavioral performance, significantly outperforming current mainstream models such as π0.5 and NVIDIA GR00T:

- LIBERO benchmark: GO-2 ranks first across the spatial, object, goal, and long-horizon task suites, with an average success rate of 98.5%.

- LIBERO-Plus benchmark: In environments with various disturbances, GO-2 achieved an 86.6% zero-shot success rate.

- VLABench benchmark: In rigorous tests of cross-category and texture generalization, GO-2 achieved an average score of 47.4, notably outperforming existing methods in handling diverse object textures and unseen categories.

- Genie Sim 3.0 (sim-to-real): Trained solely on simulation data, GO-2 achieved an 82.9% success rate in real-world testing.

From model to deployment: enabling continuous learning in the real world

Beyond model performance, AGIBOT said it is extending GO-2 into real-world deployment through a “pre-training + post-training + data feedback loop” paradigm. Integrated with Genie Studio, the system enables:

- Continuous data collection across fleets of robots

- Cloud-based collaborative training

- Online post-training in real-world environments

This infrastructure supports large-scale deployment and ongoing improvement, said the company. It can support distributed training across thousands of robots and deliver roughly a tenfold improvement in training efficiency.

AGIBOT said the model can also cut task startup time to just minutes, enable minute-level convergence on industrial tasks, and improve rates by two to four times while reducing data requirements by more than 50%.

This transforms GO-2 from a static model into a continuously evolving embodied system, according to AGIBOT.

Editor’s note: The 2026 Robotics Summit & Expo, held May 27 and 28 in Boston, will include sessions on embodied and physical AI. Registration is now open.

AGIBOT moves toward embodied agents with memory

Beyond stable execution, AGIBOT is exploring the next frontier: Can robots remember and become smarter over time?

Its latest research introduces the OpenClaw Memory System (arXiv:2603.11558), which gives robots long-term memory so they can reuse reasoning traces from past interactions.

By combining action reasoning, hierarchical execution, and long-term memory, AGIBOT said it hopes to close a complete intelligent loop: from perception to reasoning to action to memory.

The post AGIBOT releases GO-2 foundation model for embodied AI appeared first on The Robot Report.