AI-driven brute force is a problem that's only getting worse. According to a recent study, cyberattacks using AI and ML have risen by 89% year-over-year as of early 2026, with around 11,000 attacks taking place per second.

What's more, the technology behind these attacks is only improving. As the volume rises, so does the effectiveness with which these attacks bypass security measures and exploit vulnerabilities.

Security Through Rate Limiting

One of the ways these attacks are handled is known as rate limiting. For those unaware, this is the process of controlling how frequently a user or system can make requests to a platform within a set period of time.

By setting thresholds for request frequency, rate limiting aims to stop attackers from overwhelming servers and to slow down brute-force attempts. Many companies have adopted it as the main line of defence in their security strategy.
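To make the idea of a request threshold concrete, here is a minimal sketch of one common way to implement it, a sliding-window limiter keyed by IP, account, or session. The class name and the limit and window values are hypothetical choices for illustration, not taken from any specific product.

```python
import time
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class SlidingWindowRateLimiter:
    """Allow at most `limit` requests per `window` seconds for each key (IP, account, session)."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        # key -> timestamps of the requests accepted inside the current window
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        hits = self._hits[key]
        # Forget requests that have slid out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False  # over the threshold: reject, delay, or challenge this request
        hits.append(now)
        return True
```

With `limit=5` and `window=60`, seven requests from the same IP within one minute return `True` five times and then `False` twice; once the oldest timestamps age out of the window, that IP is allowed again.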

But there's a problem: in a world where AI-driven brute force is highly adaptive, there's only so much traditional rate limiting can do. For teams looking to prevent brute force attacks, bot detection and mitigation need to be far more dynamic and intelligent, with real-time monitoring and behavioral analysis to get to the root of the threat.

The Problem With Rate Limiting

Let's say an attacker is using a distributed network of thousands of AI-powered bots, each assigned a unique IP and session. What's more, each bot operates just below the rate-limit threshold, sending requests in a slow, human-like pattern that looks legitimate in isolation.

Because rate limiting works by tracking individual IP addresses, accounts, and sessions, and temporarily blocking requests that exceed pre-set thresholds, no single bot in this scenario would trigger the limit. Collectively, however, the network is performing millions of actions at the same time.

In other words, the problem with rate limiting is that it assumes malicious activity comes from one or a few sources acting too quickly: those sources exceed the allowed thresholds, and therefore they must be abusive. AI-driven attacks break this assumption.

By spreading activity across a vast number of sources and closely mimicking human behavior in the process, they evade traditional thresholds and blend in with normal traffic. The attack can continue uninterrupted without any single IP or session ever being blocked.
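The failure mode can be shown with a toy simulation (not an attack tool) of a per-IP limiter facing the scenario above. The bot count, per-bot request count, and the limit of 5 requests per window are made-up numbers for illustration, and the `bot-N` strings stand in for unique IP addresses.

```python
from collections import Counter
from typing import Tuple


def simulate_distributed_attack(num_bots: int, requests_per_bot: int,
                                per_ip_limit: int) -> Tuple[int, int]:
    """Return (allowed, blocked) for one window under a per-IP fixed-window limit."""
    per_ip_counts: Counter = Counter()
    allowed = blocked = 0
    for bot in range(num_bots):
        ip = f"bot-{bot}"  # stand-in for a unique IP address per bot
        for _ in range(requests_per_bot):
            if per_ip_counts[ip] < per_ip_limit:
                per_ip_counts[ip] += 1
                allowed += 1
            else:
                blocked += 1
    return allowed, blocked
```

`simulate_distributed_attack(1000, 4, 5)` returns `(4000, 0)`: every request gets through because each bot stays under its own limit. The same 4,000 requests from a single IP, `simulate_distributed_attack(1, 4000, 5)`, return `(5, 3995)`. The total volume is identical; only its distribution across sources changes the outcome.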

What Is Modern Rate Limiting?

It's not necessarily a bad thing that rate limiting, in its traditional form, is becoming obsolete. If anything, it's to be expected. The world of cybersecurity is constantly evolving, and important practices that worked a few years ago don't necessarily remain effective when the threat landscape changes just as quickly.

The problem is that many companies still hold on to it like it's a silver bullet. In 2026, nearly all major API providers and public-facing web services use rate limiting, and the practice is close to universal in enterprise-grade web APIs, cloud platform security, and SaaS products.

Against AI brute-force attacks, however, the cracks are bound to show, which is why it's so important for security teams to realize that there's a better option.

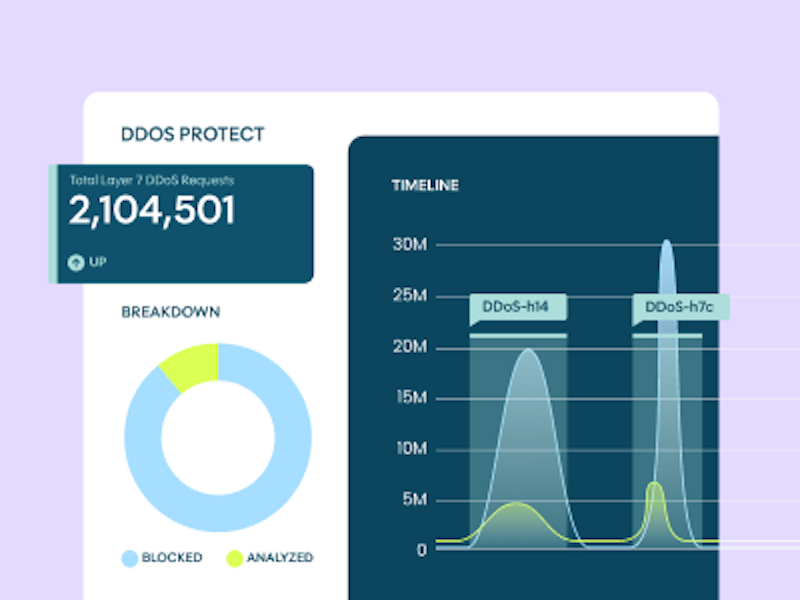

Modern rate limiting isn't so different from traditional rate limiting. The key difference is that it lets you define custom response policies based on request volume within a time window (for instance, per hour or per day), and it is integrated with AI-driven bot detection.

This means that when a distributed brute-force attack is underway, with thousands of bots spreading requests across many IPs, the system can identify which requests are likely automated and throttle them selectively, rather than blindly blocking traffic based on simple criteria. Importantly, this doesn't just protect your systems. It also helps optimize the customer journey by ensuring real customers get fast, uninterrupted access to the platform, which is critical for maximizing conversions.
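A minimal sketch of such a policy layer follows. It assumes a bot-detection component already gives each client a `bot_score` between 0 and 1 and that request counts per window are tracked elsewhere. The `Action`, `Policy`, and `decide` names, the policy values, and the 0.7 cut-off are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Action(Enum):
    # Values double as severity: higher means a stronger response.
    ALLOW = 0
    CHALLENGE = 1
    THROTTLE = 2
    BLOCK = 3


@dataclass(frozen=True)
class Policy:
    window_seconds: int  # e.g. 3600 = per hour, 86400 = per day
    max_requests: int    # requests tolerated in that window
    action: Action       # custom response once the limit is exceeded


# Hypothetical policies: generous for likely humans, strict for likely bots.
HUMAN_POLICIES: List[Policy] = [Policy(3600, 1000, Action.THROTTLE)]
BOT_POLICIES: List[Policy] = [
    Policy(3600, 20, Action.CHALLENGE),
    Policy(86400, 100, Action.BLOCK),
]
BOT_SCORE_THRESHOLD = 0.7  # hypothetical cut-off from the bot-detection layer


def decide(bot_score: float, counts_by_window: Dict[int, int]) -> Action:
    """Pick the most severe action among the policies this client has exceeded."""
    policies = BOT_POLICIES if bot_score >= BOT_SCORE_THRESHOLD else HUMAN_POLICIES
    action = Action.ALLOW
    for policy in policies:
        if counts_by_window.get(policy.window_seconds, 0) > policy.max_requests:
            action = max(action, policy.action, key=lambda a: a.value)
    return action
```

With the same traffic, `{3600: 25, 86400: 25}`, a client scored 0.9 gets `Action.CHALLENGE` while a client scored 0.2 gets `Action.ALLOW`. Keying the strict policies on the bot score, rather than on volume alone, is what lets real customers pass untouched.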

How Modern AI Bot Management Works

It does this through several key mechanisms:

Behavioral Analysis

User interactions are monitored to detect automated behavior. A key term to know here is "behavioral biometrics": patterns such as typing rhythm, mouse movements, and navigation speed that distinguish humans from bots.
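One simple behavioral signal is how uniform a typing rhythm is. The sketch below assumes key-press timestamps in milliseconds are collected client-side and flags input whose inter-key intervals barely vary. The 0.1 threshold is a hypothetical value. Real behavioral biometrics combine many more features, and a bot that adds random jitter would pass this check on its own.

```python
from statistics import mean, pstdev
from typing import List


def keystroke_intervals(timestamps_ms: List[float]) -> List[float]:
    """Time between consecutive key presses."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


def looks_scripted(timestamps_ms: List[float], min_cv: float = 0.1) -> bool:
    """Flag typing whose rhythm is suspiciously uniform.

    Uses the coefficient of variation (std dev / mean) of the intervals;
    `min_cv` is a hypothetical threshold.
    """
    intervals = keystroke_intervals(timestamps_ms)
    if len(intervals) < 2:
        return False  # not enough data to judge
    avg = mean(intervals)
    if avg <= 0:
        return True  # simultaneous or out-of-order key events are not human typing
    return pstdev(intervals) / avg < min_cv
```

Keys pressed exactly every 50 ms (`[0, 50, 100, 150, 200, 250]`) are flagged, while an irregular sequence such as `[0, 180, 260, 510, 590, 820]` is not.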

Device Fingerprinting

Hundreds of device-level attributes are collected to create a unique identifier.
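A device identifier can be derived by hashing a canonical form of the collected attributes. The attribute names used with it are illustrative assumptions. The second, hypothetical helper shows one way the identifier helps against distributed attacks: a single fingerprint appearing behind many IP addresses hints at one device rotating through proxies.

```python
import hashlib
import json
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def device_fingerprint(attributes: Dict[str, str]) -> str:
    """Hash device attributes into a stable hex identifier."""
    # sort_keys makes the result independent of the order attributes were collected in.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def fingerprints_across_many_ips(observations: Iterable[Tuple[str, str]],
                                 min_ips: int = 3) -> Set[str]:
    """Return fingerprints seen behind at least `min_ips` distinct IP addresses."""
    ips_by_fp: Dict[str, Set[str]] = defaultdict(set)
    for fingerprint, ip in observations:
        ips_by_fp[fingerprint].add(ip)
    return {fp for fp, ips in ips_by_fp.items() if len(ips) >= min_ips}
```

For example, `device_fingerprint({"platform": "Linux", "screen": "1920x1080", "timezone": "UTC"})` yields the same 64-character hash regardless of key order, so requests from that device can be linked even when its IP address changes.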

Signature Detection

Known bot frameworks and automation tools are identified by patterns in HTTP headers and TLS fingerprints.
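A rough sketch of signature matching follows. It assumes request headers arrive as a dict and that a TLS client fingerprint (for example a JA3-style hash) has already been computed upstream. The User-Agent patterns are illustrative rather than exhaustive, and `known_bad_tls` stands in for whatever threat-intelligence feed a team actually uses. No real fingerprint values are included.

```python
import re
from typing import Dict, Iterable, List, Optional

# Illustrative User-Agent tokens left by common automation tools and HTTP libraries.
UA_SIGNATURES = [
    re.compile(r"HeadlessChrome", re.I),
    re.compile(r"python-requests", re.I),
    re.compile(r"^curl/", re.I),
    re.compile(r"Scrapy", re.I),
]


def match_signatures(headers: Dict[str, str], tls_fingerprint: Optional[str],
                     known_bad_tls: Iterable[str]) -> List[str]:
    """Return the reasons a request looks like a known bot (empty list = no match)."""
    reasons: List[str] = []
    # HTTP header names are case-insensitive, so normalise them first.
    h = {k.lower(): v for k, v in headers.items()}
    ua = h.get("user-agent", "")
    if not ua:
        reasons.append("missing user-agent")
    for pattern in UA_SIGNATURES:
        if pattern.search(ua):
            reasons.append(f"user-agent matches {pattern.pattern}")
    # Browsers typically send Accept-Language; many scripts do not.
    if "accept-language" not in h:
        reasons.append("missing accept-language")
    if tls_fingerprint is not None and tls_fingerprint in set(known_bad_tls):
        reasons.append("tls fingerprint on blocklist")
    return reasons
```

A request with `User-Agent: python-requests/2.31.0`, no `Accept-Language`, and a blocklisted TLS fingerprint yields three reasons; a typical browser request yields none. Signatures like these are easy to spoof, which is why they are one layer among several.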

Reputational Detection

IP addresses and data center origins are evaluated against historical threat data.
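A minimal reputation check, assuming a list of data center CIDR ranges and a per-IP count of past incidents are available. The ranges below are RFC 5737 documentation blocks used as placeholders for a real feed, and the weights are hypothetical.

```python
import ipaddress
from typing import Dict

# Placeholder ranges (RFC 5737 documentation blocks) standing in for a real
# feed of hosting and data center prefixes.
DATACENTER_RANGES = [ipaddress.ip_network(c) for c in ("203.0.113.0/24", "198.51.100.0/24")]


def reputation_score(ip: str, abuse_history: Dict[str, int]) -> float:
    """Return a 0..1 risk score from network origin and past incidents (hypothetical weights)."""
    addr = ipaddress.ip_address(ip)
    score = 0.0
    # Traffic from hosting providers is less likely to be a real customer's browser.
    if any(addr in net for net in DATACENTER_RANGES):
        score += 0.5
    incidents = abuse_history.get(ip, 0)
    score += min(incidents, 5) * 0.1  # cap the historical contribution at 0.5
    return min(score, 1.0)
```

A data center address with no history scores 0.5; a residential address with two past incidents scores 0.2. On its own this would also penalize legitimate users behind cloud-hosted VPNs, which is why it is best combined with other signals.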

Intent Analysis

The system distinguishes between benign automation and malicious activity.
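Intent is harder to reduce to code, but a rule-based sketch shows the idea: the same "automated" verdict leads to different outcomes depending on what the session is doing. The `SessionSummary` fields, the sensitive paths, and the thresholds are hypothetical, and a real system would weigh far more context.

```python
from dataclasses import dataclass, field
from typing import Set

SENSITIVE_PATHS = {"/login", "/password-reset", "/checkout"}  # hypothetical


@dataclass
class SessionSummary:
    is_automated: bool       # verdict from the other detection signals
    verified_crawler: bool   # e.g. a search-engine bot confirmed via reverse DNS
    endpoints: Set[str] = field(default_factory=set)  # paths the session requested
    login_attempts: int = 0
    login_failures: int = 0


def classify_intent(s: SessionSummary) -> str:
    if not s.is_automated:
        return "human"
    touches_sensitive = bool(s.endpoints & SENSITIVE_PATHS)
    if s.verified_crawler and not touches_sensitive:
        return "benign-automation"      # e.g. indexing public pages
    if s.login_attempts >= 10 and s.login_failures / s.login_attempts > 0.9:
        return "malicious-automation"   # pattern consistent with brute force
    if touches_sensitive:
        return "suspicious-automation"  # automated, unverified, near sensitive flows
    return "unverified-automation"
```

A verified crawler reading the blog is classed as benign, while an unverified script that fails 49 of 50 logins is classed as malicious, even if both send requests at the same rate.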

Together, these mechanisms not only identify and block AI brute-force attacks but also adapt continuously to new threats, creating a constantly evolving defence system that doesn't become obsolete.
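One way to picture the mechanisms working together is a weighted risk score that maps to a tiered response. The signal names, weights, and cut-offs below are hypothetical placeholders, with each signal assumed to be normalized to 0..1. An adaptive system would keep re-tuning these values as threats change rather than fixing them as constants.

```python
from typing import Dict

# Hypothetical weights for each detection signal.
WEIGHTS = {
    "behavioral": 0.3,
    "fingerprint": 0.2,
    "signature": 0.2,
    "reputation": 0.15,
    "intent": 0.15,
}


def combined_risk(signals: Dict[str, float]) -> float:
    """Weighted average of whichever known signals are present (others are ignored)."""
    present = {k: v for k, v in signals.items() if k in WEIGHTS}
    total_weight = sum(WEIGHTS[k] for k in present)
    if total_weight == 0:
        return 0.0
    # Clamp each signal to 0..1 before weighting.
    weighted = sum(WEIGHTS[k] * min(max(v, 0.0), 1.0) for k, v in present.items())
    return weighted / total_weight


def response_for(risk: float) -> str:
    """Map a risk score to a tiered response (hypothetical cut-offs)."""
    if risk >= 0.8:
        return "block"
    if risk >= 0.5:
        return "challenge"  # e.g. step-up verification or selective throttling
    return "allow"
```

A session with strong behavioral and signature evidence (`{"behavioral": 1.0, "signature": 1.0}`) is blocked, while one flagged only by reputation (`{"behavioral": 0.0, "reputation": 1.0}`, risk of about 0.33) is allowed. No single weak signal decides the outcome.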

As mentioned earlier, this matters even more in a landscape where attackers are highly adaptive. But that's not all: it also puts control and insight back into the hands of the security team.

You can decide which traffic gets prioritized and which gets restricted or blocked. You can see which attacks are being attempted and what legitimate activity is taking place. In other words, it turns an ordinary defensive measure into an intelligent, proactive system that both protects and empowers those who use it.