Latency can pose safety risks in collaborative assembly cells. Source: Cogniedge.ai

Cloud-based vision systems have improved industrial analytics and predictive maintenance, but they fall short when real-time safety and throughput matter most on the shop floor. In high-mix collaborative assembly cells, even modest network latency can turn a promising human-robot collaboration (HRC) setup into a stop-and-go bottleneck.

The industry’s shift toward more collaborative robots calls for more than safer cages or slower speeds. It requires architectures that let cobots dynamically adapt to human movement and fatigue while maintaining cycle time and safety.

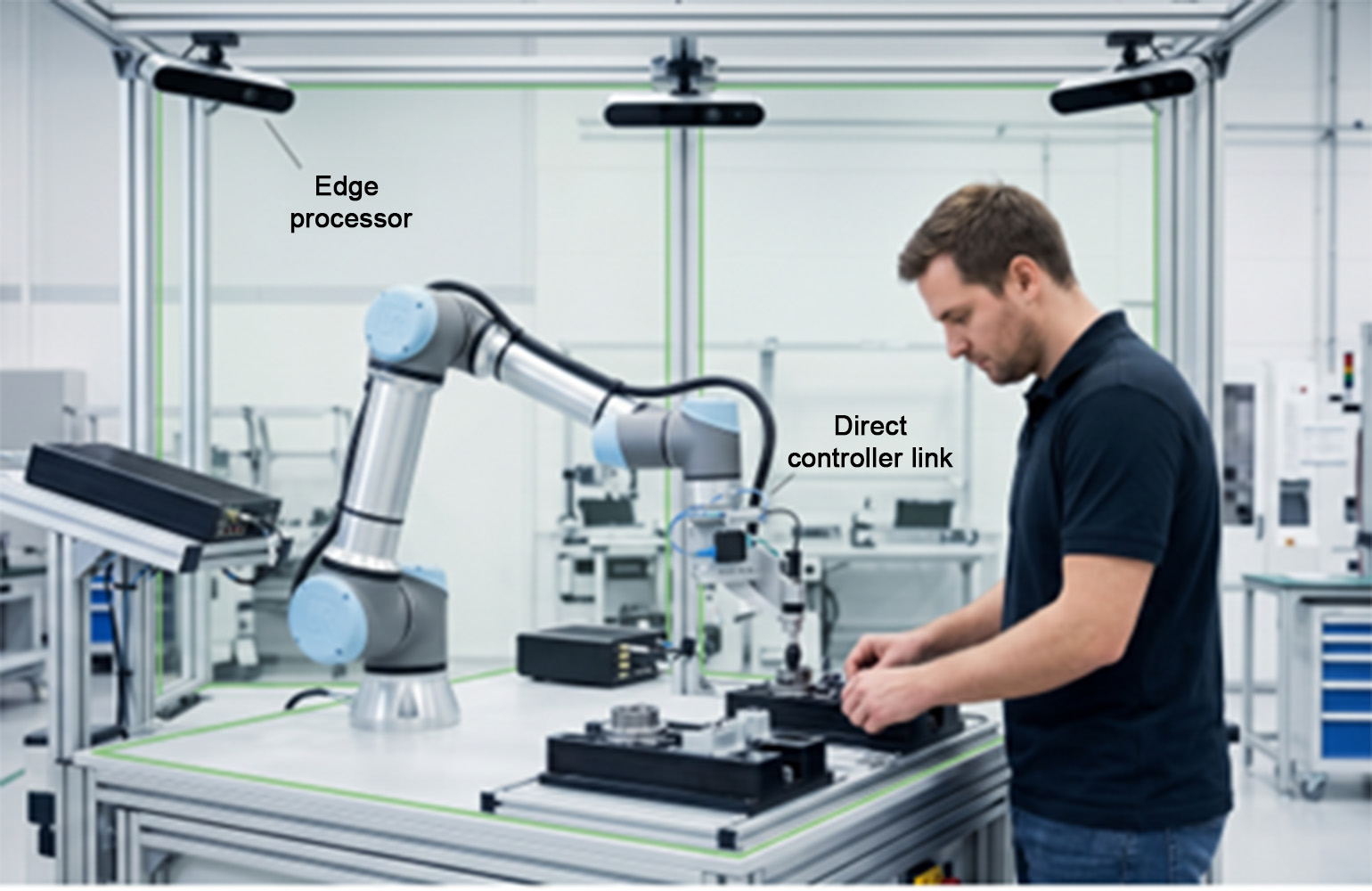

The key is moving AI inference to the edge and establishing a direct, low-latency bridge from the edge processor straight to the robot controller, bypassing the legacy PLC (programmable logic controller) for dynamic kinematic adjustments.

The physics of latency in speed and separation monitoring

Source: Cogniedge.ai

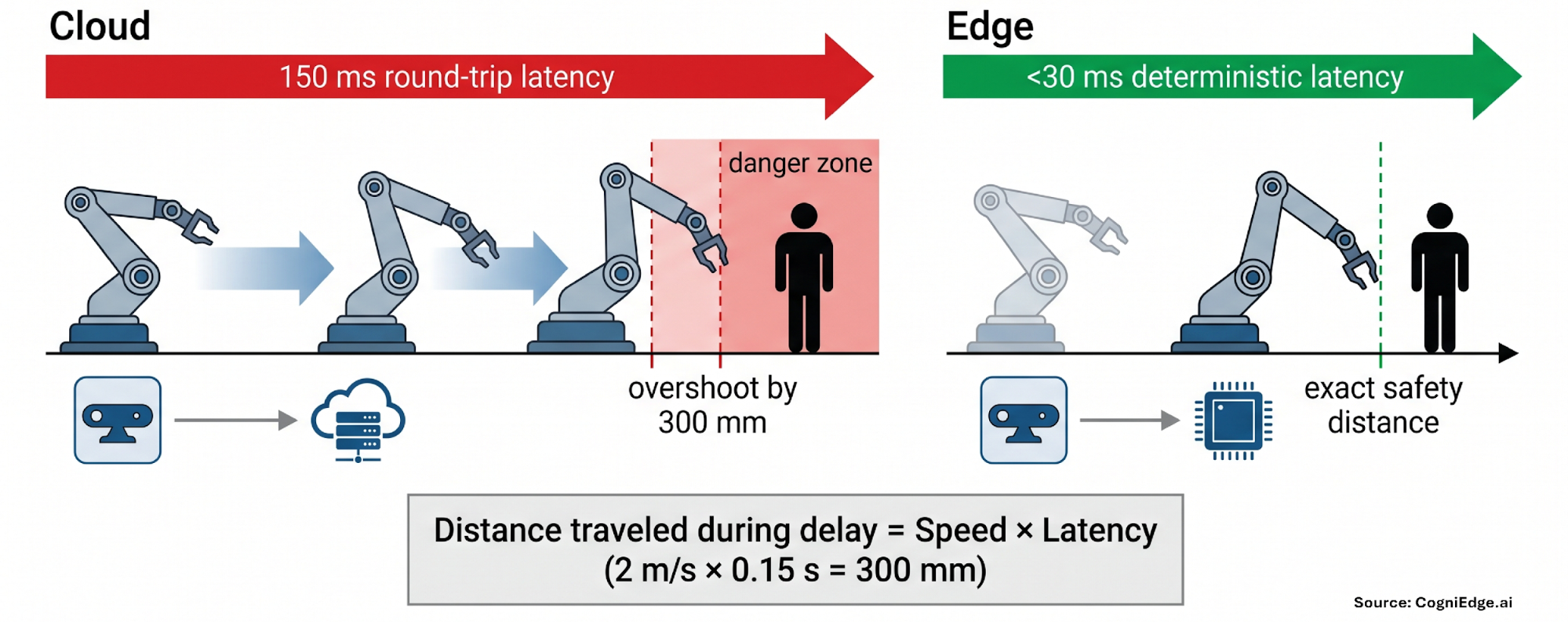

ISO/TS 15066 defines speed and separation monitoring (SSM) as a core safety method for collaborative robots. The standard requires the robot to maintain a protective separation distance from the operator and to reduce speed or stop if that distance is breached.

Consider a typical high-fidelity depth camera feeding skeletal tracking data to a remote server. Round-trip latency, including image transmission, inference, and command return, commonly ranges from 100 to 200 milliseconds.

At a moderate arm speed of 2 m/s, the robot travels 200 to 400 mm (7.9 to 15.7 in.) during that delay. In a compact collaborative cell, a 300 mm (11.8 in.) blind spot is the difference between safe operation and potential injury.
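The arithmetic behind those figures is simply speed multiplied by delay. A minimal sketch, where the function name `travel_during_latency_mm` is an illustrative choice and not something from the article:

```python
def travel_during_latency_mm(speed_m_s: float, latency_ms: float) -> float:
    """Distance in mm the arm covers before a delayed command can take effect."""
    # (m/s) * (ms) = mm, because the two factors of 1000 cancel.
    return speed_m_s * latency_ms


# The article's numbers: a 2 m/s arm and 100 to 200 ms of round-trip latency.
for latency_ms in (100.0, 200.0):
    distance = travel_during_latency_mm(2.0, latency_ms)
    print(f"{latency_ms:.0f} ms -> {distance:.0f} mm")
```

The same function shows how quickly the blind spot shrinks as latency falls, since the relationship is linear.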

To compensate, engineers widen safety zones and program conservative speeds or frequent protective stops. The result is reduced throughput that defeats the purpose of collaborative automation.

True real-time SSM in dynamic environments requires deterministic end-to-end latency below 30 ms, something that is only possible when processing occurs millimeters from the sensor and the decision path connects directly to the motion controller.
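To see why latency forces wider safety zones, it helps to estimate a separation distance as a function of delay. The sketch below is a simplified, constant-speed approximation and not the full ISO/TS 15066 formula: it leaves out intrusion distance and sensor and robot position uncertainty. The human approach speed of 1.6 m/s, the 100 ms stopping time, and the linear deceleration are all assumptions chosen for illustration.

```python
def separation_distance_mm(latency_ms: float,
                           robot_speed_m_s: float = 2.0,
                           human_speed_m_s: float = 1.6,   # assumed approach speed
                           stop_time_ms: float = 100.0) -> float:  # assumed stop time
    """Rough separation distance (mm) needed for a given sensing-to-command latency."""
    # The operator keeps approaching during both the latency and the robot's stop.
    human_travel = human_speed_m_s * (latency_ms + stop_time_ms)
    # The robot moves at full speed until the delayed command arrives.
    robot_reaction_travel = robot_speed_m_s * latency_ms
    # Assumed linear deceleration to zero: average speed is half the initial speed.
    robot_stop_travel = 0.5 * robot_speed_m_s * stop_time_ms
    return human_travel + robot_reaction_travel + robot_stop_travel


cloud = separation_distance_mm(150.0)  # midpoint of the 100 to 200 ms range
edge = separation_distance_mm(30.0)    # the 30 ms target
print(f"cloud path: {cloud:.0f} mm, edge path: {edge:.0f} mm")
```

Under these assumptions the required distance drops from roughly 800 mm to roughly 368 mm, which is the space engineers otherwise have to recover by slowing the robot down.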

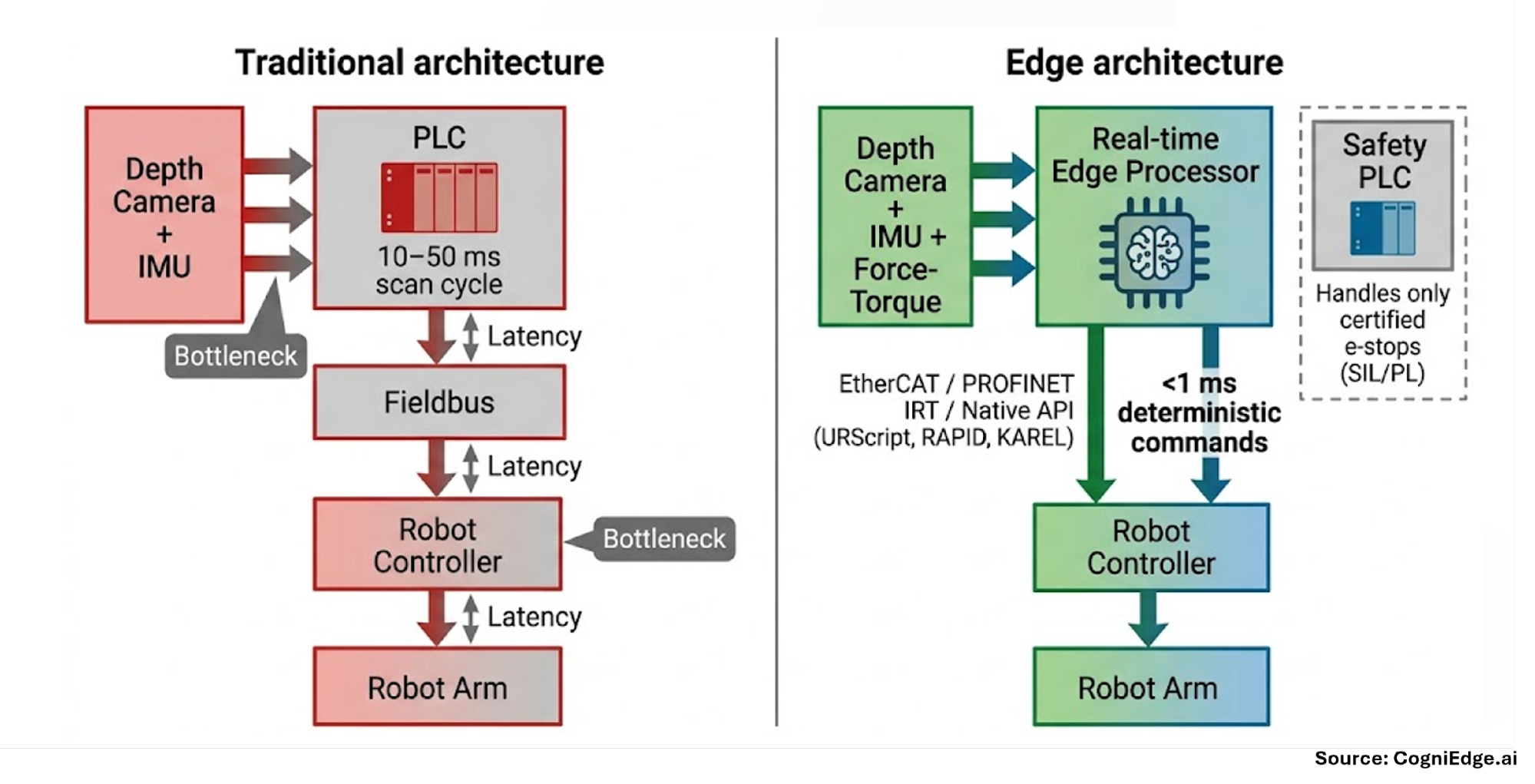

Why legacy PLCs create an unacceptable bottleneck

Most brownfield cells still rely on traditional PLCs for safety logic. These devices were engineered for deterministic, discrete I/O and scan cycles typically ranging from 10 to 50 ms. They excel at reading a light curtain or an e-stop but struggle with the high-bandwidth, multidimensional data streams coming from modern vision systems, such as skeletal tracking, micro-movement analysis, and operator state estimation.

Routing edge AI inferences through the PLC adds another full scan cycle plus fieldbus overhead. The cumulative delay destroys the determinism needed for proactive SSM.
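A latency budget makes the cumulative effect visible. In the sketch below, only the 50 ms worst-case PLC scan and the 30 ms end-to-end target come from the figures quoted earlier; the inference and fieldbus numbers are placeholder assumptions that would need to be measured on a real cell.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    worst_case_ms: float


def total_latency_ms(stages) -> float:
    """Sum the worst-case delay of every stage in a command path."""
    return sum(stage.worst_case_ms for stage in stages)


BUDGET_MS = 30.0  # end-to-end target for real-time SSM

# Path routed through the PLC (inference and fieldbus values are assumptions).
via_plc = [
    Stage("sensor capture + edge inference", 15.0),
    Stage("fieldbus hop to PLC", 2.0),
    Stage("PLC scan cycle (worst case)", 50.0),
    Stage("fieldbus hop to robot controller", 2.0),
]

# Direct path from the edge processor to the robot controller.
direct = [
    Stage("sensor capture + edge inference", 15.0),
    Stage("direct link to robot controller", 2.0),
]

for label, path in (("via PLC", via_plc), ("direct", direct)):
    total = total_latency_ms(path)
    verdict = "within" if total <= BUDGET_MS else "over"
    print(f"{label}: {total:.0f} ms ({verdict} the {BUDGET_MS:.0f} ms budget)")
```

With these placeholder numbers the PLC path lands well over budget while the direct path stays inside it, which is the pattern the argument above predicts.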

In practice, many integrators find themselves forced to run the robot at reduced speeds or accept frequent interruptions even when the AI knows the situation is safe.

Source: Cogniedge.ai

Building the direct edge-to-controller bridge

The solution is a localized real-time safety processor that sits at the workcell and communicates directly with the robot controller, bypassing the PLC for non-safety-critical but time-sensitive adjustments.

This layer ingests multi-modal sensor data (depth cameras, IMUs, force-torque sensors) at the edge, runs low-latency AI inference, and injects updated commands into the robot’s motion planner via high-speed industrial protocols. Common implementation paths include:

- EtherCAT or PROFINET IRT for sub-millisecond deterministic cycles when the controller supports fieldbus extension.

- Real-time UDP or native robot APIs (URScript for Universal Robots, RAPID for ABB, KAREL for FANUC) for direct socket communication to the motion controller.
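As a rough illustration of the UDP path, the sketch below packs a setpoint into a fixed-size datagram and sends it over a socket. The packet layout, field names, and helper functions are hypothetical and invented for this example; a real deployment would use the interface documented by the robot vendor, and none of this replaces the safety-rated channel.

```python
import socket
import struct

# Hypothetical wire format (little-endian): sequence number, speed scale,
# maximum acceleration in m/s^2, torque scale. Not a vendor protocol.
SETPOINT = struct.Struct("<Ifff")


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def encode_setpoint(seq: int, speed_scale: float,
                    max_accel_m_s2: float, torque_scale: float) -> bytes:
    """Pack a setpoint, clamping the scale factors to the range 0..1."""
    return SETPOINT.pack(seq,
                         clamp(speed_scale, 0.0, 1.0),
                         max(max_accel_m_s2, 0.0),
                         clamp(torque_scale, 0.0, 1.0))


def decode_setpoint(payload: bytes) -> tuple:
    """Unpack a datagram produced by encode_setpoint."""
    return SETPOINT.unpack(payload)


def loopback_demo() -> tuple:
    """Send one setpoint to a local UDP socket that stands in for the controller."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        receiver.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
        receiver.settimeout(1.0)
        sender.sendto(encode_setpoint(1, 0.5, 2.0, 0.75), receiver.getsockname())
        payload, _ = receiver.recvfrom(SETPOINT.size)
        return decode_setpoint(payload)
    finally:
        sender.close()
        receiver.close()
```

Calling `loopback_demo()` round-trips one packet on the local machine. The sequence number lets a receiver discard stale or reordered datagrams, which matters because UDP gives no delivery or ordering guarantees.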

The safety-rated PLC continues to handle certified emergency stops and SIL/PL-rated functions. The edge processor acts as a parallel, high-speed channel that continuously updates trajectory, speed, and force setpoints without waiting for the next PLC scan. This “safety coprocessor” architecture maintains full compliance while enabling proactive behavior.

Adjusting kinematics on the fly in high-mix cells

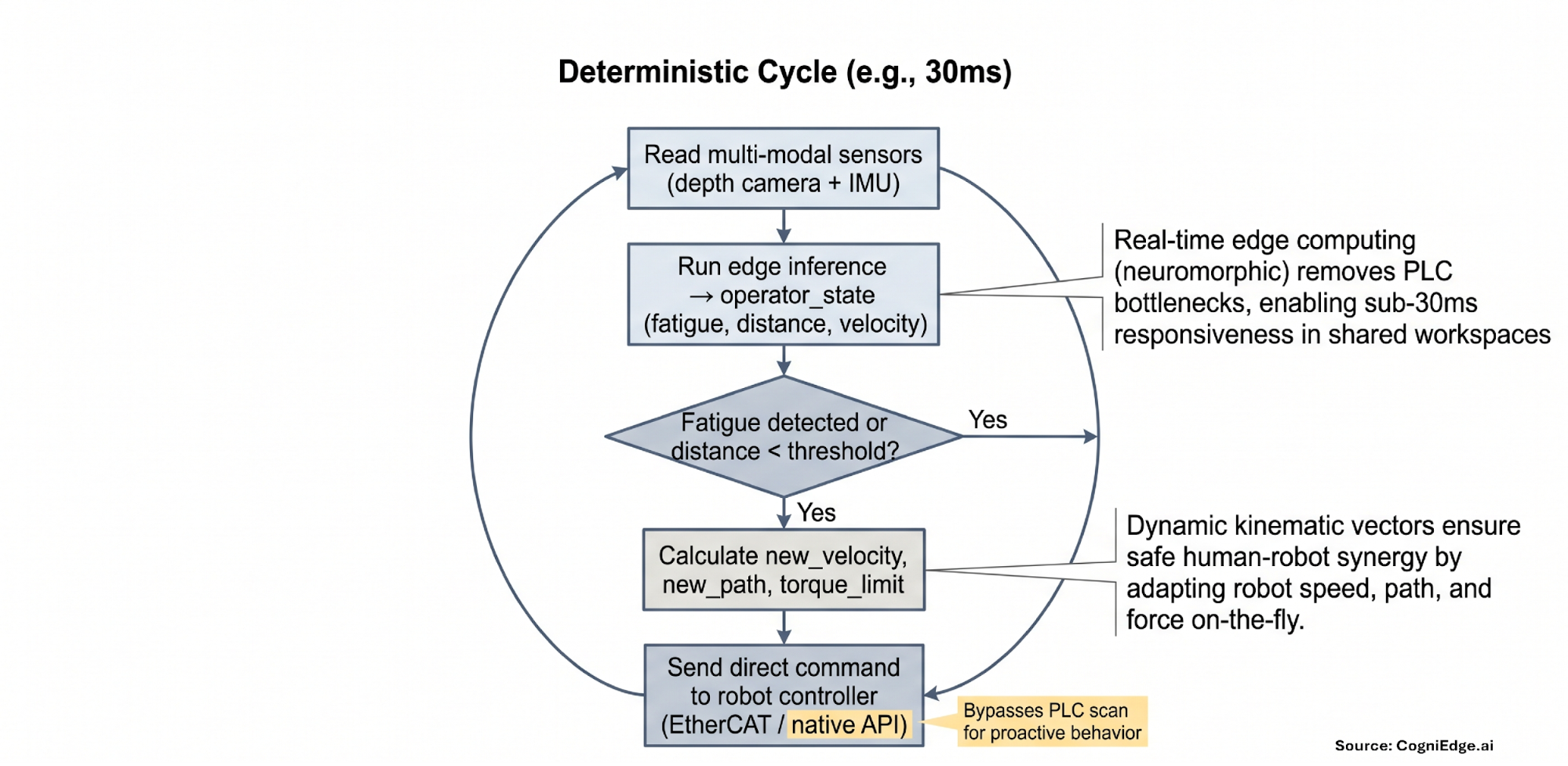

With the latency gap closed and a direct command path established, the cobot can move from reactive stopping to continuous, adaptive collaboration.

In a high-mix assembly station, an operator’s movements may become slower or more erratic toward the end of a shift, which can be an early indicator of fatigue. The edge processor detects these micro-deviations in real time through skeletal tracking and velocity profiling.
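One simple way to turn "slower or more erratic" into numbers is to compare a recent window of hand speeds against a baseline window. The sketch below is an assumed heuristic, not the method used by any particular product: the function names and both thresholds are illustrative, and the speed samples are presumed to come from an upstream skeletal tracker.

```python
from statistics import mean, pstdev


def fatigue_indicators(baseline_speeds, recent_speeds):
    """Return (slowdown, erratic_ratio) for recent motion relative to a baseline.

    slowdown is the fractional drop in mean speed (0.3 means 30% slower).
    erratic_ratio is recent speed variability divided by baseline variability.
    """
    slowdown = 1.0 - mean(recent_speeds) / mean(baseline_speeds)
    base_sd = pstdev(baseline_speeds)
    recent_sd = pstdev(recent_speeds)
    if base_sd == 0.0:
        # A perfectly constant baseline: any recent variation counts as erratic.
        erratic_ratio = 1.0 if recent_sd == 0.0 else float("inf")
    else:
        erratic_ratio = recent_sd / base_sd
    return slowdown, erratic_ratio


def looks_fatigued(baseline_speeds, recent_speeds,
                   slowdown_threshold=0.2,   # assumed: 20% slower than baseline
                   erratic_threshold=1.5):   # assumed: 50% more variable
    slowdown, erratic_ratio = fatigue_indicators(baseline_speeds, recent_speeds)
    return slowdown >= slowdown_threshold or erratic_ratio >= erratic_threshold


baseline = [1.0, 1.1, 0.9, 1.0]      # hand speed in m/s early in the shift
late_shift = [0.6, 0.9, 0.5, 0.8]    # slower and more variable
print(looks_fatigued(baseline, late_shift))
```

A production system would likely use longer windows, per-operator baselines, and richer features, but the comparison of a recent profile against a reference is the core idea.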

Instead of triggering a protective stop, the system issues immediate kinematic adjustments:

- Reduce maximum acceleration from 5 m/s² to 2 m/s².

- Widen the approach angle by 15° to give the operator more room.

- Lower torque limits on approach axes to reduce collision energy.
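The three adjustments listed above can be sketched as a pure function that maps the nominal motion limits to adapted ones. The `MotionLimits` type and its field names are hypothetical, and the 0.7 torque scale is an assumed value, since the text gives no figure for the torque reduction.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MotionLimits:
    max_accel_m_s2: float = 5.0             # nominal acceleration limit
    approach_angle_offset_deg: float = 0.0  # extra clearance angle on approach
    torque_scale: float = 1.0               # fraction of nominal torque limit


def adapt_for_fatigue(limits: MotionLimits, fatigued: bool,
                      fatigued_torque_scale: float = 0.7) -> MotionLimits:
    """Return softened limits when fatigue is detected, else the limits unchanged."""
    if not fatigued:
        return limits
    return replace(
        limits,
        max_accel_m_s2=min(limits.max_accel_m_s2, 2.0),   # 5 m/s^2 down to 2 m/s^2
        approach_angle_offset_deg=15.0,                   # widen approach by 15 degrees
        torque_scale=min(limits.torque_scale, fatigued_torque_scale),
    )


nominal = MotionLimits()
print(adapt_for_fatigue(nominal, fatigued=True))
```

Setting absolute targets instead of subtracting increments keeps the function idempotent, so running it on every control cycle cannot ratchet the limits further than intended.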

This approach keeps the cell in continuous motion. The robot adapts its behavior to the human’s immediate state rather than defaulting to a hard stop, preserving both safety and productivity. A simplified flow chart illustration of the decision loop looks like this:

Source: Cogniedge.ai

The hardware requirements for edge-first safety for collaborative robots

Factory floors have limited space and power. Edge processors for this use case must operate below 1 W while delivering real-time inference on temporal data streams. Neuromorphic chips and spiking neural networks (SNNs) are particularly well suited because they process change detection and time-series data with high efficiency and low latency.
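The efficiency argument rests on event-driven processing: a spiking neuron does work only when its input changes. The toy sketch below pairs delta encoding with a leaky integrate-and-fire neuron to show the idea. It is a textbook-style illustration with assumed leak and threshold values, and it does not model any specific neuromorphic chip.

```python
def frame_changes(samples):
    """Delta encoding: absolute change between consecutive sensor samples."""
    return [abs(later - earlier) for earlier, later in zip(samples, samples[1:])]


def lif_spikes(inputs, leak=0.9, threshold=1.0):
    """Leaky integrate-and-fire neuron: return a 0/1 spike train for the inputs."""
    potential = 0.0
    spikes = []
    for value in inputs:
        # Decay the stored potential, then integrate the new input.
        potential = leak * potential + value
        if potential >= threshold:
            spikes.append(1)
            potential = 0.0  # reset after firing
        else:
            spikes.append(0)
    return spikes


# A signal that sits still, jumps once, then sits still again.
samples = [0.0, 0.0, 0.0, 2.0, 2.0, 2.0]
print(lif_spikes(frame_changes(samples)))
```

The static portions of the signal produce no spikes at all, so downstream computation is triggered only by the single jump. That sparsity is what makes very low power budgets plausible for change-detection workloads.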

These compact, fanless modules mount directly in or near the work cell, connect via standard industrial Ethernet, and integrate with existing robot controllers without requiring new cabinets or major rewiring.

Practical benefits for systems integrators

By implementing direct edge-to-controller architectures, the industry can finally deliver on the ultimate promise of high-mix collaborative cells: fluid interaction that maintains takt time without sacrificing safety. This shift unlocks immediate value across the entire manufacturing ecosystem.

For systems integrators, it offers a scalable approach that works in brownfield environments, leverages standard protocols across robot brands, and preserves existing investments in safety-rated PLCs. For manufacturers, it protects the bottom line by eliminating the frequent micro-stops that traditionally destroy cycle times. Most importantly, for the operators working on the line, it creates a safer, fatigue-aware environment where the robot acts as a true, responsive partner rather than a rigid machine.

As collaborative automation grows more complex, closing the latency loop at the controller level will be the defining factor that separates successful, high-throughput deployments from those limited by legacy bottlenecks.

About the author

Madhu Gaganam is the founder and CEO of Cogniedge.ai and an engineering technologist with more than 30 years of industrial automation experience at companies including Rockwell Automation, Gartner, NXP, and Dell. A recognized industry authority, he is a Top 10 Robotics Thought Leader on Thinkers360, co-chair of the Digital Twin Consortium, and an active IEEE RAS member.

The post Closing the latency gap: Why physical AI requires edge-first architectures appeared first on The Robot Report.